SLIDE 1

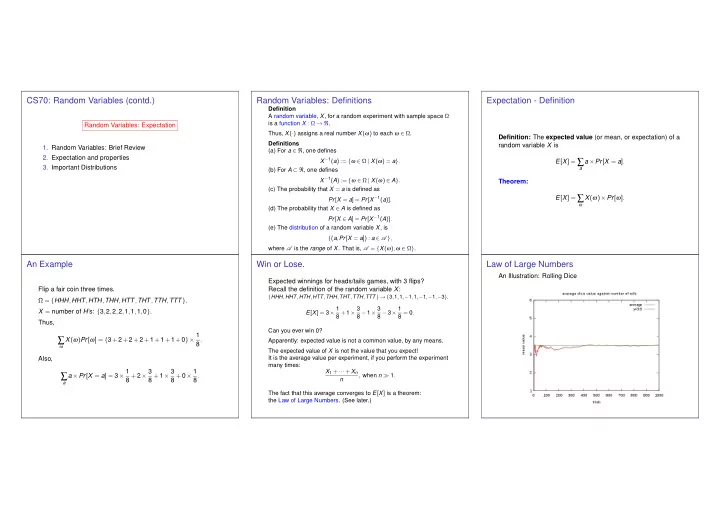

CS70: Random Variables (contd.)

Random Variables: Expectation

1. Random Variables: Brief Review

2. Expectation and properties

3. Important Distributions