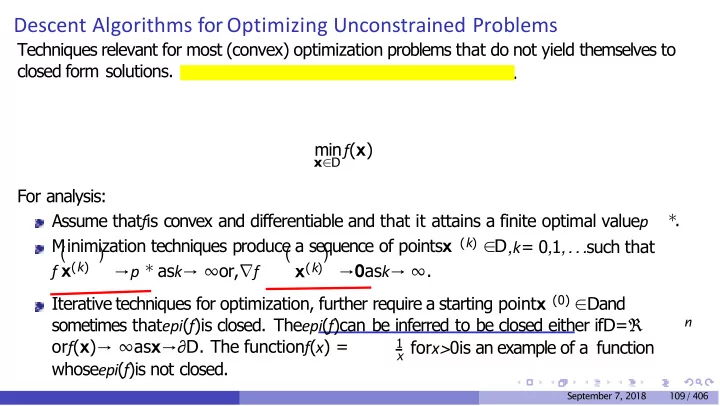

Descent Algorithms for Optimizing Unconstrained Problems

Techniques relevant for most (convex) optimization problems that do not lend themselves to closed-form solutions. We will start with unconstrained minimization.

$$\min_{x \in D} f(x)$$

For analysis: assume that $f$ is convex and differentiable and that it attains a finite optimal value $p^*$.

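Minimization techniques produce a sequence of points $x^{(k)} \in D$, $k = 0, 1, \ldots$, such that $f(x^{(k)}) \to p^*$ as $k \to \infty$ or $\nabla f(x^{(k)}) \to 0$ as $k \to \infty$. Iterative techniques for optimization further require a starting point $x^{(0)} \in D$ and sometimes that $\mathrm{epi}(f) = \{(x, t) : x \in D,\ f(x) \le t\}$ is closed. $\mathrm{epi}(f)$ can be inferred to be closed if either $D = \Re^n$ or $f(x) \to \infty$ as $x \to \partial D$.

By the second condition, $f(x) = \frac{1}{x}$ for $x > 0$ has a closed epigraph, since $f(x) \to \infty$ as $x \to 0^+$; it does not, however, attain its infimum of $0$. The function $f(x) = x \log x$ for $x > 0$ is an example of a function whose $\mathrm{epi}(f)$ is not closed: $f(x) \to 0$ as $x \to 0^+$, so $(0, 0)$ is a limit point of $\mathrm{epi}(f)$ that lies outside it.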
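The sequence of iterates described above can be sketched in Python. This is a minimal illustration, assuming fixed-step gradient descent (one particular descent method, chosen here for illustration and not prescribed by the text) applied to a hypothetical convex quadratic. The names `gradient_descent`, `step`, `tol`, `max_iter`, and the data `A`, `b` are assumptions made for this sketch.

```python
import numpy as np

def gradient_descent(f, grad_f, x0, step=0.1, tol=1e-8, max_iter=10_000):
    """Fixed-step gradient descent; returns the iterates x^(0), x^(1), ..."""
    x = np.asarray(x0, dtype=float)
    iterates = [x.copy()]
    for _ in range(max_iter):
        g = grad_f(x)
        if np.linalg.norm(g) <= tol:  # stop once the gradient is nearly zero
            break
        x = x - step * g              # step along the negative gradient
        iterates.append(x.copy())
    return iterates

# Hypothetical convex quadratic f(x) = 0.5 x^T A x - b^T x with D = R^2,
# so epi(f) is closed and the minimum is attained (A is positive definite).
A = np.array([[3.0, 1.0], [1.0, 2.0]])
b = np.array([1.0, 1.0])

def f(x):
    return 0.5 * x @ A @ x - b @ x

def grad_f(x):
    return A @ x - b

iterates = gradient_descent(f, grad_f, x0=[0.0, 0.0])

# Closed-form minimizer, used here only to check the limit of the sequence.
x_star = np.linalg.solve(A, b)
p_star = f(x_star)
```

Here `f(iterates[-1])` can be compared against `p_star`, and `grad_f(iterates[-1])` against zero. A problem with a closed-form solution is used only so that the limit of the sequence can be verified; the step size 0.1 is an assumption that happens to be small enough for this particular `A`.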

September 7, 2018 109 / 406