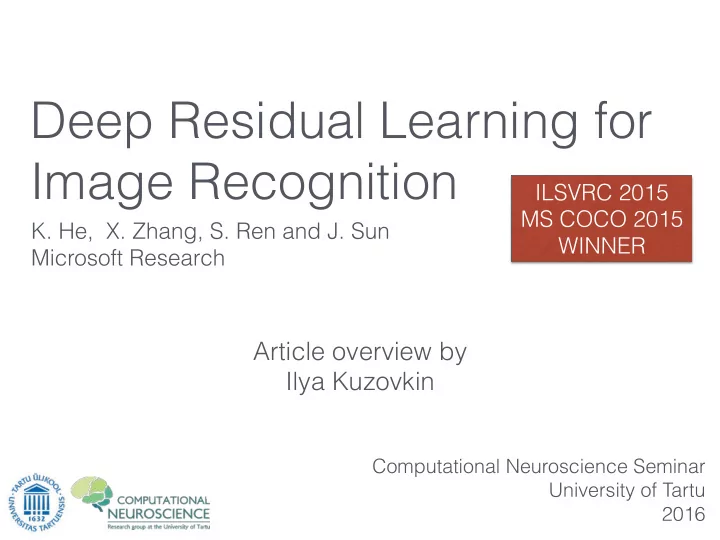

Deep Residual Learning for Image Recognition

K. He, X. Zhang, S. Ren and J. Sun (Microsoft Research)

ILSVRC 2015 and MS COCO 2015 winner

Article overview by Ilya Kuzovkin, Computational Neuroscience Seminar, University of Tartu, 2016


THE IDEA


- 8 layers: 15.31% error
- 9 layers, 2x params: 11.74% error
- 19 layers: 7.41% error

- Conv Conv Conv Conv: trained and tested, accuracy X%
- Conv Conv Conv Conv Identity Identity Identity Identity: the trained network above with identity layers appended, tested: same performance
- Conv Conv Conv Conv Conv Conv Conv Conv: trained and tested: worse!
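The construction argument behind the diagram can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: small dense layers with ReLU stand in for the convolutional layers, the "trained" weights are random placeholders, and the helper names (`linear`, `forward`, `identity`) are hypothetical. It shows that appending identity layers to a shallow network leaves its output unchanged, so a deeper network should in principle be able to do at least as well as the shallow one.

```python
import random

def relu(v):
    """Element-wise ReLU on a list of floats."""
    return [max(a, 0.0) for a in v]

def linear(v, w):
    """Multiply row vector v by matrix w (given as a list of rows)."""
    return [sum(v[i] * w[i][j] for i in range(len(v))) for j in range(len(w[0]))]

def forward(v, layers):
    """Apply each layer (linear map followed by ReLU) in order."""
    for w in layers:
        v = relu(linear(v, w))
    return v

def identity(n):
    """n x n identity matrix."""
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]

random.seed(0)
n = 6

# Placeholder for a trained 4-layer network (random weights, assumption).
shallow = [[[random.gauss(0.0, 0.5) for _ in range(n)] for _ in range(n)]
           for _ in range(4)]

# Deeper network by construction: the same 4 layers plus 4 identity layers.
# Activations after ReLU are non-negative, so identity + ReLU changes nothing.
deeper = shallow + [identity(n) for _ in range(4)]

x = [random.gauss(0.0, 1.0) for _ in range(n)]
out_shallow = forward(x, shallow)
out_deeper = forward(x, deeper)
same_output = out_shallow == out_deeper
print(same_output)
```

The point of the slide is that an 8-layer network trained from scratch nevertheless ends up worse than this hand-built solution, which suggests an optimization difficulty rather than a lack of capacity.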

$H(x)$ is the true function we want to learn.

Let's pretend we want to learn $F(x) = H(x) - x$ instead.

The original function is then $H(x) = F(x) + x$.
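A minimal sketch of this reformulation, assuming dense layers in place of convolutions and hypothetical helper names: the block computes a residual F(x) with two layers and adds the input x back through a shortcut, so its output is F(x) + x. When the residual weights are all zero, F(x) = 0 and the block reduces to the identity, which is the case the plain stacked layers struggle to learn.

```python
import random

def relu(v):
    """Element-wise ReLU on a list of floats."""
    return [max(a, 0.0) for a in v]

def linear(v, w):
    """Multiply row vector v by matrix w (given as a list of rows)."""
    return [sum(v[i] * w[i][j] for i in range(len(v))) for j in range(len(w[0]))]

def residual_block(x, w1, w2):
    """Return relu(F(x) + x), where F is two linear layers with a ReLU between."""
    residual = linear(relu(linear(x, w1)), w2)   # F(x)
    return relu([f + a for f, a in zip(residual, x)])  # shortcut adds x back

random.seed(0)
n = 5

# Non-negative input, as it would be after a preceding ReLU (assumption).
x = [abs(random.gauss(0.0, 1.0)) for _ in range(n)]

# Zero residual weights: F(x) = 0, so the block passes x through unchanged.
zeros = [[0.0] * n for _ in range(n)]
identity_out = residual_block(x, zeros, zeros)
is_identity = identity_out == x

# Small random weights: the block outputs x plus a learned correction.
w1 = [[random.gauss(0.0, 0.1) for _ in range(n)] for _ in range(n)]
w2 = [[random.gauss(0.0, 0.1) for _ in range(n)] for _ in range(n)]
out = residual_block(x, w1, w2)
print(is_identity, len(out))
```

With this parameterization, "do nothing" corresponds to driving the weights toward zero, which is an easy target for the optimizer, rather than fitting an identity mapping through a stack of nonlinear layers.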

- 8 layers: 15.31% error
- 9 layers, 2x params: 11.74% error
- 19 layers: 7.41% error
- 152 layers: 3.57% error

This indicates that the degradation problem is well addressed and we manage to obtain accuracy gains from increased depth.


Compared to its plain counterpart, the 34-layer ResNet reduces the top-1 error by 3.5%.

thus ResNet eases the optimization by providing faster convergence at the early stage.

- ImageNet Classification 2015: 1st place, 3.57% error
- ImageNet Object Detection 2015: 1st place, 194 / 200 categories
- ImageNet Object Localization 2015: 1st place, 9.02% error
- COCO Detection 2015: 1st place, 37.3%
- COCO Segmentation 2015: 1st place, 28.2%

http://research.microsoft.com/en-us/um/people/kahe/ilsvrc15/ilsvrc2015_deep_residual_learning_kaiminghe.pdf