Data Management Systems

- Access Methods

- Pages and Blocks

- Indexing

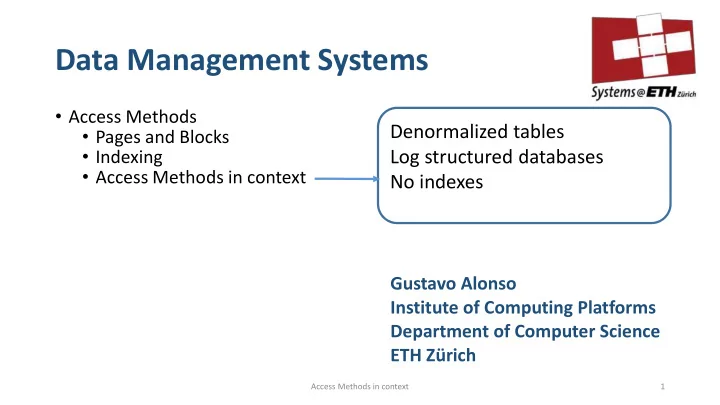

- Access Methods in context

Gustavo Alonso, Institute of Computing Platforms, Department of Computer Science, ETH Zürich

1 Access Methods in context

- Denormalized tables

- Log structured databases

- No indexes