1

CSE 473: Artificial Intelligence Hidden Markov Models

Daniel Weld University of Washington

[Many of these slides were created by Dan Klein and Pieter Abbeel for CS188 Intro to AI at UC Berkeley. All CS188 materials are available at http://ai.berkeley.edu.]

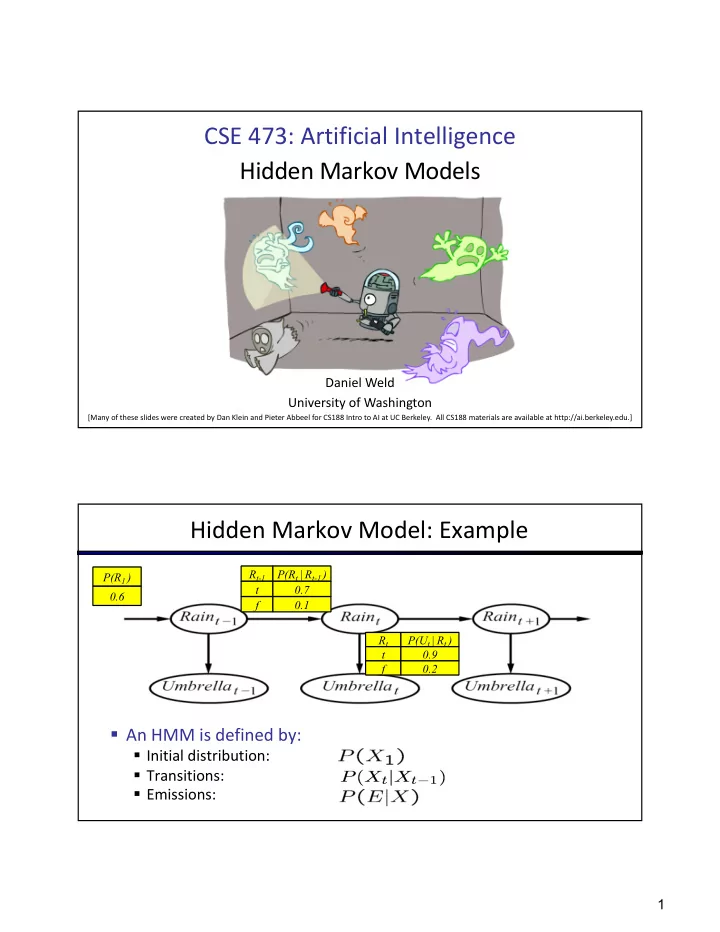

Hidden Markov Model: Example

§ An HMM is defined by:

§ Initial distribution: § Transitions: § Emissions:

P(R1 ) 0.6 Rt-1 t f P(Rt | Rt-1 ) 0.7 0.1 Rt t f P(Ut | Rt ) 0.9 0.2