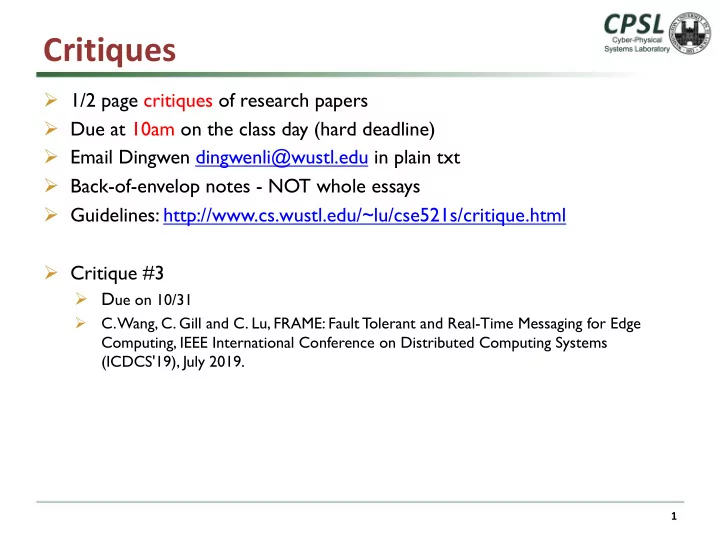

Critiques

- Half-page critiques of research papers
- Due at 10am on the class day (hard deadline)
- Email Dingwen at dingwenli@wustl.edu in plain text
- Back-of-the-envelope notes, NOT whole essays
- Guidelines: http://www.cs.wustl.edu/~lu/cse521s/critique.html
- Critique #3
  - Due on 10/31
  - C. Wang, C. Gill and C. Lu, FRAME: Fault Tolerant and Real-Time Messaging for Edge Computing, IEEE International Conference on Distributed Computing Systems (ICDCS'19), July 2019.
