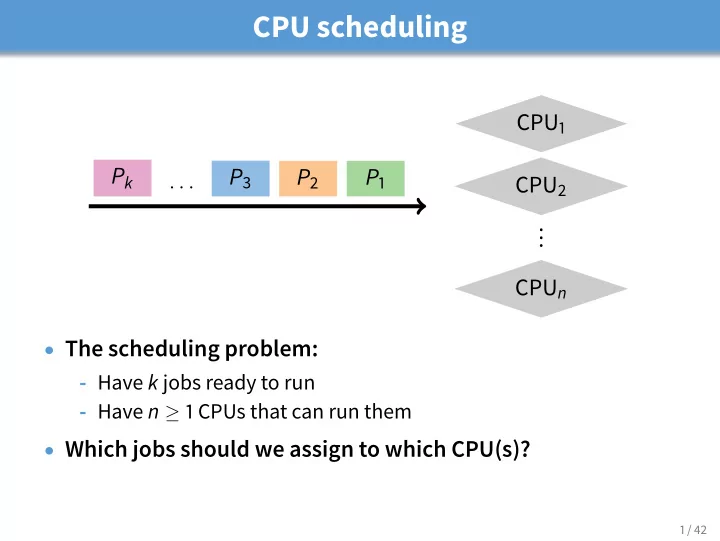

CPU scheduling

Figure: ready jobs P1, P2, P3, …, Pk waiting to be assigned to CPU1, CPU2, …, CPUn.

- The scheduling problem:
  - Have k jobs ready to run
  - Have n ≥ 1 CPUs that can run them

- Which jobs should we assign to which CPU(s)?
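The slide poses the problem without committing to a policy. Purely as an illustration, here is a minimal Python sketch of one simple answer, greedy list scheduling: each job, taken in arrival order, goes to whichever CPU becomes free first. The function name `assign_jobs` and the assumption that each job's CPU burst length is known in advance are hypothetical and do not come from the slides.

```python
import heapq
from typing import Dict, List, Tuple


def assign_jobs(burst_times: List[int], n_cpus: int) -> Dict[str, List[str]]:
    """Greedy list scheduling (illustrative only, hypothetical helper).

    burst_times[i] is the assumed known CPU time needed by job P(i+1).
    Each job, in arrival order, is given to the CPU that frees up first.
    Returns a map from CPU name to the jobs it runs, in order.
    """
    if n_cpus < 1:
        raise ValueError("need n >= 1 CPUs")

    # Min-heap of (time at which this CPU becomes free, CPU index).
    # Ties are broken by the lower CPU index.
    free_at: List[Tuple[int, int]] = [(0, cpu) for cpu in range(n_cpus)]
    heapq.heapify(free_at)

    assignment: Dict[str, List[str]] = {f"CPU{cpu + 1}": [] for cpu in range(n_cpus)}
    for job, burst in enumerate(burst_times):
        t, cpu = heapq.heappop(free_at)            # earliest-available CPU
        assignment[f"CPU{cpu + 1}"].append(f"P{job + 1}")
        heapq.heappush(free_at, (t + burst, cpu))  # busy until t + burst
    return assignment


if __name__ == "__main__":
    print(assign_jobs([5, 3, 8, 2, 4], n_cpus=2))
```

With bursts `[5, 3, 8, 2, 4]` on two CPUs, CPU1 runs P1, P4, P5 and CPU2 runs P2, P3. Greedy placement is easy to compute but ignores priorities, I/O, and cache state, which is why real schedulers weigh more than "which CPU is idle next".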




¹ See library.stanford.edu for off-campus access.


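Figure: two schedules of P1, P2, P3 on three CPUs over four time slots.

Processes rotate among the CPUs:

- CPU1: P2 P3 P1 P2
- CPU2: P3 P1 P2 P3
- CPU3: P1 P2 P3 P1

Each process stays on one CPU:

- CPU1: P1 P1 P1 P1
- CPU2: P2 P2 P2 P2
- CPU3: P3 P3 P3 P3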
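One way to compare the two CPU timelines above is to count how often each process lands on a different CPU than in its previous time slot; a process that moves generally starts on the new CPU with a cold cache. The sketch below encodes both timelines exactly as shown and counts migrations. The names `rotating`, `pinned`, and `count_migrations` are hypothetical, and it assumes each process appears at most once per time slot.

```python
from typing import Dict, List

# CPU name -> process run in each time slot (hypothetical encoding of the figure).
Schedule = Dict[str, List[str]]

# First timeline: processes rotate among the CPUs.
rotating: Schedule = {
    "CPU1": ["P2", "P3", "P1", "P2"],
    "CPU2": ["P3", "P1", "P2", "P3"],
    "CPU3": ["P1", "P2", "P3", "P1"],
}

# Second timeline: each process stays on one CPU.
pinned: Schedule = {
    "CPU1": ["P1"] * 4,
    "CPU2": ["P2"] * 4,
    "CPU3": ["P3"] * 4,
}


def count_migrations(schedule: Schedule) -> Dict[str, int]:
    """Count, per process, how many times it runs on a different CPU than last time."""
    if not schedule:
        return {}
    n_slots = len(next(iter(schedule.values())))
    last_cpu: Dict[str, str] = {}
    migrations: Dict[str, int] = {}
    for slot in range(n_slots):
        for cpu, timeline in schedule.items():
            proc = timeline[slot]
            migrations.setdefault(proc, 0)
            # A migration is a run on a CPU other than the one used previously.
            if proc in last_cpu and last_cpu[proc] != cpu:
                migrations[proc] += 1
            last_cpu[proc] = cpu
    return migrations


if __name__ == "__main__":
    print("rotating:", count_migrations(rotating))
    print("pinned:  ", count_migrations(pinned))
```

In the first timeline every process switches CPU at every slot (three migrations each, nine in total); in the second there are none. Keeping a process on one CPU preserves its cached state, at the cost that per-CPU placement can leave CPUs unevenly loaded when job lengths differ.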
