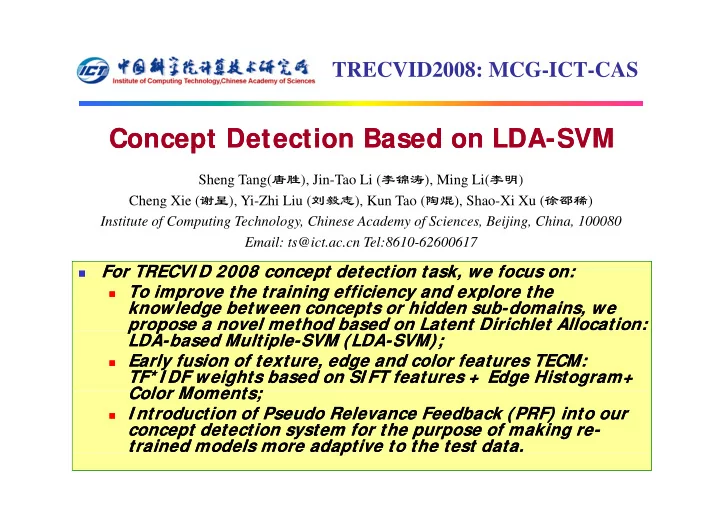

TRECVID2008: MCG-ICT-CAS

Concept Detection Based on LDA-SVM

Sheng Tang (唐胜), Jin-Tao Li (李锦涛), Ming Li (李明), Cheng Xie (谢呈), Yi-Zhi Liu (刘毅志), Kun Tao (陶焜), Shao-Xi Xu (徐邵稀)

Institute of Computing Technology, Chinese Academy of Sciences, Beijing, China, 100080

Email: ts@ict.ac.cn Tel: 8610-62600617

For the TRECVID 2008 concept detection task, we focus on:

- To improve training efficiency and explore the knowledge between concepts or hidden sub-domains, we propose a novel method based on Latent Dirichlet Allocation: LDA-based Multiple-SVM (LDA-SVM);

- Early fusion of texture, edge and color features (TECM): TF*IDF weights based on SIFT features + Edge Histogram + Color Moments;

- Introduction of Pseudo Relevance Feedback (PRF) into our