IRDM WS 2015

Chapter 3: Basics from Probability Theory and Statistics


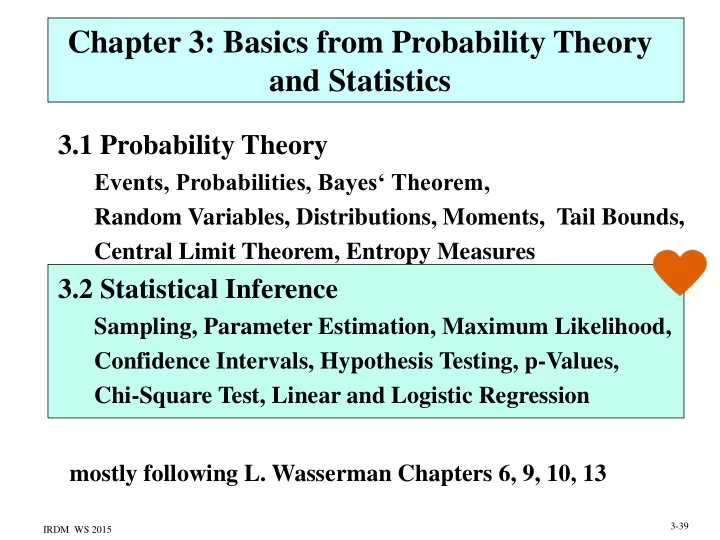

3.1 Probability Theory: Events, Probabilities, Bayes' Theorem, Random Variables, Distributions, Moments, Tail Bounds, Central Limit Theorem, Entropy Measures

3.2 Statistical Inference


Sample of n = 20 values: X1 = X2 = 1; X3 = X4 = X5 = 2; X6 = … = X10 = 3; X11 = … = X14 = 4; X15 = … = X17 = 5; X18 = X19 = 6; X20 = 7
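The 20 sample values listed here can be summarized with a few standard statistics. The following is a minimal sketch using Python's `statistics` module. Which summary statistics the slide actually illustrated is not recoverable, so the choice of mean, median, mode, and variance is an assumption, as are all variable names.

```python
from statistics import mean, median, mode, variance

# Sample from the slide, written as value -> number of occurrences.
counts = {1: 2, 2: 3, 3: 5, 4: 4, 5: 3, 6: 2, 7: 1}
sample = [value for value, k in counts.items() for _ in range(k)]

n = len(sample)                  # number of observations
sample_mean = mean(sample)       # (1/n) * sum of the x_i
sample_median = median(sample)   # average of the two middle order statistics (n is even)
sample_mode = mode(sample)       # most frequent value
sample_var = variance(sample)    # unbiased sample variance, divides by n - 1

print(n, sample_mean, sample_median, sample_mode, round(sample_var, 4))
```

For this sample the mean is 3.65 and the median is 3.5, so the distribution is slightly skewed to the right.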


Sources: en.wikipedia.org de.wikipedia.org


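Maximum-likelihood estimation for the parameters of a normal distribution, given a sample $x_1, \dots, x_n$:

$$L(x_1, \dots, x_n; \mu, \sigma^2) = \left(\frac{1}{\sqrt{2\pi}\,\sigma}\right)^{n} \prod_{i=1}^{n} e^{-\frac{(x_i - \mu)^2}{2\sigma^2}}$$

$$\frac{\partial \ln L}{\partial \mu} = \frac{1}{2\sigma^2} \sum_{i=1}^{n} 2(x_i - \mu) = 0$$

$$\frac{\partial \ln L}{\partial \sigma^2} = -\frac{n}{2\sigma^2} + \frac{1}{2\sigma^4} \sum_{i=1}^{n} (x_i - \mu)^2 = 0$$

$$\hat\mu = \frac{1}{n} \sum_{i=1}^{n} x_i \qquad \hat\sigma^2 = \frac{1}{n} \sum_{i=1}^{n} (x_i - \hat\mu)^2$$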


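For a normal sample with unknown variance, use the sample variance

$$S^2 = \frac{1}{n-1} \sum_{i=1}^{n} (X_i - \bar X)^2$$

Then $T = (\bar X - \mu)\sqrt{n}/S$ has a t distribution with $n-1$ degrees of freedom, and

$$P\left[\bar X - \frac{t_{n-1,\,1-\alpha/2}\, S}{\sqrt{n}} \le \mu \le \bar X + \frac{t_{n-1,\,1-\alpha/2}\, S}{\sqrt{n}}\right] = 1 - \alpha$$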


William Gosset (1876-1937)

The Probable Error of a Mean, Biometrika 6(1), 1908


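Let $X_1, \dots, X_n$ be a sample from $N(\mu, \sigma^2)$ with known variance $\sigma^2$, so that $(\bar X - \mu)\sqrt{n}/\sigma \sim N(0, 1)$. Then

$$P\left[\bar X - \frac{z\sigma}{\sqrt{n}} \le \mu \le \bar X + \frac{z\sigma}{\sqrt{n}}\right] = P\left[-z \le \frac{(\bar X - \mu)\sqrt{n}}{\sigma} \le z\right] = \Phi(z) - \Phi(-z) = \Phi(z) - (1 - \Phi(z)) = 2\Phi(z) - 1$$

$$P\left[\bar X - \frac{z_{1-\alpha/2}\,\sigma}{\sqrt{n}} \le \mu \le \bar X + \frac{z_{1-\alpha/2}\,\sigma}{\sqrt{n}}\right] = 1 - \alpha$$

For a given interval $[\bar X - a, \bar X + a]$: set $z := a\sqrt{n}/\sigma$ and read off the confidence level $1 - \alpha = 2\Phi(z) - 1$.

For a given confidence level $1 - \alpha$: set $z :=$ the $(1 - \alpha/2)$ quantile of $N(0, 1)$, then $a := z\sigma/\sqrt{n}$.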
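Both directions of the known-variance confidence interval for a normal mean (from a confidence level to the half-width $a$, and from a half-width back to a confidence level) can be sketched in a few lines. This is a minimal illustration using `statistics.NormalDist` from the Python standard library. The function names and the sample data are hypothetical and not part of the slides.

```python
from math import sqrt
from statistics import NormalDist, mean

def normal_mean_ci(sample, sigma, alpha=0.05):
    """Two-sided (1 - alpha) confidence interval for the mean, sigma known."""
    n = len(sample)
    z = NormalDist().inv_cdf(1 - alpha / 2)  # (1 - alpha/2) quantile of N(0, 1)
    a = z * sigma / sqrt(n)                  # half-width a = z * sigma / sqrt(n)
    x_bar = mean(sample)
    return x_bar - a, x_bar + a

def confidence_level(a, sigma, n):
    """Confidence level 2 * Phi(z) - 1 of [x_bar - a, x_bar + a], z = a * sqrt(n) / sigma."""
    z = a * sqrt(n) / sigma
    return 2 * NormalDist().cdf(z) - 1

# Hypothetical measurements, for illustration only; sigma = 0.2 is assumed known.
sample = [4.9, 5.1, 5.3, 4.7, 5.0, 5.2, 4.8, 5.0]
low, high = normal_mean_ci(sample, sigma=0.2, alpha=0.05)
print(low, high, confidence_level((high - low) / 2, 0.2, len(sample)))
```

Feeding the computed half-width back into `confidence_level` recovers the requested level of 0.95, which is a quick consistency check on the two formulas.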


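Wald test statistic:

$$W = \frac{\hat\theta - \theta_0}{\mathrm{se}(\hat\theta)}$$

Example for a proportion, with statistic $(\hat p - p_0)/\mathrm{se}(\hat p)$; values shown on the slide: 3, 320, 0.25, 1/100, 2.5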


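Chi-square distribution: for independent $N(0, 1)$-distributed random variables $X_1, \dots, X_n$, the sum $\chi^2_n := X_1^2 + \dots + X_n^2$ is chi-square distributed with $n$ degrees of freedom, with density

$$f(x) = \frac{x^{n/2 - 1}\, e^{-x/2}}{2^{n/2}\, \Gamma(n/2)} \quad \text{for } x > 0$$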


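Chi-square goodness-of-fit test: for $k$ value classes with observed absolute frequencies $H_i$ and expected frequencies $E_i$ under $H_0$, the statistic

$$Z_k = \sum_{i=1}^{k} \frac{(H_i - E_i)^2}{E_i}$$

is approximately $\chi^2$-distributed with $k - 1$ degrees of freedom. Reject $H_0$ at significance level $\alpha$ if $Z_k > \chi^2_{k-1,\,1-\alpha}$.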


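Chi-square independence test: for an $r \times c$ contingency table with observed absolute frequencies $H_{ij}$ and $n = \sum_{i}\sum_{j} H_{ij}$, the expected frequencies under independence are

$$E_{ij} = \frac{R_i\, C_j}{n} \quad \text{with} \quad R_i = \sum_{j=1}^{c} H_{ij}, \quad C_j = \sum_{i=1}^{r} H_{ij}$$

$$\chi^2 = \sum_{i=1}^{r} \sum_{j=1}^{c} \frac{(H_{ij} - E_{ij})^2}{E_{ij}}$$

Reject $H_0$ at significance level $\alpha$ if $\chi^2 > \chi^2_{(r-1)(c-1),\,1-\alpha}$.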


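$$\chi^2 = \sum_{i=1}^{r} \sum_{j=1}^{c} \frac{(H_{ij} - E_{ij})^2}{E_{ij}} = \frac{10^2}{70} + \frac{(-10)^2}{30} + \frac{(-2)^2}{42} + \frac{2^2}{18} + \frac{(-8)^2}{28} + \frac{8^2}{12} \approx 12.7$$

Since $12.7 > \chi^2_{2,\,0.95} = 5.99$: reject $H_0$.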
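The arithmetic of this chi-square independence example can be checked with a short script. This is a sketch under one assumption: the observed table is not preserved in the text, so the counts below were recovered by adding each deviation $H_{ij} - E_{ij}$ (10, −10, −2, 2, −8, 8) to its expected value (70, 30, 42, 18, 28, 12). The function name is hypothetical, and the critical value 5.99 is taken from the slide.

```python
def chi_square_independence(observed):
    """Return (statistic, degrees_of_freedom, expected) for an r x c table."""
    row_totals = [sum(row) for row in observed]          # R_i
    col_totals = [sum(col) for col in zip(*observed)]    # C_j
    n = sum(row_totals)
    # Expected frequencies under independence: E_ij = R_i * C_j / n
    expected = [[r * c / n for c in col_totals] for r in row_totals]
    statistic = sum(
        (h - e) ** 2 / e
        for obs_row, exp_row in zip(observed, expected)
        for h, e in zip(obs_row, exp_row)
    )
    dof = (len(row_totals) - 1) * (len(col_totals) - 1)
    return statistic, dof, expected

# Assumed observed counts: expected value plus deviation for each cell.
observed = [[80, 20], [40, 20], [20, 20]]
statistic, dof, expected = chi_square_independence(observed)

CRITICAL_VALUE = 5.99  # chi-square quantile for 2 degrees of freedom at 0.95, from the slide
reject_h0 = statistic > CRITICAL_VALUE
print(round(statistic, 2), dof, reject_h0)
```

The recomputed expected frequencies match the denominators in the sum, and the degrees of freedom come out as (3 − 1)(2 − 1) = 2, consistent with the quantile used.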


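Linear regression model:

$$y = \beta_0 + \sum_{i=1}^{m} \beta_i x_i$$

Given $n$ sample points $(x_1^{(i)}, \dots, x_m^{(i)}, y^{(i)})$, $i = 1..n$, the least-squares estimator minimizes

$$\sum_{i=1..n} \left( \sum_{k=0..m} \beta_k\, x_k^{(i)} - y^{(i)} \right)^2 \quad (\text{with } x_0^{(i)} = 1)$$

Setting the partial derivative with respect to each $\beta_k$ to zero yields

$$\beta = (X^T X)^{-1} X^T y \quad \text{with} \quad X = \begin{pmatrix} 1 & x_1^{(1)} & x_2^{(1)} & \dots & x_m^{(1)} \\ 1 & x_1^{(2)} & x_2^{(2)} & \dots & x_m^{(2)} \\ \vdots & & & & \vdots \\ 1 & x_1^{(n)} & x_2^{(n)} & \dots & x_m^{(n)} \end{pmatrix}$$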
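The least-squares solution $\beta = (X^T X)^{-1} X^T y$ can be sketched without external libraries. The version below solves the normal equations $(X^T X)\beta = X^T y$ by Gaussian elimination rather than forming the inverse explicitly, which is a design choice of this sketch and not something the slides prescribe. All function names and the noise-free sample data are hypothetical.

```python
def solve(a, b):
    """Solve the square system a x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    aug = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix [a | b]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("X^T X is singular: no unique least-squares solution")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):                      # back substitution
        tail = sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (aug[i][n] - tail) / aug[i][i]
    return x

def least_squares(points, targets):
    """Return [beta_0, ..., beta_m] from the normal equations (X^T X) beta = X^T y."""
    X = [[1.0] + list(p) for p in points]             # leading 1 plays the role of x_0 = 1
    Xt = [list(col) for col in zip(*X)]               # transpose of X
    XtX = [[sum(u * v for u, v in zip(r1, r2)) for r2 in Xt] for r1 in Xt]
    Xty = [sum(u * y for u, y in zip(row, targets)) for row in Xt]
    return solve(XtX, Xty)

# Hypothetical data generated exactly from y = 1 + 2*x1 - 3*x2.
points = [(0, 0), (1, 0), (0, 1), (1, 1), (2, 1)]
targets = [1 + 2 * x1 - 3 * x2 for x1, x2 in points]
beta = least_squares(points, targets)
print([round(b, 6) for b in beta])
```

Because the targets contain no noise, the fitted coefficients should reproduce the generating values 1, 2, and −3 up to rounding error. For larger or ill-conditioned problems a QR-based solver is usually preferred over the normal equations.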


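Logistic regression model:

$$P[Y = 1 \mid x] = \frac{e^{\beta_0 + \sum_{i=1}^{m} \beta_i x_i}}{1 + e^{\beta_0 + \sum_{i=1}^{m} \beta_i x_i}}$$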
