Stefanos Leonardos, Iosif Sakos, Costas Courcoubetis, and Georgios Piliouras

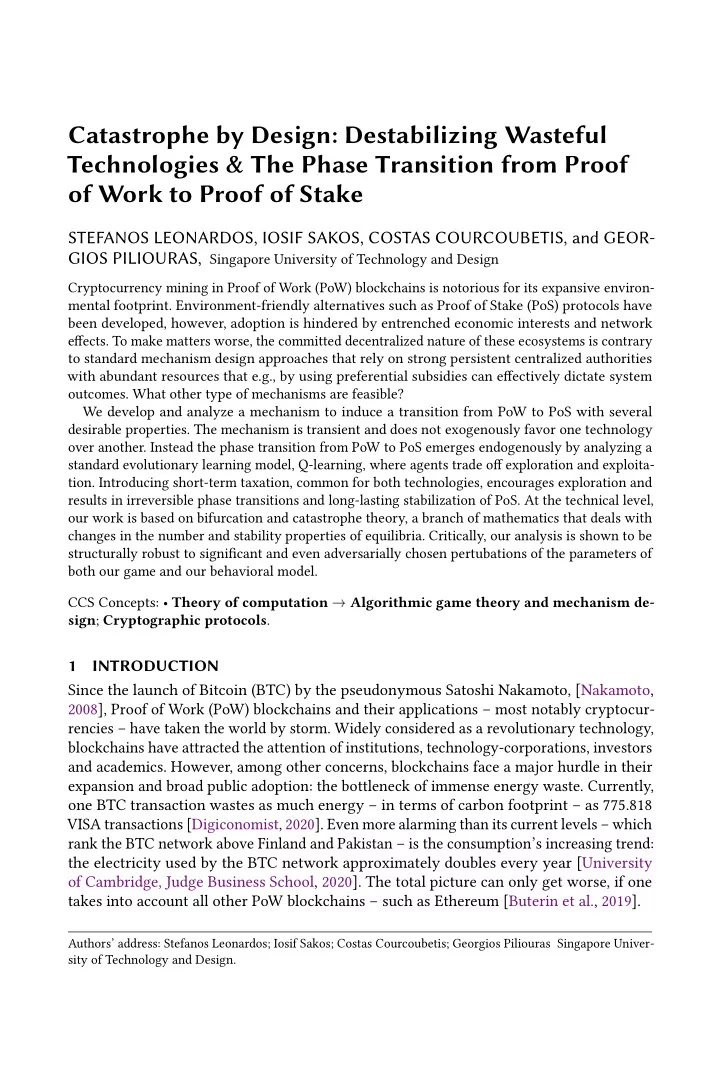

[Figure 13 panels. Left: $\beta = 2$. Right: $\beta = 1/2$ and $\beta = 1$. Horizontal axis: fraction $y$ of investment in technology $X$. Vertical axis: aggregate payoff $v_B(y)$.]

Fig. 13. Aggregate payoff $v_B(y)$ created by the population as a whole at population state $y \in [0, 1]$ (investment in technology $X$) for various values of the parameter $\beta$. The aggregate payoff (vertical axis) is calculated by the formula

$$v_B(y) = W L^{\beta} \left[ y^{\beta} - \frac{\delta}{W L^{\beta-1}}\, y + (1 - y)^{\beta} \right],$$

with $y \in [0, 1]$ and selected values of the parameters $W = 10$, $L = 4$ and $\delta = 1$. For $\beta = 2$ (left panel) and in general for $\beta > 1$, the total aggregate value is (locally) maximized at the boundaries, i.e., when either technology is fully adopted (superadditive value). The global maximum is attained when the less costly technology $T$ is fully adopted, i.e., when $y = 0$. By contrast, for $\beta = 1/2$ (right panel, blue line), and in general for $\beta < 1$, the aggregate wealth is maximized when the population is split between the two technologies (subadditive value). For $\beta = 1$ (right panel, red line), the aggregate wealth is increasing in the adoption of the less costly technology $T$ (linear value).
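The caption's claims can be checked numerically. The following is a minimal sketch, assuming the formula reads $v_B(y) = W L^{\beta} [\, y^{\beta} - \frac{\delta}{W L^{\beta-1}} y + (1-y)^{\beta} ]$ with the bracket placement inferred from the axis ranges of the two panels. The function names `aggregate_payoff` and `argmax_on_grid` and the grid resolution are illustrative choices, not part of the paper.

```python
# Sketch: evaluate the aggregate payoff on a grid and locate its maximizer.
# Assumption: v_B(y) = W * L**beta * (y**beta - delta/(W*L**(beta-1)) * y + (1-y)**beta),
# with the caption's parameter values W = 10, L = 4, delta = 1 as defaults.

def aggregate_payoff(y, beta, W=10.0, L=4.0, delta=1.0):
    """Aggregate payoff at population state y in [0, 1]."""
    cost_weight = delta / (W * L ** (beta - 1))
    return W * L ** beta * (y ** beta - cost_weight * y + (1 - y) ** beta)

# Hypothetical helper: brute-force grid search, fine for a one-dimensional check.
GRID = [i / 1000 for i in range(1001)]

def argmax_on_grid(beta):
    """Return the grid point in [0, 1] that maximizes the aggregate payoff."""
    return max(GRID, key=lambda y: aggregate_payoff(y, beta))

if __name__ == "__main__":
    for beta in (2, 1, 0.5):
        y_star = argmax_on_grid(beta)
        print(f"beta={beta}: maximizer y={y_star}, payoff={aggregate_payoff(y_star, beta):.3f}")
```

Under these assumptions the grid search places the maximizer at $y = 0$ for $\beta = 2$ and $\beta = 1$, and strictly inside $(0, 1/2)$ for $\beta = 1/2$, in line with the superadditive, linear and subadditive cases described in the caption.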

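$y = \frac{\beta-1}{\beta}$ with value $\left(\frac{\beta-1}{\beta}\right)^{\beta-1} \frac{1}{\beta}$. Accordingly, it suffices to show that

$$\left(\frac{\beta-1}{\beta}\right)^{\beta-1} \frac{1}{\beta} \le \frac{1}{2\beta} \iff \left(\frac{\beta-1}{\beta}\right)^{\beta-1} \le \frac{1}{2}$$

for any $\beta \ge 2$. However, the term on the left side is decreasing in $\beta$, since by taking the logarithm and applying the inequality $\ln(y) \le y - 1$, we obtain that

$$\frac{\partial}{\partial \beta} \ln \left(\frac{\beta-1}{\beta}\right)^{\beta-1} = \ln \frac{\beta-1}{\beta} + \frac{1}{\beta} \le \frac{\beta-1}{\beta} - 1 + \frac{1}{\beta} = 0.$$

Hence, the maximum of the left side is attained for $\beta = 2$, yielding a value of $\left(\frac{2-1}{2}\right)^{2-1} = \frac{1}{2}$,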

which concludes the proof. □

Proof of Theorem 5.2. The case $\beta = 1$ is trivial and $\beta = 2$ has been treated in the main part of the paper. For $\beta \ge 3$, $\delta \in (0, 1)$ and $U \ge \frac{1}{2}$, the function

$$g_\beta(y) := y^{\beta-1} - (1 - y)^{\beta-1} - \delta - U \ln \frac{y}{1-y}$$

is continuous and satisfies

$$\lim_{y \to 0^+} g_\beta(y) = \lim_{y \to 0^+} \left[ y^{\beta-1} - (1 - y)^{\beta-1} - \delta - U \ln \frac{y}{1-y} \right] = +\infty \quad \text{and} \quad g_\beta\left(\tfrac{1}{2}\right) = -\delta < 0.$$

Hence, since $g_\beta$ is continuous in $(0, 1)$, by Bolzano's theorem there exists $y^* \in (0, \frac{1}{2})$ such that $g_\beta(y^*) = 0$. To prove uniqueness, it is sufficient to prove that for $U \ge 1/2$, $g_\beta(y)$ is decreasing in $y$. This implies that for $U \ge 1/2$, there is a unique steady state $y^*$ and