Caching / Performance

1

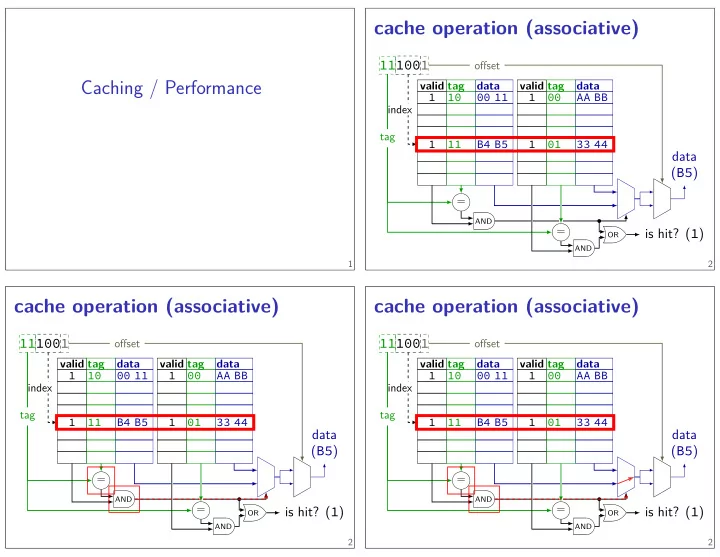

cache operation (associative)

valid tag data valid tag data 1 10 00 11 1 00 AA BB 1 11 B4 B5 1 01 33 44

100 11 1

index = = tag

AND AND OR

is hit? (1)

- fgset

data (B5)

2

cache operation (associative)

valid tag data valid tag data 1 10 00 11 1 00 AA BB 1 11 B4 B5 1 01 33 44

100 11 1

index = = tag

AND AND OR

is hit? (1)

- fgset

data (B5)

2

cache operation (associative)

valid tag data valid tag data 1 10 00 11 1 00 AA BB 1 11 B4 B5 1 01 33 44

100 11 1

index = = tag

AND AND OR

is hit? (1)

- fgset

data (B5)

2