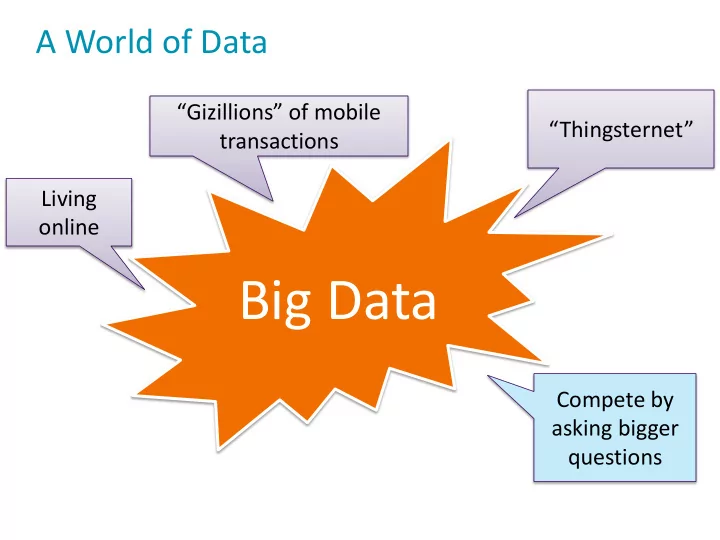

A World of Data

“Thingsternet”

“Gizillions” of mobile transactions

Living online

Big Data

Compete by asking bigger questions


Yaaaay – Hadoop to Save the Daaaay!!

Introducing “DataCo”

“We don’t really have a big data problem…”

Customers → Web client → Web shop backend → Web shop database (~100 GB of product and customer transaction data)

Introducing “DataCo” (cont.)

The same web shop, now with mobile app data, web app click stream data, and IT/Ops and InfoSec data alongside the product and customer transaction data.

More than 6 months?

Active Archive / Self-Serve Ad-hoc BI

Components: HDFS, Hive, Impala

Using Sqoop to Ingest Data from MySQL

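```shell
# Import all retail_db tables as Avro files under /user/hive/warehouse with 12 mappers; -P prompts for the password
$ sqoop import-all-tables -m 12 \
    --connect jdbc:mysql://my.sql.host:3306/retail_db \
    --username=dataco_dba -P \
    --as-avrodatafile --warehouse-dir=/user/hive/warehouse

# Import a single table (<table_name> is a placeholder)
$ sqoop import -m 12 \
    --connect jdbc:mysql://my.sql.host:3306/retail_db \
    --username=dataco_dba -P --table <table_name>
```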
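With `-m 12`, Sqoop runs the import as 12 parallel map tasks. For each table it picks a split column (by default the primary key), looks up that column's minimum and maximum values, and hands each mapper a slice of the range. The Python sketch below approximates that range splitting for an integer key; it is a simplified illustration rather than Sqoop's own splitter, and the function name `split_key_range`, the key range, and the column name `id` are all hypothetical.

```python
def split_key_range(min_key: int, max_key: int, num_mappers: int) -> list:
    """Split the inclusive range [min_key, max_key] into contiguous,
    non-overlapping (lo, hi) slices, one per mapper."""
    if num_mappers < 1:
        raise ValueError("num_mappers must be at least 1")
    if max_key < min_key:
        return []  # empty table: nothing to import
    total = max_key - min_key + 1
    slices = min(num_mappers, total)  # avoid empty slices for tiny tables
    base, extra = divmod(total, slices)
    splits = []
    lo = min_key
    for i in range(slices):
        size = base + (1 if i < extra else 0)  # spread the remainder over the first slices
        splits.append((lo, lo + size - 1))
        lo += size
    return splits


# Hypothetical key range 1..1345 split across 12 mappers, as with "-m 12".
# "id" stands in for the table's (hypothetical) primary-key column.
for lo, hi in split_key_range(1, 1345, 12):
    print(f"SELECT ... WHERE id >= {lo} AND id <= {hi}")
```

Because the slices come from the key's min and max, a skewed key distribution gives some mappers far more rows than others. A table without a primary key needs an explicit `--split-by` column or a single mapper (`-m 1`).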

Create Tables in Hive

Define table structures over the imported Avro data:

```sql
CREATE EXTERNAL TABLE products
ROW FORMAT SERDE 'org.apache.hadoop.hive.serde2.avro.AvroSerDe'
STORED AS INPUTFORMAT 'org.apache.hadoop.hive.ql.io.avro.AvroContainerInputFormat'
OUTPUTFORMAT 'org.apache.hadoop.hive.ql.io.avro.AvroContainerOutputFormat'
LOCATION 'hdfs:///user/hive/warehouse/products'
TBLPROPERTIES ('avro.schema.url'='hdfs://namenode_dataco/user/examples/products.avsc');
```

Use Impala via Hue to Query

Correlate Multi-type Data Sets

Components: HDFS, Hive, Impala, Flume

Ingest Data Using Flume

A Flume agent takes continuously generated events (e.g. syslog, tweets) in through a Flume source, applies optional logic, and delivers them through a Flume sink to HDFS, HBase, Solr, another Flume agent, or another destination.
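The agent described above can be sketched as a tiny pipeline. This is a single-threaded Python toy, not Flume's API: it also models the channel that Flume agents place between source and sink (the solrSink configuration in this deck reads from `memoryChannel`), and the names `run_agent` and `interceptor` are hypothetical. In real Flume, sources and sinks run independently and move events through the channel in transactions; here the sink simply drains the channel whenever it fills up and once more at the end of the input.

```python
from queue import Queue
from typing import Callable, Iterable, List, Optional


def run_agent(events: Iterable[str],
              interceptor: Callable[[str], Optional[str]],
              channel_capacity: int = 100) -> List[str]:
    """Toy Flume-style agent: source -> optional logic -> channel -> sink."""
    channel: Queue = Queue(maxsize=channel_capacity)  # buffers events between source and sink
    destination: List[str] = []  # stand-in for HDFS, HBase, Solr, ...

    def sink_drain() -> None:
        # Sink: take everything currently buffered and deliver it downstream.
        while not channel.empty():
            destination.append(channel.get())

    for event in events:  # Source: receive each incoming event
        processed = interceptor(event)  # Optional logic: transform, or return None to drop
        if processed is None:
            continue
        if channel.full():  # in this toy the sink only runs when the channel is full
            sink_drain()
        channel.put(processed)
    sink_drain()  # deliver whatever is left at the end of the stream
    return destination


raw = ["  syslog: disk ok ", "", "tweet: hello"]
print(run_agent(raw, lambda e: e.strip() or None, channel_capacity=2))
# ['syslog: disk ok', 'tweet: hello']
```

The bounded channel is what lets the source keep accepting events while the sink is slower; in this sketch that decoupling is reduced to "buffer, then drain".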

Create Hive Tables over Log Data

```sql
-- RegexSerDe lives in hive-contrib, so add the jar before creating a table that uses it
ADD JAR /opt/cloudera/parcels/CDH/lib/hive/lib/hive-contrib.jar;

-- Parse the raw access logs with a regular expression
CREATE EXTERNAL TABLE intermediate_access_logs (
    ip STRING, `date` STRING, method STRING, url STRING, http_version STRING,
    code1 STRING, code2 STRING, dash STRING, user_agent STRING)
ROW FORMAT SERDE 'org.apache.hadoop.hive.contrib.serde2.RegexSerDe'
WITH SERDEPROPERTIES (
    "input.regex" = "([^ ]*) - - \\[([^\\]]*)\\] \"([^\ ]*) ([^\ ]*) ([^\ ]*)\" (\\d*) (\\d*) \"([^\"]*)\" \"([^\"]*)\"",
    "output.format.string" = "%1$s %2$s %3$s %4$s %5$s %6$s %7$s %8$s %9$s"
)
LOCATION '/user/hive/warehouse/original_access_logs';

-- Comma-delimited copy that Impala can read (Impala does not support custom SerDes)
CREATE EXTERNAL TABLE tokenized_access_logs (
    ip STRING, `date` STRING, method STRING, url STRING, http_version STRING,
    code1 STRING, code2 STRING, dash STRING, user_agent STRING)
ROW FORMAT DELIMITED FIELDS TERMINATED BY ','
LOCATION '/user/hive/warehouse/tokenized_access_logs';

INSERT OVERWRITE TABLE tokenized_access_logs SELECT * FROM intermediate_access_logs;

exit;
```
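To see what the `input.regex` pulls out of each line, and what the `INSERT OVERWRITE` writes into `tokenized_access_logs`, the same pattern can be tried in Python. This is a sketch for checking the regex, not part of the Hive job: the helper names and the sample log line are hypothetical, and the pattern is the Hive one with Hive's string escaping removed.

```python
import re

# The Hive "input.regex" with Hive's string-literal escaping removed (raw Python string).
ACCESS_LOG_RE = re.compile(
    r'([^ ]*) - - \[([^\]]*)\] "([^ ]*) ([^ ]*) ([^ ]*)" (\d*) (\d*) "([^"]*)" "([^"]*)"'
)
# Column names, in order, from intermediate_access_logs / tokenized_access_logs.
COLUMNS = ["ip", "date", "method", "url", "http_version",
           "code1", "code2", "dash", "user_agent"]


def parse_line(line):
    """Return a dict of the nine columns, or None if the whole line does not match."""
    m = ACCESS_LOG_RE.fullmatch(line)
    return dict(zip(COLUMNS, m.groups())) if m else None


def to_tokenized_row(record):
    """Join the columns with ',' as rows of tokenized_access_logs are stored."""
    return ",".join(record[c] for c in COLUMNS)


# Hypothetical sample line in Apache combined log format.
SAMPLE = ('10.0.0.1 - - [14/Jun/2014:10:30:13 -0400] '
          '"GET /department/apparel HTTP/1.1" 200 1175 "-" "Mozilla/5.0 (X11; Linux x86_64)"')
record = parse_line(SAMPLE)
print(record["method"], record["url"], record["code1"])  # GET /department/apparel 200
print(to_tokenized_row(record))
```

In this format `code1` and `code2` hold the HTTP status and response size, and `dash` receives the quoted referrer field. Because the tokenized table splits on commas, a comma inside any field (such as the `(KHTML, like Gecko)` found in many user agents) shifts or truncates columns when the data is read back; a delimiter that cannot occur in the fields avoids that.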

Use Impala and Hue to Query

(Figure: query results annotated “Missing!!!”)

Multi-Use-Case Data Hub

Components: HDFS, Flume, Solr (search queries)

Create Your Index

Generate an instance directory holding the configuration for the data to search over:

```shell
$ solrctl --zk <ALL YOUR ZK IPs>/solr instancedir --generate live_logs_dir
```

Then declare the fields to index in live_logs_dir/conf/schema.xml:

```xml
…
<field name="_version_" type="long" indexed="true" stored="true" multiValued="false" />
<field name="id" type="string" indexed="true" stored="true" required="true" multiValued="false" />
<field name="ip" type="text_general" indexed="true" stored="true"/>
<field name="request_date" type="date" indexed="true" stored="true"/>
…
```
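Whatever builds the documents (in this pipeline, the morphline), each one must carry the required `id` and fit the declared field types. Below is a minimal Python sketch of that mapping for the `id`, `ip` and `request_date` fields declared above; the function name `to_solr_doc` and the choice to derive `id` from a SHA-1 of the raw line are hypothetical, and the id trick assumes `id` is the schema's uniqueKey.

```python
import hashlib
import json
from datetime import datetime, timezone


def to_solr_doc(raw_line: str, ip: str, apache_date: str) -> dict:
    """Map one parsed access-log line onto the id / ip / request_date fields."""
    # Apache timestamps look like "14/Jun/2014:10:30:13 -0400".
    ts = datetime.strptime(apache_date, "%d/%b/%Y:%H:%M:%S %z")
    return {
        # Hypothetical choice: derive the required unique id from the raw line,
        # so re-ingesting the same line overwrites instead of duplicating it.
        "id": hashlib.sha1(raw_line.encode("utf-8")).hexdigest(),
        "ip": ip,
        # Solr date fields expect UTC in ISO 8601 form with a trailing "Z".
        "request_date": ts.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        # _version_ is omitted on purpose: Solr assigns it itself.
    }


line = '10.0.0.1 - - [14/Jun/2014:10:30:13 -0400] "GET / HTTP/1.1" 200 1175 "-" "-"'
doc = to_solr_doc(line, "10.0.0.1", "14/Jun/2014:10:30:13 -0400")
print(json.dumps([doc], indent=2))  # request_date is "2014-06-14T14:30:13Z"
```

The trade-off of a content-derived id is that two genuinely identical log lines collapse into a single document; a random UUID per event keeps them apart but loses idempotent re-ingest.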

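Create Your Index (cont.)

Upload the instance directory to ZooKeeper, then create the collection for indexing data:

```shell
# Upload the configuration to ZooKeeper first...
$ solrctl --zk <ALL YOUR ZK IPs>/solr instancedir --create live_logs ./live_logs_dir
# ...then create the live_logs collection with 4 shards (-s 4)
$ solrctl --zk <ALL YOUR ZK IPs>/solr collection --create live_logs -s 4
```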

Flume and Morphline Pipeline

Flume with Morphlines Configured

The MorphlineSolrSink runs each event through a morphline and sends the parsed data to Solr.

```properties
…
# Describe solrSink
agent1.sinks.solrSink.type = org.apache.flume.sink.solr.morphline.MorphlineSolrSink
agent1.sinks.solrSink.channel = memoryChannel
agent1.sinks.solrSink.batchSize = 1000
agent1.sinks.solrSink.batchDurationMillis = 1000
agent1.sinks.solrSink.morphlineFile = /opt/examples/flume/conf/morphline.conf
agent1.sinks.solrSink.morphlineId = morphline
agent1.sinks.solrSink.threadCount = 1
…
```
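The `batchSize` and `batchDurationMillis` settings bound each sink transaction: roughly, a batch is handed to Solr once it holds 1000 events or 1000 ms have passed, whichever comes first. The Python sketch below illustrates that rule; it is not Flume code, `batch_events` is a hypothetical name, and it only checks the clock when an event arrives, whereas a real sink can also end a batch early when the channel has no more events.

```python
import time
from typing import Any, Callable, Iterable, Iterator, List


def batch_events(events: Iterable[Any],
                 batch_size: int = 1000,
                 batch_duration_ms: int = 1000,
                 clock: Callable[[], float] = time.monotonic) -> Iterator[List[Any]]:
    """Group events into batches that close at batch_size events or once
    batch_duration_ms has elapsed since the batch started (checked per event)."""
    batch: List[Any] = []
    started = 0.0
    for event in events:
        if not batch:
            started = clock()  # a new batch (transaction) begins
        batch.append(event)
        elapsed_ms = (clock() - started) * 1000.0
        if len(batch) >= batch_size or elapsed_ms >= batch_duration_ms:
            yield batch  # a real sink would commit this batch to Solr here
            batch = []
    if batch:
        yield batch  # flush the final, partial batch


# With a frozen clock only the size limit triggers: 2,500 events -> 1000 + 1000 + 500.
sizes = [len(b) for b in batch_events(range(2500), clock=lambda: 0.0)]
print(sizes)  # [1000, 1000, 500]
```

Injecting `clock` keeps the time-based rule testable without sleeping. Larger batches mean fewer, cheaper commits to Solr; the duration cap keeps latency bounded when traffic is light.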

Dynamic Search UI in Hue

Shared Storage!!

How Do We Improve Healthcare?

Benefits

faster insight

asthma-related ICU visits

fees < 3 processor licenses for EDW

Solution

data per week

Impala, HDFS

Challenges

monitoring data capacity

correlate large research data sets

ad-hoc study environment impact

How Do We Feed The World?

Global Warming Changes Conditions

How do we improve quality and resistance of crops and seeds in a variety of global and rapidly changing environments?

How Do We Feed The World?

Benefits

processes

reduced from years to months!!!

Solution

Solr, MapReduce, Sqoop, Impala, …

Challenges

for each new product: 5-10 years

scientists working in silos

bottlenecks slow development

Challenges

events/month

type event correlation complex

ad-hoc game analytics

Benefits

trends

reduction

the 1st week

Solution

servers

Impala, HDFS

Learn More?

http://cloudera.com/content/cloudera/en/training.html

Hope You Enjoyed This Talk!

Don’t forget to VOTE!!!

Bonus Track…

My Advice for the Road…

Try Something Simple First…

Decide what to Cook!

Collect All Ingredients

Use the Right Tool for the Right Task

Prepare All Ingredients

Don’t Forget the Importance of Visualization!

Benefits

genome sequencing

data for biologists to explore

Solution

storage of multi-structured experimental data

exploration via Impala, R, HBase, Solr, Hive

Challenges

information locked away in medical records & scientific studies

& systems can’t “talk” to each other

Using Sqoop to Ingest Data from MySQL

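Verify the import by listing the warehouse directory and a table directory (mytablename is a placeholder):

```shell
$ hadoop fs -ls /user/hive/warehouse/
$ hadoop fs -ls /user/hive/warehouse/mytablename/
```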

Hadoop - A New Approach to Data Management

Schema on Read, Distributed Storage, Distributed Processing, Active Archive, Cost-Efficient Offload, Flexible Analytics

Hadoop: Storage & Batch Processing

The Birth of the Data Lake

(Timeline figure: 2006 to 2014)

A Rapidly Growing Ecosystem

The Rise of an Enterprise Data Hub

Applications run on a stack that has grown around HDFS year by year:

| Year | Added to the stack |
|------|--------------------|
| 2005-2007 | Hadoop (HDFS, MapReduce) |
| 2008 | HBase, ZooKeeper, Mahout |
| 2009 | Hive, Pig |
| 2010 | Flume, Sqoop, Avro |
| 2011 | Oozie, Hue |
| 2012 | YARN, Impala, Parquet |
| 2013 | Solr, Sentry |
| 2014 | Spark, Kafka |

The Hadoop Ecosystem – Explained!

Distributed File System (Scalable Storage)

Event-based Data Ingest, DB, Batch Processing, Key-Value Store, SQL, Interactive SQL, Proc.-Oriented Query, Machine Learning, Process Mgmt, Workflow Mgmt, GUI, Resource Management and Scheduling, Free-Text Search, Real-Time Processing, Access Control

Common Use Cases

identification

The Right Tool For the Right Task

| Tool | Workload | Use Case | Result Ordering |
|------|----------|----------|-----------------|
| Hive | Batch | SQL, Analytics & Joins | Structured |
| Pig | Batch | … Joins | Structured |
| Impala | Interactive | SQL, Analytics & Joins | Structured |
| Solr | Interactive | Fuzzy, Phonetic, Polygon, Geospatial | Relevance-based |
| HBase | Real Time | Random key-lookups over sparsely populated columnar data | Scan-order |
| Spark | NRT (near real time) | Advanced analytics & ML | Sorted |

When to use what?

not wait hours for the response

need to contain 15+ “like” conditions

When to use what?

my data sets fit into memory

time

process it with my custom logic – no real time needs