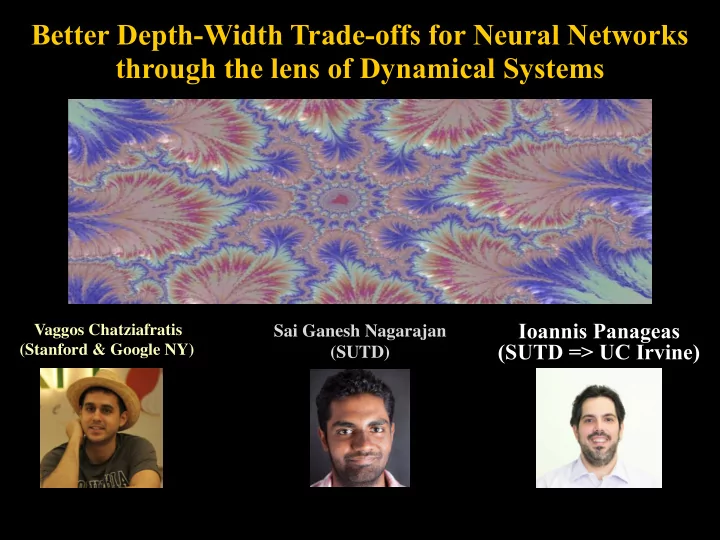

SLIDE 1

Better Depth-Width Trade-offs for Neural Networks through the lens of Dynamical Systems

Vaggos Chatziafratis (Stanford & Google NY)

Sai Ganesh Nagarajan (SUTD)

Ioannis Panageas (SUTD => UC Irvine)

SLIDE 2

Deep Neural Networks

Are Deeper NNs more powerful?

SLIDE 3

Approximation Theory (1885-today)

ReLU activation units

Semi-algebraic units [Telgarsky’15,’16]: piecewise polynomials, max/min gates, and (boosted) decision trees

SLIDE 4

Expressivity of NNs

Which functions can NNs approximate?

Cybenko [1989]:

Any continuous function (on a compact domain) can be approximated arbitrarily well by a sigmoid net with one hidden layer (of “some” width).

SLIDE 5

in practice: bounded resources!

SLIDE 6

Depth Separation Results

Is there a function expressible by a deep NN that cannot be approximated with a much wider shallow NN?

Yes! Challenging!

SLIDE 7

L=100 400 vs 10000 ReLUs

Tent or Triangle map

SLIDE 8

Prior Work [Telgarsky’15,’16]

Tantalizing open questions:

- 1. Can we understand larger families of functions?

- 2. Why is the tent map suitable to prove depth separations?

(what if we slightly tweak the tent map?)

SLIDE 11

Prior Work [Telgarsky’15,’16]

[Figure: plot of f(x) against x; both axes are ticked from 0.5 to 5.]

SLIDE 13

Connections to Dynamical Systems [ICLR’20]:

Our work in ICML 2020

- 1. We get L1-approximation error and not just classification error.

- 2. We show tight connections between Lipschitz constant, periods of f, and oscillations.

- 3. Sharper period-dependent depth-width tradeoffs and easy constructions of examples.

- 4. Experimental validation of our theoretical results.

SLIDE 14

Tent Map (by Telgarsky)
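
The slide's formula did not survive extraction. As a minimal sketch, assuming the standard tent (triangle) map on [0, 1], that is t(x) = 2x for x ≤ 1/2 and t(x) = 2(1 - x) otherwise, the map can be written with two ReLU units. The function names are illustrative, not taken from the talk.

```python
import numpy as np

def relu(z):
    """Elementwise ReLU."""
    return np.maximum(z, 0.0)

def tent(x):
    """Tent map as a two-ReLU expression; valid for x in [0, 1] (assumed domain)."""
    return 2.0 * relu(x) - 4.0 * relu(x - 0.5)

def tent_piecewise(x):
    """Reference piecewise definition of the same map."""
    return np.where(x <= 0.5, 2.0 * x, 2.0 * (1.0 - x))

xs = np.linspace(0.0, 1.0, 1001)
# The ReLU form and the piecewise form agree on the whole interval.
agree = bool(np.allclose(tent(xs), tent_piecewise(xs)))
```

The second ReLU switches on at x = 1/2 and turns the slope from +2 into -2, which is what produces the single bump.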

SLIDE 15

Repeated Compositions

exponentially many bumps

SLIDE 16

Repeated Compositions: exponentially many bumps

[Figure: plot of f6(x), the 6-fold composition of f, against x; both axes are ticked from 0.1 to 1.]

ReLU NN: #linearRegions:
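
To make "exponentially many bumps" concrete, here is a small sketch that counts the linear pieces of the n-fold composition of the tent map. It assumes the standard tent map on [0, 1] and a dyadic evaluation grid (so every breakpoint lands exactly on a grid point); the helper names are hypothetical.

```python
import numpy as np

def tent(x):
    """Standard tent map on [0, 1] (assumed form of the slide's map)."""
    return np.where(x <= 0.5, 2.0 * x, 2.0 * (1.0 - x))

def compose(f, n, x):
    """Apply f to x n times."""
    for _ in range(n):
        x = f(x)
    return x

def count_linear_pieces(n, grid_bits=12):
    """Count linear pieces of tent^n by counting slope sign changes on a dyadic grid."""
    num_cells = 2 ** grid_bits
    xs = np.arange(num_cells + 1) / num_cells
    ys = compose(tent, n, xs)
    signs = np.sign(np.diff(ys))
    return 1 + int(np.sum(signs[1:] != signs[:-1]))

pieces = [count_linear_pieces(n) for n in range(1, 7)]
print(pieces)  # [2, 4, 8, 16, 32, 64]
```

Each extra composition doubles the number of pieces, so depth n yields 2^n pieces from only a constant number of units per layer.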

SLIDE 17

Our starting observation: Period 3
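
A point x has period 3 under f when f(f(f(x))) = x and the three points x, f(x), f(f(x)) are distinct. The slide's own illustration is not recoverable, so the sketch below uses the standard tent map on [0, 1] as an assumed example and checks one 3-cycle with exact rational arithmetic.

```python
from fractions import Fraction

def tent(x):
    """Standard tent map on [0, 1], evaluated exactly on Fractions."""
    return 2 * x if x <= Fraction(1, 2) else 2 * (1 - x)

def orbit(f, x0, steps):
    """Return [x0, f(x0), ..., f^steps(x0)]."""
    xs = [x0]
    for _ in range(steps):
        xs.append(f(xs[-1]))
    return xs

cycle = orbit(tent, Fraction(2, 7), 3)
print(cycle)  # 2/7 -> 4/7 -> 6/7 -> 2/7
```

Exact fractions avoid floating-point drift, so the return to the starting point can be tested with plain equality.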

SLIDE 18

Li-Yorke Chaos (1975)

SLIDE 19

Sharkovsky’s Theorem (1964)
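
The statement on the slide was lost in extraction. For reference, the standard form of Sharkovsky's theorem orders the positive integers as follows:

```latex
\[
3 \triangleright 5 \triangleright 7 \triangleright \cdots
\triangleright 2\cdot 3 \triangleright 2\cdot 5 \triangleright \cdots
\triangleright 2^{2}\cdot 3 \triangleright 2^{2}\cdot 5 \triangleright \cdots
\triangleright 2^{3} \triangleright 2^{2} \triangleright 2 \triangleright 1 .
\]
% If a continuous map f : [a,b] -> [a,b] has a point of period p
% and p \triangleright q, then f also has a point of period q.
```

In particular, 3 comes first in the ordering, so a point of period 3 forces points of every period, which is the Li-Yorke observation from the previous slide.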

SLIDE 21

Period-dependent Trade-offs [ICLR 2020]

Main Lemma:

SLIDE 22

Period-dependent Trade-offs [ICLR 2020]

Main Lemma:

Informal Main Result:

SLIDE 24

Examples [ICLR 2020]

[Figure: two plots of f(x) against x, both axes ticked from 0.5 to 5; panel labels: period 3, period 3, period 5, period 4, period 4, period 4.]

SLIDE 26

Our work in ICML 2020

Further connections to Dynamical Systems:

- 1. We get L1-approximation error and not just classification error.

- 2. We show tight connections between Lipschitz constant, periods of f, and oscillations.

- 3. Sharper period-dependent depth-width tradeoffs and easy constructions of examples.

- 4. Experimental validation of our theoretical results.

SLIDE 27

Our work in ICML 2020

Further connections to Dynamical Systems:

- 1. We get L1-approximation error and not just classification error.

SLIDE 28

Further connections to Dynamical Systems:

Our work in ICML 2020

Is it so hard to obtain L1 guarantees? A period-3 orbit of f only informs us about 3 values of f.

SLIDE 30

Periods, Oscillations, Lipschitz

Lemma (Lower Bound on L):

Informal Main Result (Lipschitz matches oscillations):

SLIDE 31

Proof Sketch

Definitions:

Fact [Telgarsky’16]:

SLIDE 32

Proof Sketch

Definitions:

Claim:

SLIDE 33

Proof Sketch

SLIDE 35

Periods, Oscillations

Main Lemma:

If f has period p, how many oscillations?

Period-specific threshold phenomenon:

SLIDE 36

Proof Sketch

If f has period p, how many oscillations?

Oscillations

Root of characteristic
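
The formula behind "root of characteristic" is missing from the extracted slide. As a hedged sketch, assume the characteristic polynomial is z^p - 2z^(p-2) - 1 for odd p ≥ 3; this form is an assumption recalled from the accompanying papers, not something stated in these slides, and should be checked against them. Under that assumption the oscillation growth rate is the largest real root, computed below with `numpy.roots`; the function name `growth_rate` is illustrative.

```python
import numpy as np

def growth_rate(p):
    """Largest real root of z^p - 2 z^(p-2) - 1 (assumed polynomial, odd p >= 3)."""
    coeffs = np.zeros(p + 1)
    coeffs[0] = 1.0    # z^p
    coeffs[2] = -2.0   # -2 z^(p-2)
    coeffs[p] = -1.0   # constant term
    roots = np.roots(coeffs)
    real_roots = roots[np.abs(roots.imag) < 1e-9].real
    return float(real_roots.max())

rho_3 = growth_rate(3)
rho_5 = growth_rate(5)
print(rho_3, rho_5)
```

For p = 3 the polynomial factors as (z + 1)(z^2 - z - 1), so the largest root is the golden ratio, about 1.618, which is the slope threshold quoted later in the talk.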

SLIDE 37

Proof Sketch

If f has period p, how many oscillations?

SLIDE 38

Tight examples - Sensitivity

Function of period p & Lipschitz constant matching oscillation growth:

If the slope is less than 1.618, then no period 3 appears.
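
One way to see where 1.618 comes from is to follow the orbit of 0 under f(x) = s|x| - 1, the family used in the experimental setting of this talk with s = 1.618. The sketch below is illustrative only: it shows that the orbit of 0 closes into a 3-cycle exactly when s is the golden ratio, and it does not by itself prove the absence of period 3 for smaller slopes.

```python
import math

PHI = (1 + math.sqrt(5)) / 2  # golden ratio, about 1.618

def f(x, slope):
    """f(x) = slope * |x| - 1."""
    return slope * abs(x) - 1

def orbit_of_zero(slope, steps=3):
    """Return [0, f(0), f(f(0)), ...] for the given slope."""
    xs = [0.0]
    for _ in range(steps):
        xs.append(f(xs[-1], slope))
    return xs

at_phi = orbit_of_zero(PHI)   # 0 -> -1 -> PHI - 1 -> back to (numerically) 0
below = orbit_of_zero(1.5)    # 0 -> -1 -> 0.5 -> -0.25, does not return to 0
print(at_phi, below)
```

The third iterate of 0 equals s^2 - s - 1, which vanishes precisely at the golden ratio.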

SLIDE 40

Experimental Section

Goals:

- 1. Instantiate benefits of depth for a period-specific task.

- 2. Validate our theoretical threshold for separating shallow NNs from deep.

Setting: f(x)=1.618|x|-1

Training: Define a regression task on 10K datapoints chosen uniformly at random by evaluating f. We use Adam as the optimizer and train for 1500 epochs.

Overfitting: We are interested in representation.

Width: 20, #layers: 1 up to 5

Easy Task: We take only 8 compositions of f.

Hard Task: We take 40 compositions of f.
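
The data for the two tasks can be sketched as follows. This covers only dataset generation, not the network or the Adam training loop. The sampling interval [-1, 1] and the seed are assumptions (the slide does not state them), and `make_task` is a hypothetical helper name.

```python
import numpy as np

SLOPE = 1.618  # slope from the slide's setting f(x) = 1.618|x| - 1

def f(x):
    """f(x) = 1.618 |x| - 1, applied elementwise."""
    return SLOPE * np.abs(x) - 1.0

def make_task(num_compositions, num_points=10_000, seed=0):
    """Sample inputs uniformly and label them with the repeated composition of f."""
    rng = np.random.default_rng(seed)
    # Assumption: inputs are drawn uniformly from [-1, 1], which f maps into itself.
    x = rng.uniform(-1.0, 1.0, size=num_points)
    y = x.copy()
    for _ in range(num_compositions):
        y = f(y)
    return x, y

x_easy, y_easy = make_task(8)    # easy task: 8 compositions
x_hard, y_hard = make_task(40)   # hard task: 40 compositions
```

Because f maps [-1, 1] into [-1, 0.618], the targets stay bounded no matter how many compositions are taken.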

SLIDE 41

Easy Task: We take only 8 compositions of f. Adding depth does help in reducing error.

[Figure: Regression error vs depth for the easy task.]

[Figure: Classification error vs depth for the easy task appearing in]

SLIDE 42

Hard Task: We take 40 compositions of f. Error (blue line) is independent of depth and is extremely close to the theoretical bound (orange line).

SLIDE 43

Recap

Natural property of continuous functions: Period

- 1. Sharp depth-width tradeoffs and L1-separations

- 2. Tight connections between Lipschitz constant, periods, and oscillations.

Simple constructions useful for proving separations.

Future Work

Understanding optimization (e.g., Malach, Shalev-Shwartz’19)

Unifying notions of complexity used for separations: trajectory length, global curvature, algebraic varieties

Topological Entropy from Dynamical Systems

SLIDE 44

Better Depth-Width Trade-offs for Neural Networks through the lens of Dynamical Systems

Vaggos Chatziafratis (Stanford & Google NY)

Sai Ganesh Nagarajan (SUTD)

Ioannis Panageas (SUTD => UC Irvine)

MIT MIFODS Talk by Panageas (2020):

https://www.youtube.com/watch?v=HNQ204BmOQ8

ICLR 2020 spotlight talk:

https://iclr.cc/virtual_2020/poster_BJe55gBtvH.html