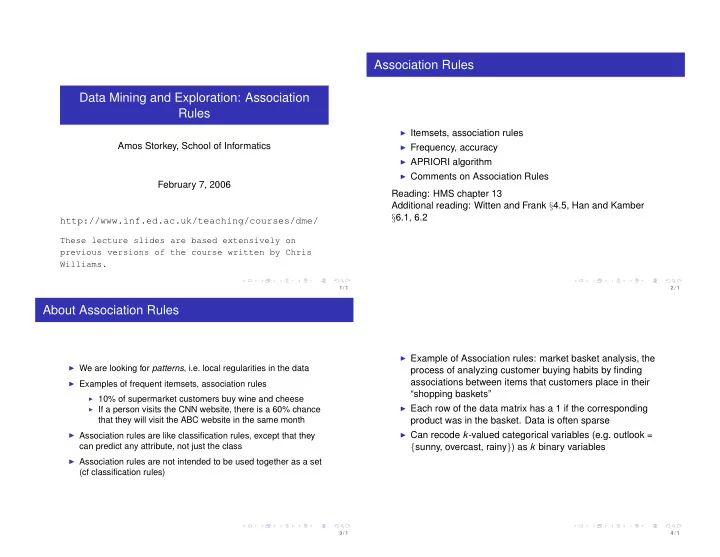

Data Mining and Exploration: Association Rules

Amos Storkey, School of Informatics

February 7, 2006

http://www.inf.ed.ac.uk/teaching/courses/dme/

These lecture slides are based extensively on previous versions of the course written by Chris Williams.


Association Rules

◮ Itemsets, association rules
◮ Frequency, accuracy
◮ APRIORI algorithm
◮ Comments on Association Rules

Reading: HMS chapter 13

Additional reading: Witten and Frank §4.5, Han and Kamber §6.1, 6.2


About Association Rules

◮ We are looking for patterns, i.e. local regularities in the data
◮ Examples of frequent itemsets and association rules:
    ◮ 10% of supermarket customers buy wine and cheese
    ◮ If a person visits the CNN website, there is a 60% chance that they will visit the ABC website in the same month
◮ Association rules are like classification rules, except that they can predict any attribute, not just the class
◮ Association rules are not intended to be used together as a set (cf. classification rules)
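
The two kinds of statement in the examples above (how often an itemset occurs, and how often a rule holds when its left-hand side is present) can be computed by simple counting. The following is a minimal sketch, not the course's own code: the toy `baskets` data and the function names `frequency` and `accuracy` are hypothetical, chosen to match the terms used in the lecture outline.

```python
# Hypothetical toy data: each basket is the set of items one customer bought.
baskets = [
    {"wine", "cheese", "bread"},
    {"wine", "cheese"},
    {"bread", "milk"},
    {"wine", "milk"},
    {"cheese", "bread"},
]


def frequency(itemset, baskets):
    """Fraction of baskets that contain every item in `itemset`."""
    itemset = set(itemset)
    return sum(itemset <= basket for basket in baskets) / len(baskets)


def accuracy(antecedent, consequent, baskets):
    """Fraction of baskets containing `antecedent` that also contain `consequent`."""
    antecedent = set(antecedent)
    both = antecedent | set(consequent)
    n_antecedent = sum(antecedent <= basket for basket in baskets)
    if n_antecedent == 0:
        raise ValueError("antecedent never occurs, so the accuracy is undefined")
    n_both = sum(both <= basket for basket in baskets)
    return n_both / n_antecedent


# "x% of customers buy wine and cheese" is a statement about itemset frequency.
print(frequency({"wine", "cheese"}, baskets))
# "If a customer buys wine, there is a y% chance they buy cheese" is a rule accuracy.
print(accuracy({"wine"}, {"cheese"}, baskets))
```

On this toy data, wine and cheese appear together in 2 of 5 baskets, and 2 of the 3 baskets containing wine also contain cheese.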


◮ Example of association rules: market basket analysis, the process of analyzing customer buying habits by finding associations between items that customers place in their “shopping baskets”
◮ Each row of the data matrix represents one basket, with a 1 in the column of each product that was in the basket. The data are often sparse
◮ Can recode k-valued categorical variables (e.g. outlook = {sunny, overcast, rainy}) as k binary variables
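
As an illustration of the binary representation described above, here is a small sketch that builds a basket-by-product 0/1 matrix and recodes a k-valued categorical variable as k binary variables. The helper names `basket_matrix` and `one_hot` and the example data are assumptions made for this sketch; real market basket data would usually be stored in a sparse format instead of dense lists.

```python
def basket_matrix(baskets, products):
    """One row per basket, one column per product; 1 if the product is in the basket."""
    return [[1 if product in basket else 0 for product in products]
            for basket in baskets]


def one_hot(values, categories):
    """Recode a k-valued categorical variable as k binary variables per record."""
    return [[1 if value == category else 0 for category in categories]
            for value in values]


# Hypothetical example data.
products = ["bread", "cheese", "milk", "wine"]
baskets = [{"wine", "cheese"}, {"bread", "milk"}, {"wine"}]
for row in basket_matrix(baskets, products):
    print(row)

# The outlook variable from the slide, recoded as three binary columns.
categories = ["sunny", "overcast", "rainy"]
outlook = ["sunny", "rainy", "overcast", "sunny"]
for row in one_hot(outlook, categories):
    print(row)
```

After recoding, each binary column can be treated as an item, so a record with outlook = sunny is a "basket" that contains the item outlook=sunny.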
