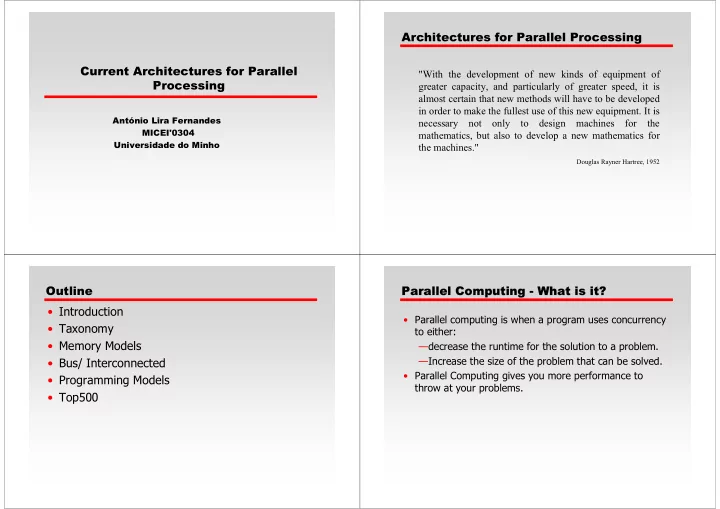

SLIDE 10 Outline

- Introduction

- Taxonomy

- Memory Models

- Buses / Interconnects

- Programming Models

- Top500

Top 500

| Rank | Site | Country/Year | Computer / Processors | Manufacturer | Computer Family | Model | Inst. Type | Rmax (GFlop/s) | Rpeak (GFlop/s) | Nmax | N½ |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | Earth Simulator Center | Japan/2002 | Earth-Simulator / 5120 | NEC | NEC Vector | SX6 | Research | 35860 | 40960 | 1075200 | 266240 |
| 2 | Los Alamos National Laboratory | United States/2002 | ASCI Q - AlphaServer SC45, 1.25 GHz / 8192 | HP | HP AlphaServer | Alpha-Server-Cluster | Research | 13880 | 20480 | 633000 | 225000 |
| 3 | Virginia Tech | United States/2003 | 1100 Dual 2.0 GHz Apple G5/Mellanox Infiniband 4X/Cisco GigE / 2200 | Self-made | NOW - PowerPC | G5 Cluster | Academic | 10280 | 17600 | 520000 | 152000 |
| 4 | NCSA | United States/2003 | Tungsten PowerEdge 1750, P4 Xeon 3.06 GHz, Myrinet / 2500 | Dell | Dell Cluster | PowerEdge 1750, Myrinet | Academic | 9819 | 15300 | 630000 | |
| 5 | Pacific Northwest National Laboratory | United States/2003 | Mpp2 Integrity rx2600 Itanium2 1.5 GHz, Quadrics / 1936 | HP | HP Cluster | Integrity rx2600 Itanium2 Cluster | Research | 8633 | 11616 | 835000 | 140000 |
| 6 | Los Alamos National Laboratory | United States/2003 | Lightning Opteron 2 GHz, Myrinet / 2816 | Linux Networx | NOW - AMD | NOW Cluster - AMD - Myrinet | Research | 8051 | 11264 | 761160 | 109208 |
| 7 | Lawrence Livermore National Laboratory | United States/2002 | MCR Linux Cluster Xeon 2.4 GHz - Quadrics / 2304 | Linux Networx/Quadrics | NOW - Intel Pentium | NOW Cluster - Intel | Research | 7634 | 11060 | 350000 | 75000 |
| 8 | Lawrence Livermore National Laboratory | United States/2000 | ASCI White, SP Power3 375 MHz / 8192 | IBM | IBM SP | SP Power3 375 MHz high node | Research | 7304 | 12288 | 640000 | |
| 9 | NERSC/LBNL | United States/2002 | Seaborg SP Power3 375 MHz 16 way / 6656 | IBM | IBM SP | SP Power3 375 MHz high node | Research | 7304 | 9984 | 640000 | |
| 10 | Lawrence Livermore National Laboratory | United States/2003 | xSeries Cluster Xeon 2.4 GHz - Quadrics / 1920 | IBM/Quadrics | IBM Cluster | xSeries Cluster Xeon | Research | 6586 | 9216 | 425000 | 90000 |
| 14 | Chinese Academy of Science | China/2003 | DeepComp 6800, Itanium2 1.3 GHz, QsNet / 1024 | Legend | Legend | DeepComp 6800 | Academic | 4183 | 5324.8 | 491488 | |
| 15 | Commissariat a l'Energie Atomique (CEA) | France/2001 | AlphaServer SC45, 1 GHz / 2560 | HP | HP AlphaServer | Alpha-Server-Cluster | Research | 3980 | 5120 | 360000 | 85000 |
| 16 | HPCx | United Kingdom/2002 | pSeries 690 Turbo 1.3GHz / 1280 | IBM | IBM SP | SP Power4, Colony | Academic | 3406 | 6656 | 317000 | |

Rmax: maximal LINPACK performance achieved; Rpeak: theoretical peak performance; Nmax: problem size at which Rmax was achieved; N½: problem size at which half of Rmax is achieved.
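The Rmax and Rpeak figures listed above are easier to compare once they are turned into two derived quantities: LINPACK efficiency (Rmax / Rpeak) and theoretical peak per processor (Rpeak / processors, using the processor count from the "Computer / Processors" column). The snippet below is a minimal sketch of that arithmetic; the data is transcribed from the list above, and the helper names `efficiency` and `peak_per_processor` are hypothetical, chosen only for illustration.

```python
# (rank, computer, processors, Rmax GFlop/s, Rpeak GFlop/s), transcribed from the list above.
systems = [
    (1, "Earth-Simulator", 5120, 35860, 40960),
    (2, "ASCI Q", 8192, 13880, 20480),
    (3, "Apple G5 cluster (Virginia Tech)", 2200, 10280, 17600),
    (4, "Tungsten", 2500, 9819, 15300),
    (5, "Mpp2", 1936, 8633, 11616),
    (6, "Lightning", 2816, 8051, 11264),
    (7, "MCR Linux Cluster", 2304, 7634, 11060),
    (8, "ASCI White", 8192, 7304, 12288),
    (9, "Seaborg", 6656, 7304, 9984),
    (10, "xSeries Cluster", 1920, 6586, 9216),
    (14, "DeepComp 6800", 1024, 4183, 5324.8),
    (15, "AlphaServer SC45 (CEA)", 2560, 3980, 5120),
    (16, "HPCx", 1280, 3406, 6656),
]


def efficiency(rmax: float, rpeak: float) -> float:
    """Hypothetical helper: fraction of theoretical peak reached on LINPACK."""
    return rmax / rpeak


def peak_per_processor(rpeak: float, processors: int) -> float:
    """Hypothetical helper: theoretical peak GFlop/s per processor."""
    return rpeak / processors


if __name__ == "__main__":
    # Print systems from most to least efficient.
    by_eff = sorted(systems, key=lambda s: efficiency(s[3], s[4]), reverse=True)
    for rank, name, procs, rmax, rpeak in by_eff:
        print(f"{rank:>2} {name:<33} eff={efficiency(rmax, rpeak):6.1%} "
              f"peak/proc={peak_per_processor(rpeak, procs):5.2f} GFlop/s")
```

With these figures the Earth Simulator reaches roughly 87.5% of its peak, while HPCx reaches about 51%; both the Earth Simulator and the Virginia Tech G5 cluster work out to 8 GFlop/s of peak per processor, so the gap in rank comes from processor count and achieved efficiency rather than per-processor peak.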
