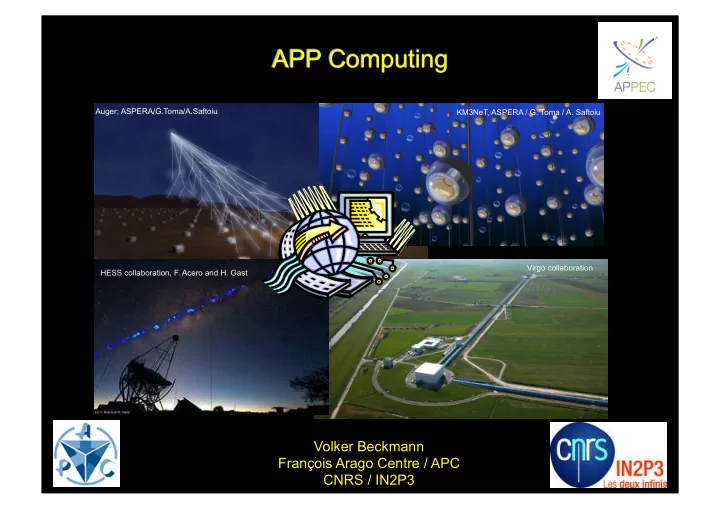

APP Computing

Volker Beckmann, François Arago Centre / APC, CNRS / IN2P3

Virgo collaboration; KM3NeT, ASPERA / G. Toma / A. Saftoiu; Auger, ASPERA / G. Toma / A. Saftoiu; HESS collaboration, F. Acero and H. Gast

Outline: Status, Computing

Berghöfer et al. 2015 arXiv:1512.00988

HESS Fermi Cherenkov Telescope Array (CTA)

Fermi INTEGRAL Swift

Auger; ASPERA/G.Toma/A.Saftoiu Antares; Credits: F. Montanet

Trend similar for tape storage

CPU time usage on France Grilles, per project

InSiDe Jülich

Credit: Pierre Macchi (CC-IN2P3)

Computing for 7 PNHE projects amounts to 5% of GENCI computing; see the previous presentation by F. Casse.

Euclid RedBook (2012): images (optical / infrared); spectra; external (ground-based) images; merging of the data; photometric redshifts (distances); spectra; shape measurements; high-level science products