Apache Storm: Hands-on Session

Academic Year 2018/19, Fabiana Rossi
Master's Degree (Laurea Magistrale) in Computer Engineering (Ingegneria Informatica), 2nd year

Macroarea di Ingegneria (Engineering Area), Dipartimento di Ingegneria Civile e Ingegneria Informatica (Department of Civil and Computer Engineering)

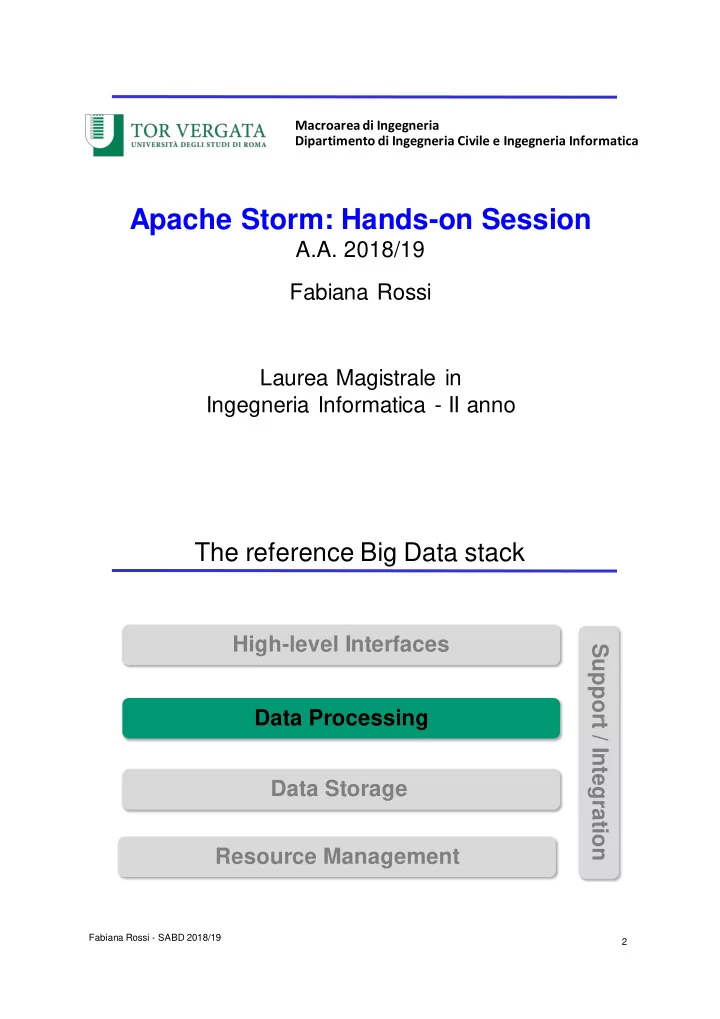

The reference Big Data stack

Fabiana Rossi - SABD 2018/19 2