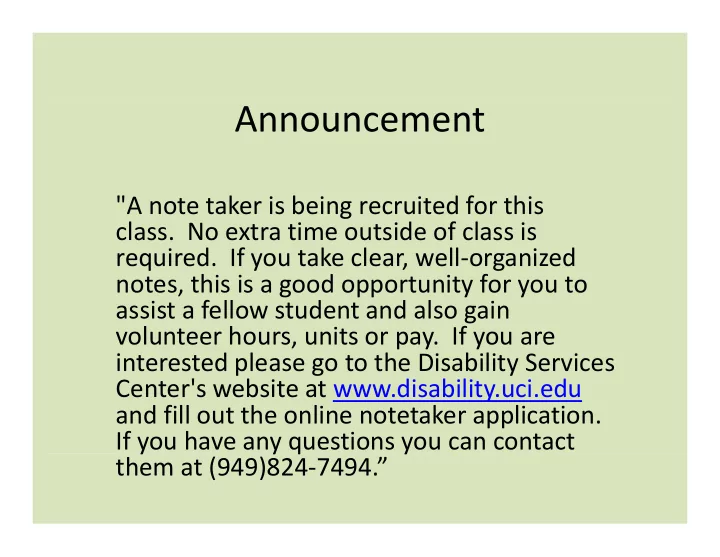

Announcement

"A note taker is being recruited for this class. No extra time outside of class is required. If you take clear, well-organized notes, this is a good opportunity for you to assist a fellow student and also gain ...

(Figure: example with N = 3, M = 4.)

– You have N lights that can change colors.

– Initial state: Each light is a given color.

– Actions: Change the color of a specific light.

– Transition Model: RESULT(s,a) = s’

where s’ differs from s by exactly one light’s color.

– Goal test: A desired color for each light.


– Find: Shortest action sequence to goal.


– h(n) = number of lights the wrong color

– f(n) = g(n) + h(n) = (under-)estimate of total path cost

– g(n) = path cost so far = number of actions so far

– Admissible = never overestimates the cost to the goal.

– Yes, because: (a) each light that is the wrong color must change; and (b) only one light changes at each action.

– Consistent = h(n) ≤ c(n,a,n’) + h(n’), for n’ a successor of n.

– Yes, because: (a) c(n,a,n’) = 1; and (b) h(n) ≤ h(n’) + 1.
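The consistency argument for the lights heuristic can be checked by brute force on a small instance. This is a minimal sketch, assuming that M is the number of colors a light can take and that one action may set a light to any other color; the names `h`, `successors`, and `check_consistency` are illustrative and do not come from the slides.

```python
from itertools import product

N, M = 3, 4  # N lights; assumption: M is the number of colors per light


def h(state, goal):
    """Heuristic: number of lights that are the wrong color."""
    return sum(1 for s, g in zip(state, goal) if s != g)


def successors(state):
    """States that differ from `state` by exactly one light's color (cost 1 each)."""
    for i, color in enumerate(state):
        for new_color in range(M):
            if new_color != color:
                yield state[:i] + (new_color,) + state[i + 1:]


def check_consistency(goal):
    """Check h(n) <= c(n, a, n') + h(n') with c = 1 for every state and action."""
    for state in product(range(M), repeat=N):
        for nxt in successors(state):
            if h(state, goal) > 1 + h(nxt, goal):
                return False
    return True


goal = (0, 1, 2)
print(h((3, 1, 0), goal))        # 2: the first and third lights are wrong
print(check_consistency(goal))
```

Under these assumptions each wrong light can be fixed in one action, so the heuristic never exceeds the true remaining cost.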

– Gradient Descent in continuous spaces

Note that a state cannot be an incomplete configuration with m < n queens.

h = number of pairs of queens that are attacking each other, either directly or indirectly (h = 17 for the above state).

Each number indicates h if we move a queen in its corresponding column.

A local minimum with h = 1

(What can you do to get out of this local minimum?)
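The n-queens cost h and the notion of a local minimum can be made concrete with a short sketch. It assumes a state is a list `queens` where `queens[c]` is the row of the queen in column `c`; the function names are illustrative, not taken from the slides.

```python
def attacking_pairs(queens):
    """h = number of pairs of queens on the same row or diagonal.

    Pairs are counted even when another queen sits between them.
    """
    n = len(queens)
    count = 0
    for c1 in range(n):
        for c2 in range(c1 + 1, n):
            same_row = queens[c1] == queens[c2]
            same_diagonal = abs(queens[c1] - queens[c2]) == c2 - c1
            if same_row or same_diagonal:
                count += 1
    return count


def is_local_minimum(queens):
    """True if no move of a single queen within its column lowers h."""
    current = attacking_pairs(queens)
    n = len(queens)
    for col in range(n):
        for row in range(n):
            if row != queens[col]:
                candidate = queens[:col] + [row] + queens[col + 1:]
                if attacking_pairs(candidate) < current:
                    return False
    return True


print(attacking_pairs([0, 4, 7, 5, 2, 6, 1, 3]))  # 0: this placement is a solution
print(attacking_pairs([0] * 8))                   # 28: every pair shares a row
```

A state with h > 0 for which `is_local_minimum` returns True is stuck under plain hill climbing; restarting from a random state is one common way out.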

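We have a cost function $C(x_1, \ldots, x_n)$ and we want to minimize it over continuous variables $x_1, x_2, \ldots, x_n$.

Gradient with respect to each variable:

$$\frac{\partial}{\partial x_i} C(x_1, \ldots, x_n) \quad \forall i$$

Small step downhill, with step size $\lambda$:

$$x_i' = x_i - \lambda \frac{\partial}{\partial x_i} C(x_1, \ldots, x_n) \quad \forall i$$

Check that the cost decreased:

$$C(x_1, \ldots, x_i', \ldots, x_n) < C(x_1, \ldots, x_i, \ldots, x_n)$$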
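A gradient-descent loop for a cost function over continuous variables can be sketched as follows. This is a minimal illustration under stated assumptions: the gradient is approximated by central differences, a step is accepted only if the cost decreases, and the step size is halved on rejection (that halving rule is an assumption, not something the slides specify). The function names and the example cost are hypothetical.

```python
def numerical_gradient(C, x, eps=1e-6):
    """Approximate dC/dx_i for every i with central differences."""
    grad = []
    for i in range(len(x)):
        x_plus = x[:i] + [x[i] + eps] + x[i + 1:]
        x_minus = x[:i] + [x[i] - eps] + x[i + 1:]
        grad.append((C(x_plus) - C(x_minus)) / (2 * eps))
    return grad


def gradient_descent(C, x, step=0.1, iterations=200):
    """Repeat: compute the gradient, step downhill, keep the step if C decreased."""
    x = list(x)
    for _ in range(iterations):
        grad = numerical_gradient(C, x)
        candidate = [xi - step * gi for xi, gi in zip(x, grad)]
        if C(candidate) < C(x):
            x = candidate      # accept the move
        else:
            step /= 2          # assumption: reject and try a smaller step
    return x


def cost(x):
    """Example cost with its minimum at (1, -2)."""
    return (x[0] - 1) ** 2 + (x[1] + 2) ** 2


x_min = gradient_descent(cost, [0.0, 0.0])
print(x_min)
```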

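Line search picks a direction $v_t$ and searches along that direction for the optimal step size $\lambda^*$:

$$\lambda^* = \arg\min_{\lambda} C(x_t + \lambda v_t)$$

Repeated doubling of the step: $\lambda \to 2\lambda \to 4\lambda \to 8\lambda$ (until cost increases).

A very good method for choosing the search direction is “conjugate gradients”.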
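The step-doubling search along a fixed direction can be sketched as below. This is an illustrative sketch only: the initial step, the cap on the number of doublings, and the function name are assumptions, and the result is a coarse estimate (within a factor of two of the best step along the direction), not an exact minimizer.

```python
def line_search_doubling(C, x, v, initial_step=1e-3, max_doublings=60):
    """Double the step along direction v until the cost stops decreasing."""

    def cost_at(step):
        return C([xi + step * vi for xi, vi in zip(x, v)])

    best_step, best_cost = 0.0, cost_at(0.0)
    step = initial_step
    for _ in range(max_doublings):
        c = cost_at(step)
        if c >= best_cost:
            break              # cost increased: keep the previous step
        best_step, best_cost = step, c
        step *= 2
    return best_step


def cost(x):
    """Example cost with its minimum at x = 3."""
    return (x[0] - 3) ** 2


# Search from x = 0 along the downhill direction v = +1.
best = line_search_doubling(cost, [0.0], [1.0])
print(best)
```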

Basins of attraction for $x^5 - 1 = 0$; darker means more iterations to converge.

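Newton's method finds a root of $f$ by following the tangent at $x_n$ to where it crosses zero:

$$f'(x_n) = \frac{0 - f(x_n)}{x_{n+1} - x_n} \;\Rightarrow\; x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)}$$

For optimization, apply the same step to the roots of $f'$:

$$f''(x_n) = \frac{0 - f'(x_n)}{x_{n+1} - x_n} \;\Rightarrow\; x_{n+1} = x_n - \left[f''(x_n)\right]^{-1} f'(x_n)$$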
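The Newton iteration can be tried directly on the equation from the figure caption, x^5 − 1 = 0. The sketch below is a minimal illustration; the tolerance, the iteration cap, and the function name `newton_root` are assumptions. Complex starting points are allowed, since the basins-of-attraction picture is drawn over the complex plane.

```python
def newton_root(f, df, x0, tol=1e-12, max_iter=100):
    """Iterate x_{n+1} = x_n - f(x_n) / f'(x_n) until the step is tiny."""
    x = x0
    for iteration in range(1, max_iter + 1):
        step = f(x) / df(x)
        x = x - step
        if abs(step) < tol:
            return x, iteration
    return x, max_iter


def f(x):
    return x ** 5 - 1


def df(x):
    return 5 * x ** 4


root, iterations = newton_root(f, df, 2.0)
print(root, iterations)

# A complex start converges to one of the five complex roots, depending on its basin.
print(newton_root(f, df, complex(-1.0, 0.5)))
```

The iteration count returned for each starting point is the quantity that the shading in such a figure represents.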

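Idea: escape local optima by allowing some "bad" moves, but gradually decrease their frequency.

If the temperature decreases slowly enough, simulated annealing search will find a global optimum with probability approaching 1 (however, this may take VERY long).

– However, in any finite search space RANDOM GUESSING also will find a global optimum with probability approaching 1.

Widely used in VLSI layout, airline scheduling.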
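The idea of accepting "bad" moves with decreasing frequency can be sketched as follows. This is an illustrative sketch, not the slides' algorithm: the geometric cooling schedule, the acceptance rule exp(-delta / T), the parameter values, and the example cost are all assumptions chosen for the demonstration.

```python
import math
import random


def simulated_annealing(cost, neighbor, state, t_start=1.0, t_end=1e-3,
                        cooling=0.995, rng=None):
    """Minimize `cost`; worse moves are accepted less often as T shrinks."""
    rng = rng or random.Random(0)
    best = state
    t = t_start
    while t > t_end:
        candidate = neighbor(state, rng)
        delta = cost(candidate) - cost(state)
        # Always accept improvements; accept a "bad" move with probability exp(-delta / t).
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            state = candidate
        if cost(state) < cost(best):
            best = state
        t *= cooling  # assumption: geometric cooling schedule
    return best


def bumpy_cost(x):
    """Example 1-D cost with several local minima."""
    return x * x + 10 * math.cos(3 * x)


def random_neighbor(x, rng):
    return x + rng.uniform(-0.5, 0.5)


start = 4.0
result = simulated_annealing(bumpy_cost, random_neighbor, start)
print(result, bumpy_cost(result))
```

Because the function tracks the best state seen so far, its result is never worse than the starting state, although reaching a global optimum is not guaranteed in a finite run.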

excluded from being visited again.

(in principle) avoids getting stuck in local minima.

complete list and repeat.

– May lose diversity as search progresses, resulting in wasted effort.

and 1s)


mutation

fitness: # non-attacking queens

probability of being regenerated in next generation

8 × 7/2 = 28)
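The fitness measure and the regeneration probability for the 8-queens genetic algorithm can be sketched as below. The sketch assumes that fitness counts non-attacking pairs of queens (so the maximum is 28 for 8 queens), that an individual is a list giving the queen's row in each column, and that selection probability is proportional to fitness; the function names are illustrative.

```python
def fitness(queens):
    """Number of non-attacking pairs of queens (maximum n * (n - 1) / 2)."""
    n = len(queens)
    max_pairs = n * (n - 1) // 2
    attacking = sum(
        1
        for c1 in range(n)
        for c2 in range(c1 + 1, n)
        if queens[c1] == queens[c2] or abs(queens[c1] - queens[c2]) == c2 - c1
    )
    return max_pairs - attacking


def selection_probabilities(population):
    """Assumption: chance of being regenerated is proportional to fitness."""
    scores = [fitness(individual) for individual in population]
    total = sum(scores)
    if total == 0:
        # Degenerate case: every individual has fitness 0, so choose uniformly.
        return [1 / len(population)] * len(population)
    return [score / total for score in scores]


population = [
    [0, 4, 7, 5, 2, 6, 1, 3],  # a solution: fitness 28
    [0, 0, 0, 0, 0, 0, 0, 0],  # all queens in one row: fitness 0
    [0, 4, 7, 5, 2, 6, 1, 1],  # a near-solution
]
print([fitness(individual) for individual in population])
print(selection_probabilities(population))
```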

Problems of the sort:

$$\text{maximize } c^T x \quad \text{subject to: } Ax \le b;\; Bx = c$$

available for LRs.