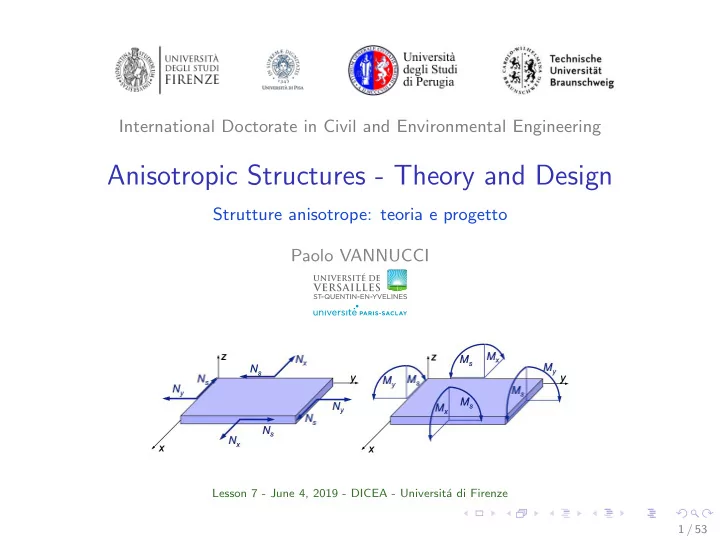

International Doctorate in Civil and Environmental Engineering

Anisotropic Structures - Theory and Design

Strutture anisotrope: teoria e progetto

Paolo VANNUCCI

Lesson 7 - June 4, 2019 - DICEA - Università di Firenze

Figure 1.8: Crossover among species: (a) parents couple, (b) effect of the shift operator, (c) crossover on homologous genes, (d) children couple and (e) effect of the chromosome reorder operator.

Figure 1.9: Mutation of species: (a) mutation of the number of chromosomes and effect of the chromosome addition-deletion operator, (b) effect of the mutation operator on every gene.
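The crossover steps named in the caption of Figure 1.8 can be illustrated with a minimal sketch. Everything below is an assumption made for illustration and not the lecture's implementation: the function name `crossover_species`, the representation of an individual as a list of chromosomes (each a list of numeric genes of equal length), the random-offset form of the shift operator, and the gene-by-gene swap with probability 1/2. The chromosome reorder operator of panel (e) is not modelled.

```python
import random


def crossover_species(parent_a, parent_b, rng):
    """Cross two individuals that may carry different numbers of chromosomes.

    Each individual is a list of chromosomes; each chromosome is a list of
    genes (plain numbers here, an assumption). Returns the tuple
    (child_of_longer_parent, child_of_shorter_parent, shift).
    """
    # Order the couple so that `longer` has at least as many chromosomes.
    if len(parent_a) >= len(parent_b):
        longer, shorter = parent_a, parent_b
    else:
        longer, shorter = parent_b, parent_a

    # Shift operator (assumed form): a random offset decides which
    # chromosomes of the longer parent are homologous to the shorter one's.
    shift = rng.randint(0, len(longer) - len(shorter))

    # Children start as copies; unpaired chromosomes are inherited unchanged.
    child_long = [list(c) for c in longer]
    child_short = [list(c) for c in shorter]

    # Crossover on homologous genes: exchange gene g of each paired
    # chromosome with probability 1/2 (an illustrative choice).
    for i, chrom in enumerate(shorter):
        j = i + shift
        for g in range(len(chrom)):
            if rng.random() < 0.5:
                child_long[j][g] = shorter[i][g]
                child_short[i][g] = longer[j][g]

    return child_long, child_short, shift


# Hypothetical parents: three chromosomes against one, two genes each.
rng = random.Random(0)
a = [[1, 2], [3, 4], [5, 6]]
b = [[10, 20]]
child_a, child_b, shift = crossover_species(a, b, rng)
```

Each child keeps the number of chromosomes of its parent, so the two species stay distinct after crossover; only the genes of the paired (homologous) chromosomes can be exchanged.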

$$\ldots + x_2^2 \sin(a x_1) \cos(2 b x_2),$$

$$\ldots - x_2 - 1 \le 0,$$

[Figure: two plots against generation, titled "Evolution of the best" and "Evolution of the average".]

[Figure: two plots against iteration, titled "Evolution of the average" and "Evolution of the best of ever".]

[Figure: three panels labelled var_1, var_2 and var_3.]

[Figure: two plots against iteration, titled "Evolution of the average" and "Evolution of the best of ever".]

[Figure: two plots against iteration, titled "Evolution of the average" and "Evolution of the best of ever".]

[Figure: three panels labelled var_1, var_2 and var_3.]

[Figure: four panels titled "A1111, B1111 and D1111", "A1212, B1212 and D1212", "A1122, B1122 and D1122" and "A1112, B1112 and D1112".]

[Figure: two plots against iteration, titled "Evolution of the average" and "Evolution of the best of ever".]

[Figure: three panels titled "A1111, B1111 and D1111", "A1212, B1212 and D1212" and "A1122, B1122 and D1122".]

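$$\delta_j \quad \varphi(\delta_j) = \left(R_0^D - R_{0\,\mathrm{opt}}^D\right)^2 + \left(R_1^D - R_{1\,\mathrm{opt}}^D\right)^2 + \ldots^2 + \left(R_1^B\right)^2 + (\Phi_0^D - \Phi_1^D - K^D \pi \ldots$$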
