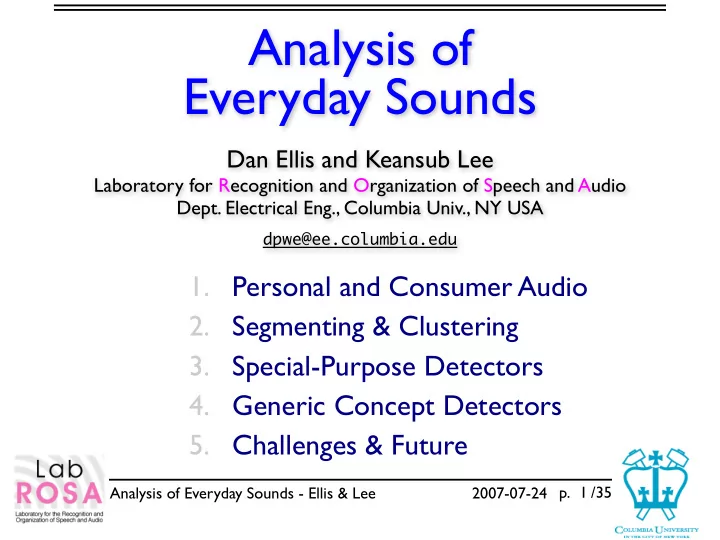

SLIDE 17 Analysis of Everyday Sounds - Ellis & Lee 2007-07-24 p. 17/35

Browsing Interface

- Browsing / Diary interface
  - links to other information (diary, email, photos)
  - synchronize with note taking? (Stifelman & Arons)
  - audio thumbnails

- Release Tools + “how to” for capture


[Figure: screenshot of the diary browsing interface. Horizontal axis: time of day, 08:00 to 17:00 in 30-minute steps. One row per day, 2004-09-13 through 2004-09-17. Each row is divided into labeled segments such as preschool, cafe, lecture, office, outdoor, group, lab, meeting, postlec, and seminar.]