Algorithms using “real” numbers

- floating point arithmetic

- typical computational errors due to limited precision arithmetic

- advice for constructing algorithms using real numbers


Algorithms using “real” numbers

- Floating point arithmetic

  – limited precision
  – computational errors
  – IEEE standard
  – type double in Java

- Computational errors due to limited precision arithmetic

  – typical errors involving summation, convergence, subtraction
  – how to avoid some of them

- Applications of numerical algorithms

  – example: computation of π
  – graphical / geometric computations
  – scientific computations


Floating point arithmetic: limited precision

- A computer can handle only a finite subset of the reals directly in hardware

- These numbers make up a floating point number system $F(\beta, s, m, M)$, characterized by

  – a base $\beta \in \mathbb{N} \setminus \{1\}$
  – a number of digits $s \in \mathbb{N}$
  – a smallest exponent $m \in \mathbb{Z}$
  – a largest exponent $M \in \mathbb{Z}$

- Each floating point number has the form

$$y = \pm d_1.d_2 \ldots d_s \cdot \beta^{e}, \quad \text{with } m \le e \le M \text{ and } 0 \le d_k \le \beta - 1.$$


- The mantissa $d_1.d_2 \ldots d_s$ represents $d_1 + d_2 \cdot \beta^{-1} + \cdots + d_s \cdot \beta^{-s+1}$.

- The exponent part is $\beta^{e}$.

- The system includes $0 = 0.0 \ldots 0 \cdot \beta^{e}$.

- All other numbers are normalised: $1 \le d_1 \le \beta - 1$.
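
To connect this form to the type double in Java, the following sketch splits a double into its sign, exponent e and fraction bits, and then rebuilds the value from those parts. It assumes the IEEE 754 binary64 layout (β = 2, s = 53 with an implicit leading digit d1 = 1, exponent bias 1023) and only handles finite, normalised inputs. The class and method names are hypothetical and chosen for illustration only.

```java
public class DoubleParts {
    // Returns {sign, unbiased exponent e, 52-bit fraction field}.
    // Assumption: x is finite and normalised (not 0, subnormal, infinite or NaN).
    public static long[] decompose(double x) {
        long bits = Double.doubleToLongBits(x);
        long sign = (bits >>> 63) == 0 ? 1 : -1;
        long exponent = ((bits >>> 52) & 0x7FFL) - 1023;  // remove the exponent bias
        long fraction = bits & 0x000FFFFFFFFFFFFFL;       // digits d2 ... d53 as an integer
        return new long[] { sign, exponent, fraction };
    }

    // Rebuilds the value as sign * (1 + fraction / 2^52) * 2^e.
    public static double recompose(long[] parts) {
        double mantissa = 1.0 + parts[2] / (double) (1L << 52); // d1.d2...d53 with d1 = 1
        return parts[0] * Math.scalb(mantissa, (int) parts[1]); // exact scaling by 2^e
    }

    public static void main(String[] args) {
        long[] p = decompose(-6.5); // -6.5 = -1.101 (binary) * 2^2
        System.out.println("sign = " + p[0] + ", e = " + p[1]);
        System.out.println("rebuilt value = " + recompose(p));
    }
}
```

The implicit leading 1 is what the normalisation condition buys in base 2: since d1 can only be 1, it does not need to be stored.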


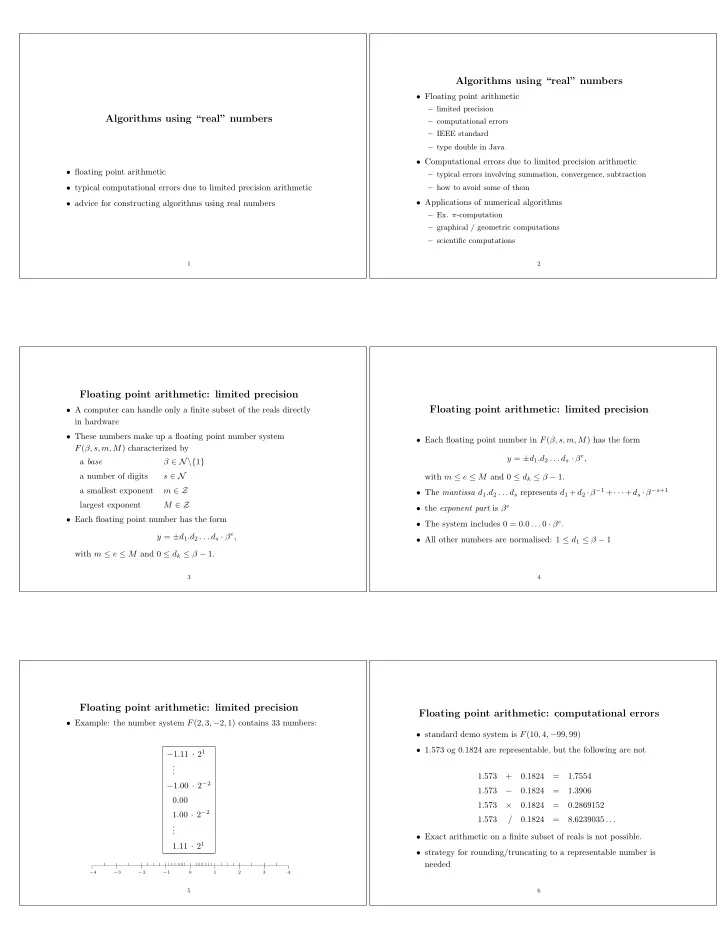


- Example: the number system $F(2, 3, -2, 1)$ contains 33 numbers:

$$-1.11 \cdot 2^{1}, \;\ldots,\; -1.00 \cdot 2^{-2}, \; 0.00, \; 1.00 \cdot 2^{-2}, \;\ldots,\; 1.11 \cdot 2^{1}$$

[Figure: number line with ticks from −4 to 4]
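
The count of 33 can be checked by brute force. The sketch below enumerates zero plus every normalised number of a small system F(β, s, m, M) by running through all admissible digit strings and exponents. The class and method names are hypothetical, and the approach is only meant for toy systems whose values are exactly representable as double (which holds for β = 2 here).

```java
import java.util.TreeSet;

public class ToyFloatSystem {
    // Integer power, to avoid any rounding in the digit bounds.
    private static long ipow(int base, int exp) {
        long result = 1;
        for (int i = 0; i < exp; i++) result *= base;
        return result;
    }

    // Enumerates all values of F(beta, s, m, M): zero plus every normalised number.
    public static TreeSet<Double> enumerate(int beta, int s, int m, int M) {
        TreeSet<Double> values = new TreeSet<>();
        values.add(0.0);
        long lo = ipow(beta, s - 1); // digit string 1 0 ... 0, read as an integer
        long hi = ipow(beta, s) - 1; // digit string with every digit equal to beta - 1
        for (int e = m; e <= M; e++) {
            for (long digits = lo; digits <= hi; digits++) {
                // d1.d2...ds * beta^e equals digits * beta^(e - (s - 1))
                double y = digits * Math.pow(beta, e - (s - 1));
                values.add(y);
                values.add(-y);
            }
        }
        return values;
    }

    public static void main(String[] args) {
        TreeSet<Double> f = enumerate(2, 3, -2, 1);
        System.out.println("count             = " + f.size());      // 33
        System.out.println("largest           = " + f.last());      // 1.11 (binary) * 2^1
        System.out.println("smallest positive = " + f.higher(0.0)); // 1.00 (binary) * 2^-2
    }
}
```

Printing the sorted set also shows that the spacing between neighbouring numbers doubles each time the exponent increases by one.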

Floating point arithmetic: computational errors

- The standard demo system is $F(10, 4, -99, 99)$.

- 1.573 and 0.1824 are representable, but the results of the following operations are not:

  – 1.573 + 0.1824 = 1.7554
  – 1.573 − 0.1824 = 1.3906
  – 1.573 × 0.1824 = 0.2869152
  – 1.573 / 0.1824 = 8.6239035…

- Exact arithmetic on a finite subset of reals is not possible.

- A strategy for rounding or truncating to a representable number is needed.
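
The effect of the two strategies can be tried out on the four operations with 1.573 and 0.1824. The sketch below uses `java.math.BigDecimal` with a four-digit `MathContext` as a stand-in for the demo system F(10, 4, −99, 99). This is an assumption made for illustration: the exponent limits −99 and 99 are not modelled, rounding to nearest uses the half-even tie rule, and the class and constant names are hypothetical.

```java
import java.math.BigDecimal;
import java.math.MathContext;
import java.math.RoundingMode;

public class FourDigitDemo {
    // Four significant decimal digits, rounding to nearest (ties to even).
    public static final MathContext ROUND = new MathContext(4, RoundingMode.HALF_EVEN);
    // Four significant decimal digits, truncating (rounding toward zero).
    public static final MathContext CHOP = new MathContext(4, RoundingMode.DOWN);

    public static void main(String[] args) {
        BigDecimal a = new BigDecimal("1.573");
        BigDecimal b = new BigDecimal("0.1824");
        for (MathContext mc : new MathContext[] { ROUND, CHOP }) {
            System.out.println(mc.getRoundingMode());
            System.out.println("  a + b = " + a.add(b, mc));
            System.out.println("  a - b = " + a.subtract(b, mc));
            System.out.println("  a * b = " + a.multiply(b, mc));
            System.out.println("  a / b = " + a.divide(b, mc));
        }
    }
}
```

With rounding, the difference 1.3906 becomes 1.391 and the quotient becomes 8.624; with truncation they become 1.390 and 8.623. The two strategies therefore give different stored results for the same exact value.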
