Learning From Data, Lecture 4: Real Learning is Feasible

- Real Learning vs. Verification
- The Two-Step Solution to Learning
- Closer to Reality: Error and Noise

- M. Magdon-Ismail

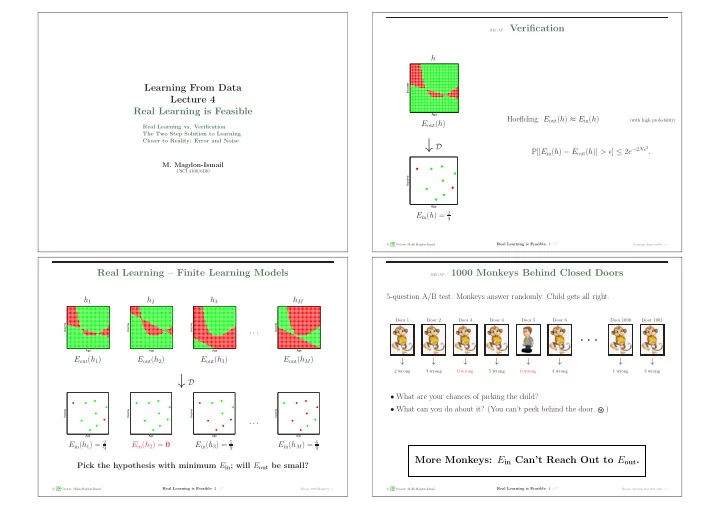

CSCI 4100/6100

Recap: Verification

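Figure: a single fixed hypothesis h on the (Age, Income) plane, with out-of-sample error Eout(h). On the data set D, h has in-sample error Ein(h) = 2/9.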

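Hoeffding: Eout(h) ≈ Ein(h) (with high probability), where N is the number of data points in D:

$$\mathbb{P}\left[\,|E_{\text{in}}(h) - E_{\text{out}}(h)| > \epsilon\,\right] \le 2e^{-2N\epsilon^2}.$$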
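As a hedged illustration of what the Hoeffding bound does and does not promise, the sketch below computes the right-hand side 2e^{-2Nε²} and compares it with a Monte Carlo estimate of the left-hand side for one fixed h. The Bernoulli error model (each point misclassified independently with probability Eout(h)), the value Eout(h) = 0.3, and the function names are assumptions made for illustration.

```python
import math
import random


def hoeffding_bound(n: int, eps: float) -> float:
    """Right-hand side of Hoeffding's inequality: 2 * exp(-2 * N * eps^2)."""
    return 2.0 * math.exp(-2.0 * n * eps * eps)


def empirical_deviation_prob(e_out: float, n: int, eps: float,
                             trials: int = 20_000, seed: int = 0) -> float:
    """Monte Carlo estimate of P[|Ein(h) - Eout(h)| > eps] for one fixed h.

    Assumption (illustrative model): each of the N data points is
    misclassified by h independently with probability e_out.
    """
    rng = random.Random(seed)
    bad = 0
    for _ in range(trials):
        # Draw a fresh data set of size n and count h's mistakes on it.
        mistakes = sum(rng.random() < e_out for _ in range(n))
        e_in = mistakes / n
        if abs(e_in - e_out) > eps:
            bad += 1
    return bad / trials


if __name__ == "__main__":
    eps = 0.1
    for n in (9, 100, 1000):
        print(f"N={n:5d}  bound={hoeffding_bound(n, eps):.4f}  "
              f"simulated={empirical_deviation_prob(0.3, n, eps, trials=2000):.4f}")
```

For N = 9 (the size of D on this slide, since Ein(h) = 2/9) and ε = 0.1, the bound exceeds 1 and so says nothing; at N = 100 it is about 0.27, and the simulated probability sits well below it.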

© AML Creator: Malik Magdon-Ismail


Real Learning – Finite Learning Models

Figure: M hypotheses h1, h2, h3, · · ·, hM on the (Age, Income) plane, each with its own out-of-sample error Eout(h1), Eout(h2), Eout(h3), · · ·, Eout(hM). On the same data set D, their in-sample errors are Ein(h1) = 2/9, Ein(h2) = 0, Ein(h3) = 5/9, · · ·, Ein(hM) = 6/9.

Pick the hypothesis with minimum Ein; will Eout be small?
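A small simulation suggests why the answer is not automatically yes. This is a sketch under a hypothetical setup: every one of the M hypotheses has the same true error Eout = 0.5 (no better than guessing), and each errs on each of the N = 9 points independently. The function `min_ein_over_m` is a name introduced here for illustration; it returns the Ein of the hypothesis picked by minimizing Ein.

```python
import random


def min_ein_over_m(m: int, n: int, e_out: float = 0.5, seed: int = 1) -> float:
    """Ein of the hypothesis chosen by minimizing Ein over m hypotheses.

    Hypothetical setup: every hypothesis has the same true Eout = e_out,
    and each one errs on each of the n data points independently.
    """
    rng = random.Random(seed)
    best = 1.0
    for _ in range(m):
        # In-sample error of one hypothesis on the shared data set.
        mistakes = sum(rng.random() < e_out for _ in range(n))
        best = min(best, mistakes / n)
    return best


if __name__ == "__main__":
    n = 9  # matches the ninths in the Ein values on this slide
    for m in (1, 10, 100, 1000):
        print(f"M={m:5d}  min Ein = {min_ein_over_m(m, n):.3f}  (every Eout = 0.5)")
```

With a fixed seed, a larger M draws a superset of the same hypotheses, so the reported minimum Ein can only go down as M grows, even though Eout stays at 0.5 for every one of them.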


Recap: 1000 Monkeys Behind Closed Doors

A 5-question A/B test: the monkeys answer at random, and the child gets every question right.

| Door | 1 | 2 | 3 | 4 | 5 | 6 | · · · | 1000 | 1001 |
|------|---|---|---|---|---|---|-------|------|------|
| Result | 2 wrong | 3 wrong | 0 wrong | 5 wrong | 0 wrong | 4 wrong | · · · | 1 wrong | 3 wrong |

- What are your chances of picking the child?

- What can you do about it? (You can’t peek behind the door.)
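For the first question, a short calculation helps. This is a minimal sketch, assuming each monkey answers each A/B question correctly with probability 1/2, independently, and that you pick uniformly at random among the doors with 0 wrong; the function names are illustrative. A monkey is perfect with probability (1/2)^5 = 1/32, so about 1000/32 = 31.25 monkeys are expected to score perfectly alongside the child.

```python
import random


def chance_of_picking_child(monkeys: int = 1000, questions: int = 5) -> float:
    """Exact P(you pick the child) when choosing uniformly among 0-wrong doors.

    Assumptions: each monkey answers each A/B question correctly with
    probability 1/2, independently; the child always gets 0 wrong.
    """
    p = 0.5 ** questions  # chance that one monkey gets every answer right
    n = monkeys
    # K = number of perfect monkeys ~ Binomial(n, p); we need E[1 / (K + 1)].
    # Using C(n, k) / (k + 1) = C(n + 1, k + 1) / (n + 1), the sum collapses to:
    return (1 - (1 - p) ** (n + 1)) / ((n + 1) * p)


def simulate_chance(monkeys: int = 1000, questions: int = 5,
                    trials: int = 500, seed: int = 0) -> float:
    """Monte Carlo check of the same probability."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        perfect = sum(
            all(rng.random() < 0.5 for _ in range(questions))
            for _ in range(monkeys)
        )
        # Given this outcome, a uniform pick among the perfect doors
        # (the perfect monkeys plus the child) finds the child w.p. 1/(perfect + 1).
        total += 1 / (perfect + 1)
    return total / trials


if __name__ == "__main__":
    print("expected perfect monkeys:", 1000 * 0.5 ** 5)
    print("exact P(child):", round(chance_of_picking_child(), 4))
    print("simulated P(child):", round(simulate_chance(), 4))
```

Under these assumptions the chance comes out to about 32/1001 ≈ 0.032: better than the 1/1001 of choosing a door blindly, but you will almost always end up with a monkey.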

More Monkeys: Ein Can’t Reach Out to Eout.
