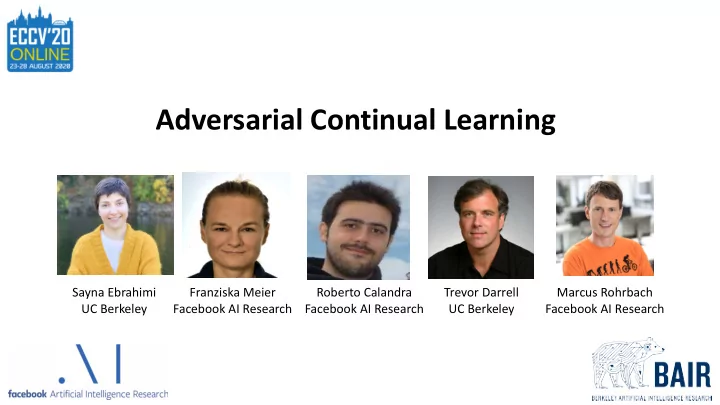

Adversarial Continual Learning

Sayna Ebrahimi UC Berkeley Trevor Darrell UC Berkeley Marcus Rohrbach Facebook AI Research Roberto Calandra Facebook AI Research Franziska Meier Facebook AI Research


What is Continual Learning?

Definition:

[Figure: Task 1, Task 2, Task 3]

Prior methods:

SI: Zenke et al., 2017; MAS: Aljundi, 2018; EWC: Kirkpatrick et al., 2016; UCB: Ebrahimi et al., 2020; LWF: Li & Hoiem, 2016; LFL: Jung et al., 2016; VCL: Nguyen et al., 2017

Experience replay: Robins, 1995; GEM: Lopez-Paz & Ranzato, 2017; A-GEM: Chaudhry et al., 2019

PNN: Rusu et al., 2016; DEN: Yoon et al., 2018; PC: Schwarz et al., 2018

PackNet: Mallya, 2018; Piggyback: Mallya, 2018; HAT: Serrà et al., 2018; iCaRL: Rebuffi et al., 2016; FearNet: Kemker et al., 2017; DGR: Shin et al., 2017

[Figure: architecture diagram, built up over several slides]

Input images & task labels (x, t)

Shared Knowledge (task-invariant)

Private (Task 1), Private (Task 2), Private (Task 3): stored per task

Discriminator (output: Task label)

Target label
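The layout in the diagram (one shared, task-invariant module, one private module per task, and a discriminator that tries to guess the task label from the shared features) could be sketched roughly as below. This is only a toy forward pass under stated assumptions: the class name `ACLSketch`, the single linear layers, the layer sizes, and the concatenation of shared and private features before a per-task head are illustrative choices, not details taken from the slides. No training loop is shown.

```python
import random


def init_weights(rows, cols, rng):
    """Small random weight matrix stored as a list of rows."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def linear(weights, x):
    """Dense layer without bias: one output value per weight row."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


class ACLSketch:
    """Toy shared/private layout; all sizes and names are assumptions."""

    def __init__(self, in_dim, latent_dim, n_classes, n_tasks, seed=0):
        rng = random.Random(seed)
        # One shared (task-invariant) module used by every task.
        self.shared = init_weights(latent_dim, in_dim, rng)
        # One private module and one output head per task, stored per task.
        self.private = {t: init_weights(latent_dim, in_dim, rng) for t in range(n_tasks)}
        self.heads = {t: init_weights(n_classes, 2 * latent_dim, rng) for t in range(n_tasks)}
        # Discriminator: predicts the task label from shared features only.
        self.discriminator = init_weights(n_tasks, latent_dim, rng)

    def forward(self, x, t):
        z_shared = linear(self.shared, x)
        z_private = linear(self.private[t], x)
        # List "+" concatenates the shared and private feature vectors.
        target_logits = linear(self.heads[t], z_shared + z_private)
        task_logits = linear(self.discriminator, z_shared)
        return target_logits, task_logits


model = ACLSketch(in_dim=4, latent_dim=3, n_classes=5, n_tasks=3)
target_logits, task_logits = model.forward([0.1, 0.2, 0.3, 0.4], t=1)
print(len(target_logits), len(task_logits))
```

In an adversarial setup of this kind, the discriminator would be trained to predict the task label while the shared module is trained to make that prediction hard, which pushes the shared features toward being task-invariant.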

Experience Replay
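As a rough illustration of experience replay with a small per-task memory, the sketch below keeps a fixed number of randomly chosen examples from each finished task and replays them later. The class name `PerTaskReplayBuffer`, the `samples_per_task` parameter, and the uniform random selection are assumptions made for this example; the slides do not say how samples are selected.

```python
import random


class PerTaskReplayBuffer:
    """Keeps a few (x, y) examples per task for later rehearsal (hypothetical helper)."""

    def __init__(self, samples_per_task, seed=0):
        self.samples_per_task = samples_per_task
        self.memory = {}  # task_id -> list of (x, y) pairs
        self.rng = random.Random(seed)

    def store(self, task_id, examples):
        """Keep a uniform random subset of the examples from a finished task."""
        examples = list(examples)
        k = min(self.samples_per_task, len(examples))
        self.memory[task_id] = self.rng.sample(examples, k)

    def replay(self):
        """Yield (x, y, task_id) for every stored example of every stored task."""
        for task_id, examples in self.memory.items():
            for x, y in examples:
                yield x, y, task_id


buffer = PerTaskReplayBuffer(samples_per_task=2)
buffer.store(0, [(i, i % 2) for i in range(10)])  # task 0: keep 2 of 10 examples
buffer.store(1, [("a", 0)])                       # task 1: only 1 example available
replayed = list(buffer.replay())
print(len(replayed))
```

While training on a new task, the replayed triples would be mixed into the current task's batches so that earlier tasks keep contributing to the loss.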

(SVHN, CIFAR10, MNIST, FashionMNIST, NotMNIST)

Average Accuracy:

$$\mathrm{ACC} = \frac{1}{n} \sum_{i=1}^{n} R_{i,n}$$

Backward Transfer:

$$\mathrm{BWT} = \frac{1}{n-1} \sum_{i=1}^{n} \left( R_{i,n} - R_{i,i} \right)$$
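The two metrics can be computed from a matrix of accuracies. The sketch below assumes the usual reading of the notation, which the slide does not spell out: `R[i][j]` is the test accuracy on task i after training has finished on task j, and n is the number of tasks. The accuracy values are made up for illustration.

```python
def average_accuracy(R):
    """ACC = (1/n) * sum_i R[i][n-1]: mean accuracy over all tasks after the last task."""
    n = len(R)
    return sum(R[i][n - 1] for i in range(n)) / n


def backward_transfer(R):
    """BWT = (1/(n-1)) * sum_i (R[i][n-1] - R[i][i]).

    The term for the last task is zero, so it does not change the sum.
    Negative values indicate forgetting.
    """
    n = len(R)
    if n < 2:
        raise ValueError("BWT needs at least two tasks")
    return sum(R[i][n - 1] - R[i][i] for i in range(n)) / (n - 1)


# Hypothetical accuracies (%) for three tasks. Entries below the diagonal
# (task not yet trained) are unused by both metrics and set to 0.
R = [
    [90.0, 85.0, 80.0],
    [0.0, 88.0, 86.0],
    [0.0, 0.0, 92.0],
]
acc = average_accuracy(R)
bwt = backward_transfer(R)
print(acc, bwt)  # 86.0 -6.0
```

A BWT of 0.00 therefore means that accuracy on every earlier task is unchanged after the final task has been learned.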

| Method | ACC (%) |
|---|---|
| ACL (Ours) | 62.07 |
| HAT | 59.45 |
| PNN | 58.96 |
| ER-RES | 57.32 |
| A-GEM | 52.43 |
| Ordinary Finetune | 28.76 |

[Figure: Memory (MB) for ACL (Ours), HAT, PNN, ER-RES, A-GEM, and Ordinary Finetune, split into Architecture Memory (MB) and Replay Buffer (MB). Bar labels: 28.8, 52.4, 57.3, 588.0, 123.6, 113.1; 110.10, 110.10, 8.50.]

(SVHN, CIFAR10, MNIST, FashionMNIST, NotMNIST)

| # Classes | Training | Test |
|---|---|---|
| 50 | 212,785 | 48,365 |

| Method | ACC (%) | Architecture Memory (MB) |
|---|---|---|
| ACL (Ours) | 78.55 | 16.5 |
| UCB | 76.34 | 32.8 |
| Ordinary Finetune | 27.32 | 16.5 |

Ablation (X marks a component in use):

| ℒdiff / Discriminator / Replay buffer | ACC (%) | BWT (%) |
|---|---|---|
| X X | 52.07 | |
| X X | 57.66 | |
| X X | | 0.00 |
| X X X | 62.07 | 0.00 |
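The slide names ℒdiff without defining it. One common form of such a difference loss, assumed here purely for illustration, is the squared Frobenius norm of SᵀP, where the rows of S and P are the shared and private feature vectors of the same batch. It is zero when the two sets of features are orthogonal across the batch and grows as they overlap. The function name `difference_loss` and the pure-Python list representation are choices made for this sketch.

```python
def difference_loss(shared, private):
    """Squared Frobenius norm of shared^T @ private.

    shared:  list of shared feature vectors, shape (batch, d_s)
    private: list of private feature vectors, shape (batch, d_p)
    """
    if len(shared) != len(private):
        raise ValueError("shared and private must have the same batch size")
    d_s, d_p = len(shared[0]), len(private[0])
    total = 0.0
    for a in range(d_s):
        for b in range(d_p):
            # Entry (a, b) of shared^T @ private: correlation of two feature columns.
            entry = sum(s[a] * p[b] for s, p in zip(shared, private))
            total += entry * entry
    return total


# Orthogonal feature columns: no overlap, so the loss is 0.
orthogonal = difference_loss([[1.0, 0.0], [1.0, 0.0]], [[0.0, 1.0], [0.0, -1.0]])
# Identical features: shared^T @ private is the 2x2 identity, so the loss is 2.
identical = difference_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
print(orthogonal, identical)  # 0.0 2.0
```

Minimizing a term like this discourages the private modules from re-encoding what the shared module already captures.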

[Figure: visualization of Shared and Private features, with and without the discriminator. Panels: Shared and Private for Task 20 (legend: classes C1 to C5) and Shared and Private for Tasks 1-10 (legend: Task Number, T1 to T10). Annotations: "10 well-separated clusters" and "9-entangled clusters".]
