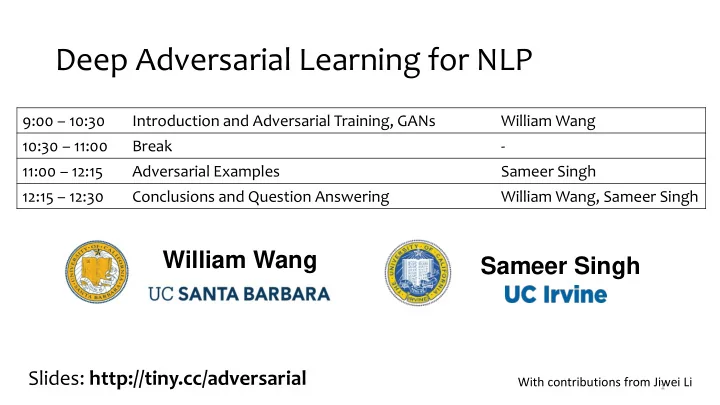

Deep Adversarial Learning for NLP

- 9:00 – 10:30: Introduction and Adversarial Training, GANs (William Wang)
- 10:30 – 11:00: Break
- 11:00 – 12:15: Adversarial Examples (Sameer Singh)
- 12:15 – 12:30: Conclusions and Question Answering (William Wang, Sameer Singh)

William Wang, Sameer Singh

With contributions from Jiwei Li

Slides: http://tiny.cc/adversarial