
About Least-squares type approach to address direct and controllability problems

Arnaud Münch, Université Blaise Pascal - Clermont-Ferrand - France

Chambéry, June 15-18, 2015

Joint work with Pablo Pedregal (Ciudad Real, Spain)

Introduction (the linear heat eq. to fix ideas)

$\omega \subset \Omega \subset \mathbb{R}^N$, $N \geq 1$, $a \in C^1(\Omega, \mathbb{R}_+^*)$, $d \in L^\infty(Q_T)$, $T > 0$, $Q_T = \Omega \times (0,T)$, $q_T = \omega \times (0,T)$, $\Gamma_T := \partial\Omega \times (0,T)$.

$$
\begin{cases}
Ly \equiv y_t - \nabla \cdot (a(x) \nabla y) + d\,y = v\,1_\omega & \text{in } Q_T, \\
y = 0 & \text{on } \Gamma_T, \\
y(\cdot, 0) = y_0 & \text{in } \Omega.
\end{cases}
\tag{1}
$$

$(y_0 \in L^2(\Omega),\ v \in L^2(q_T)) \Longrightarrow y \in C^0([0,T]; L^2(\Omega)) \cap L^2(0,T; H_0^1(\Omega))$.

Null controllability: $\forall\, T > 0$, $\omega \subset \Omega$, $\exists\, v \in L^2(q_T)$ s.t. $y(\cdot, T) = 0$ (Fursikov-Imanuvilov '96, Robbiano-Lebeau '95, etc).

Control of minimal $L^2$-norm:

$$
\begin{cases}
\min\ J(y,v) := \|v\|^2_{L^2(q_T)} \ \text{ over } \mathcal{C}(y_0, T), \\
\mathcal{C}(y_0, T) = \{ (y,v) : v \in L^2(q_T),\ y \text{ solves (1) and satisfies } y(T, \cdot) = 0 \}.
\end{cases}
\tag{2}
$$
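To make the direct problem (1) concrete, here is a minimal sketch in plain Python, not taken from the slides. It assumes the simplest setting $N = 1$, $\Omega = (0,1)$, $a \equiv 1$, $d \equiv 0$, and uses implicit Euler in time with centered differences in space. The names `thomas`, `solve_heat` and `l2_norm` are hypothetical helpers introduced only for this illustration. With $v = 0$ the state decays but does not vanish at $t = T$, which is why a control is needed to reach $y(\cdot, T) = 0$.

```python
import math

def thomas(lower, diag, upper, rhs):
    """Solve a tridiagonal linear system with the Thomas algorithm."""
    n = len(diag)
    c = [0.0] * n
    d = [0.0] * n
    c[0] = upper[0] / diag[0]
    d[0] = rhs[0] / diag[0]
    for i in range(1, n):
        denom = diag[i] - lower[i] * c[i - 1]
        c[i] = upper[i] / denom
        d[i] = (rhs[i] - lower[i] * d[i - 1]) / denom
    x = [0.0] * n
    x[-1] = d[-1]
    for i in range(n - 2, -1, -1):
        x[i] = d[i] - c[i] * x[i + 1]
    return x

def solve_heat(y0, control, T=1.0, nx=100, nt=200, omega=(0.2, 0.8)):
    """Approximate y_t - y_xx = v 1_omega on (0,1), y = 0 on the boundary.

    Assumptions (not from the slides): a = 1, d = 0, implicit Euler in time,
    centered finite differences in space. Returns interior nodes and y(., T).
    """
    h = 1.0 / nx
    dt = T / nt
    xs = [i * h for i in range(1, nx)]  # interior nodes; Dirichlet nodes omitted
    n = len(xs)
    r = dt / h ** 2
    lower = [-r] * n
    diag = [1.0 + 2.0 * r] * n
    upper = [-r] * n
    y = [y0(x) for x in xs]
    for k in range(1, nt + 1):
        t = k * dt
        # The control acts only on the subdomain omega.
        rhs = [
            y[i] + dt * (control(xs[i], t) if omega[0] < xs[i] < omega[1] else 0.0)
            for i in range(n)
        ]
        y = thomas(lower, diag, upper, rhs)
    return xs, y

def l2_norm(y, h):
    """Discrete L^2(0,1) norm of a grid function."""
    return math.sqrt(h * sum(v * v for v in y))

# Uncontrolled run (v = 0) from y0(x) = sin(pi x), T = 1.
xs, y_T = solve_heat(lambda x: math.sin(math.pi * x), lambda x, t: 0.0)
norm_T = l2_norm(y_T, 1.0 / 100)
print(norm_T)
```

For this initial datum the exact uncontrolled solution is $e^{-\pi^2 t} \sin(\pi x)$, so the printed norm should be small but strictly positive; the scheme is only first-order in time, so agreement with $e^{-\pi^2}/\sqrt{2}$ is rough.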


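Minimal $L^2$ norm control using duality [Glowinski-Lions '94]

$$
\inf_{(y,v) \in \mathcal{C}(y_0,T)} J(y,v) = - \inf_{\varphi_T \in \mathcal{H}} J^\star(\varphi_T), \qquad
J^\star(\varphi_T) := \frac{1}{2} \iint_{q_T} \varphi^2 \, dx\,dt + \int_\Omega \varphi(0, \cdot)\, y_0 \, dx,
$$

where $\varphi$ solves the backward system

$$
\begin{cases}
L^\star \varphi \equiv -\varphi_t - \nabla \cdot (a(x) \nabla \varphi) + d\,\varphi = 0 & \text{in } Q_T = (0,T) \times \Omega, \\
\varphi = 0 & \text{on } \Sigma_T = (0,T) \times \partial\Omega, \\
\varphi(T, \cdot) = \varphi_T & \text{in } \Omega.
\end{cases}
$$

$\mathcal{H}$: completion of $\mathcal{D}(\Omega)$ with respect to the norm

$$
\|\varphi_T\|_{\mathcal{H}} = \left( \iint_{q_T} \varphi^2(t,x) \, dx\,dt \right)^{1/2}.
$$

From the observability inequality

$$
C(T, \omega)\, \|\varphi(0, \cdot)\|^2_{L^2(\Omega)} \leq \|\varphi_T\|^2_{\mathcal{H}} \qquad \forall\, \varphi_T \in L^2(\Omega),
$$

$J^\star$ is coercive on $\mathcal{H}$. The control is given by $v = \varphi\, \chi_\omega$ on $Q_T$.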
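The following formal computation, a sketch that is not spelled out on the slide, indicates why the minimizer of $J^\star$ yields a null control. Here $\hat\varphi_T$ denotes the minimizer and $\hat\varphi$ the corresponding backward solution; both names are introduced only for this illustration. Multiplying the state equation by any backward solution $\varphi$ and integrating by parts (the Dirichlet conditions remove the boundary terms) gives the duality identity; the Euler equation of $J^\star$ then forces $y(\cdot, T) = 0$ when $v = \hat\varphi\, 1_\omega$.

$$
\begin{aligned}
&\int_\Omega y(\cdot,T)\, \varphi_T \, dx - \int_\Omega y_0\, \varphi(0,\cdot) \, dx = \iint_{q_T} v\, \varphi \, dx\,dt
&& \text{(duality identity)} \\
&\iint_{q_T} \hat\varphi\, \varphi \, dx\,dt + \int_\Omega \varphi(0,\cdot)\, y_0 \, dx = 0 \quad \forall\, \varphi_T
&& \text{(Euler equation of } J^\star \text{ at } \hat\varphi_T\text{)} \\
&v = \hat\varphi\, 1_\omega \ \Longrightarrow\ \int_\Omega y(\cdot,T)\, \varphi_T \, dx = 0 \quad \forall\, \varphi_T
\ \Longrightarrow\ y(\cdot,T) = 0 .
\end{aligned}
$$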


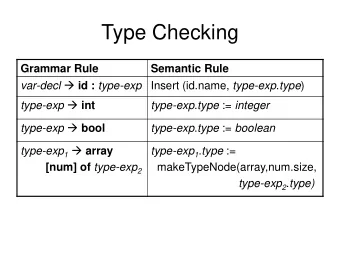

$N = 1$: $L^2(0,1)$-norm of the HUM control with respect to time

Hugeness of $\mathcal{H}$: $H^{-s} \subset \mathcal{H}$ for any $s \geq 0$ $\Longrightarrow$ ill-posedness.

Figure: $y_0(x) = \sin(\pi x)$, $T = 1$, $\omega = (0.2, 0.8)$; $t \mapsto \|v(\cdot, t)\|_{L^2(0,1)}$ in $[0, T]$.

Remedies via Carleman approach and convergence results in [Fernandez-Cara, Münch, 2011-2014]

Least-squares approach

We define the non-empty set

$$
\mathcal{A} = \left\{ (u,f);\ u \in C([0,T]; L^2(\Omega)) \cap L^2(0,T; H_0^1(\Omega));\ u' \in L^2(0,T, H^{-1}(\Omega)),\ u(\cdot,0) = u_0,\ u(\cdot,T) = 0,\ f \in L^2(q_T) \right\}
$$

and find $(u,f) \in \mathcal{A}$ solution of the heat eq.!

For any $(u,f) \in \mathcal{A}$, we define the "corrector" $v = v(u,f) \in H^1(Q_T)$ solution of the $Q_T$-elliptic problem

$$
\begin{cases}
-v_{tt} - \nabla \cdot (a(x) \nabla v) + \big( u_t - \nabla \cdot (a(x) \nabla u) + d\,u - f\,1_\omega \big) = 0, & (x,t) \in Q_T, \\
v_t = 0, & x \in \Omega,\ t \in \{0, T\}, \\
v = 0, & x \in \Sigma_T.
\end{cases}
\tag{3}
$$
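A weak formulation of (3) may help to see how the corrector is defined when $u_t$ only lies in $L^2(0,T; H^{-1}(\Omega))$. The version below is a sketch obtained by formal integration by parts, not a statement from the slides. The test space $W = \{ w \in H^1(Q_T) : w = 0 \text{ on } \Sigma_T \}$ and the duality bracket $\langle \cdot, \cdot \rangle$ between $H^{-1}(\Omega)$ and $H_0^1(\Omega)$ are notation assumed for this illustration. The conditions $v_t = 0$ at $t \in \{0, T\}$ are natural, so they do not appear in the space.

$$
\iint_{Q_T} \big( v_t\, w_t + a(x)\, \nabla v \cdot \nabla w \big) \, dx\,dt
+ \int_0^T \langle u_t, w \rangle \, dt
+ \iint_{Q_T} \big( a(x)\, \nabla u \cdot \nabla w + d\,u\,w - f\,1_\omega\, w \big) \, dx\,dt = 0
\qquad \forall\, w \in W.
$$

The bilinear part on the left is the one whose quadratic form is $|v_t|^2 + a(x)|\nabla v|^2$, so $v$ measures, in an $H^1(Q_T)$-type norm, how far $(u,f)$ is from solving the heat equation.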


Least-squares approach (2)

Theorem. $u$ is a controlled solution of the heat eq. by the control function $f\,1_\omega \in L^2(q_T)$ if and only if $(u,f)$ is a solution of the extremal problem

$$
\inf_{(u,f) \in \mathcal{A}} E(u,f) := \frac{1}{2} \iint_{Q_T} \big( |v_t|^2 + a(x)\, |\nabla v|^2 \big) \, dx\,dt.
\tag{4}
$$

Proof.

($\Longleftarrow$) From the null controllability of the heat eq., the extremal problem is well-posed in the sense that the infimum, equal to zero, is reached by any controlled solution of the heat eq. (the minimizer is not unique).

($\Longrightarrow$) Conversely, we check that any minimizer of $E$ is a solution of the (controlled) heat eq.: We define the vector space

$$
\mathcal{A}_0 = \left\{ (u,f);\ u \in C([0,T]; L^2(\Omega)) \cap L^2(0,T; H_0^1(\Omega));\ u' \in L^2(0,T, H^{-1}(\Omega)),\ u(\cdot,0) = u(\cdot,T) = 0,\ x \in \Omega,\ f \in L^2(q_T) \right\}
$$
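As a complement, here is a short sketch of why a pair $(u,f) \in \mathcal{A}$ with $E(u,f) = 0$ solves the controlled heat equation. It is offered as an illustration and is not necessarily the argument the slides go on to give through $\mathcal{A}_0$. It uses only $a > 0$ and the boundary condition $v = 0$ on $\Sigma_T$ of the corrector problem.

$$
\begin{aligned}
E(u,f) = 0
&\ \Longrightarrow\ v_t = 0 \ \text{ and } \ \nabla v = 0 \ \text{ a.e. in } Q_T \\
&\ \Longrightarrow\ v \equiv 0 \ \text{ in } Q_T \quad (\text{since } v = 0 \text{ on } \Sigma_T) \\
&\ \Longrightarrow\ u_t - \nabla \cdot (a(x) \nabla u) + d\,u - f\,1_\omega = 0 \ \text{ in } Q_T .
\end{aligned}
$$

The conditions $u(\cdot,0) = u_0$ and $u(\cdot,T) = 0$ are already built into $\mathcal{A}$, so such a $u$ is a solution driven to rest at time $T$ by the control $f\,1_\omega$.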

