7. Sorting I

Simple Sorting


7.1 Simple Sorting

Selection Sort, Insertion Sort, Bubblesort [Ottmann/Widmayer, Ch. 2.1; Cormen et al., Ch. 2.1, 2.2, Exercise 2.2-2, Problem 2-2]


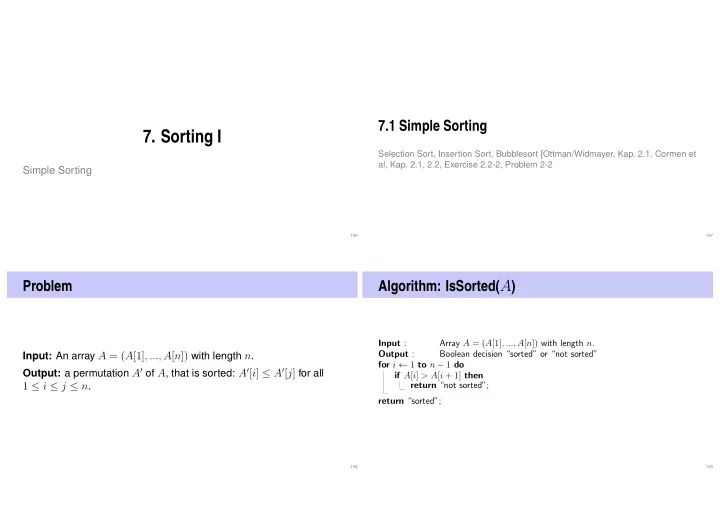

Problem

Input: An array A = (A[1], ..., A[n]) of length n.

Output: A permutation A′ of A that is sorted: A′[i] ≤ A′[j] for all 1 ≤ i ≤ j ≤ n.
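The output condition has two parts, permutation and sortedness, and each can be checked on its own. The following is a minimal Python sketch under a few assumptions that are not part of the slides: lists are 0-based, elements are hashable and comparable, and the helper name `is_valid_sort_output` is hypothetical.

```python
from collections import Counter


def is_valid_sort_output(a, a_prime):
    """Return True if a_prime is a sorted permutation of a (hypothetical helper)."""
    # Permutation: both lists hold the same elements with the same multiplicities.
    same_elements = Counter(a) == Counter(a_prime)
    # Sortedness: comparing adjacent pairs is enough, because <= is transitive.
    ordered = all(a_prime[i] <= a_prime[i + 1] for i in range(len(a_prime) - 1))
    return same_elements and ordered
```

Checking only adjacent pairs is equivalent to the condition for all 1 ≤ i ≤ j ≤ n, since A′[i] ≤ A′[i + 1] ≤ ... ≤ A′[j] follows by transitivity.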


Algorithm: IsSorted(A)

Input: Array A = (A[1], ..., A[n]) of length n.

Output: Boolean decision “sorted” or “not sorted”

    for i ← 1 to n − 1 do
        if A[i] > A[i + 1] then
            return “not sorted”;
    return “sorted”;
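The IsSorted pseudocode translates almost line by line into Python. This is a sketch, not the lecture's reference implementation: it assumes a 0-based list, so the loop runs over indices 0 to n − 2 instead of 1 to n − 1, and it returns a Boolean in place of the strings “sorted” and “not sorted”.

```python
def is_sorted(a):
    """Return True if the list a is in non-decreasing order."""
    # Compare each element with its right neighbour: at most n - 1 comparisons.
    for i in range(len(a) - 1):
        if a[i] > a[i + 1]:
            return False  # found an adjacent pair in the wrong order
    return True  # no violation found (also covers empty and one-element lists)
```

For example, `is_sorted([1, 2, 2, 5])` yields `True`, while `is_sorted([1, 3, 2])` stops at the pair (3, 2) and yields `False`.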
