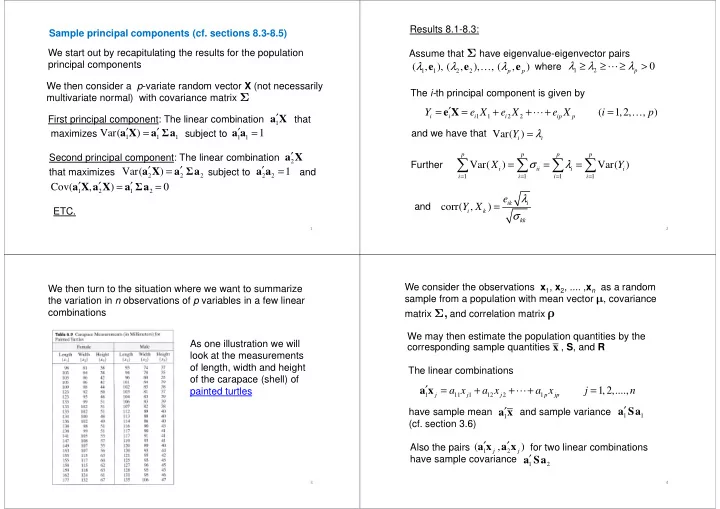

Sample principal components (cf. sections 8.3-8.5)

We then consider a p-variate random vector $\mathbf{X}$ (not necessarily multivariate normal) with covariance matrix $\boldsymbol{\Sigma}$. We start out by recapitulating the results for the population principal components.

First principal component: the linear combination $\mathbf{a}_1'\mathbf{X}$ that maximizes $\mathrm{Var}(\mathbf{a}_1'\mathbf{X}) = \mathbf{a}_1'\boldsymbol{\Sigma}\mathbf{a}_1$ subject to $\mathbf{a}_1'\mathbf{a}_1 = 1$.

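Second principal component: the linear combination $\mathbf{a}_2'\mathbf{X}$ that maximizes $\mathrm{Var}(\mathbf{a}_2'\mathbf{X}) = \mathbf{a}_2'\boldsymbol{\Sigma}\mathbf{a}_2$ subject to $\mathbf{a}_2'\mathbf{a}_2 = 1$ and $\mathrm{Cov}(\mathbf{a}_1'\mathbf{X}, \mathbf{a}_2'\mathbf{X}) = \mathbf{a}_1'\boldsymbol{\Sigma}\mathbf{a}_2 = 0$.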

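And so on for the remaining components.

Assume that $\boldsymbol{\Sigma}$ has eigenvalue-eigenvector pairs $(\lambda_1, \mathbf{e}_1), (\lambda_2, \mathbf{e}_2), \ldots, (\lambda_p, \mathbf{e}_p)$, where $\lambda_1 \ge \lambda_2 \ge \cdots \ge \lambda_p > 0$.

Results 8.1-8.3: The i-th principal component is given by

$$Y_i = \mathbf{e}_i'\mathbf{X} = e_{i1}X_1 + e_{i2}X_2 + \cdots + e_{ip}X_p \qquad (i = 1, 2, \ldots, p)$$

and we have that $\mathrm{Var}(Y_i) = \lambda_i$.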
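The result above can be checked numerically. The following is a minimal sketch, assuming NumPy is available; the matrix `Sigma` is an illustrative covariance matrix chosen for this example and is not taken from the text. It computes the eigenvalue-eigenvector pairs, orders them so that the eigenvalues are decreasing, and confirms that the principal components are uncorrelated with variances equal to the eigenvalues.

```python
import numpy as np

# Illustrative covariance matrix (an assumption, not taken from the text).
Sigma = np.array([[1.0, -2.0, 0.0],
                  [-2.0, 5.0, 0.0],
                  [0.0, 0.0, 2.0]])

# eigh is intended for symmetric matrices and returns eigenvalues in
# ascending order, so we reorder to get lambda_1 >= ... >= lambda_p.
eigvals, eigvecs = np.linalg.eigh(Sigma)
order = np.argsort(eigvals)[::-1]
lam = eigvals[order]
E = eigvecs[:, order]  # column i holds the eigenvector e_(i+1)

# The covariance matrix of Y = E'X is E' Sigma E.
# It should be diagonal with the eigenvalues on the diagonal.
cov_Y = E.T @ Sigma @ E

print("eigenvalues:", np.round(lam, 3))
print("Cov(Y):")
print(np.round(cov_Y, 3))
```

The signs of the eigenvectors returned by `np.linalg.eigh` are arbitrary, so the coefficients of a principal component may come out with flipped sign; the variances are unaffected.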

Further

$$\sum_{i=1}^{p} \mathrm{Var}(X_i) = \sum_{i=1}^{p} \sigma_{ii} = \sum_{i=1}^{p} \lambda_i = \sum_{i=1}^{p} \mathrm{Var}(Y_i)$$

and

$$\mathrm{corr}(Y_i, X_k) = \frac{e_{ik}\sqrt{\lambda_i}}{\sqrt{\sigma_{kk}}}$$
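Both identities can be verified with a short computation. This is a sketch under the same assumptions as before: NumPy is available and `Sigma` is an illustrative covariance matrix, not one from the text. The correlation formula is compared against a direct calculation based on $\mathrm{Cov}(Y_i, X_k) = \mathbf{e}_i'\boldsymbol{\Sigma}\mathbf{u}_k$, where $\mathbf{u}_k$ is the k-th unit vector.

```python
import numpy as np

# Illustrative covariance matrix (an assumption, not taken from the text).
Sigma = np.array([[1.0, -2.0, 0.0],
                  [-2.0, 5.0, 0.0],
                  [0.0, 0.0, 2.0]])

# eigh returns ascending eigenvalues; reverse to get decreasing order.
eigvals, eigvecs = np.linalg.eigh(Sigma)
lam = eigvals[::-1]
E = eigvecs[:, ::-1]  # column i holds the eigenvector e_(i+1)

# Total variance: sum of sigma_ii equals sum of lambda_i.
total_var_X = np.trace(Sigma)
total_var_Y = lam.sum()

# Formula: corr(Y_i, X_k) = e_ik * sqrt(lambda_i) / sqrt(sigma_kk).
# E.T[i, k] is e_ik, the k-th coefficient of the i-th eigenvector.
sigma_kk = np.diag(Sigma)
corr_formula = E.T * np.sqrt(lam)[:, None] / np.sqrt(sigma_kk)[None, :]

# Direct calculation: Cov(Y_i, X_k) is entry (i, k) of E' Sigma,
# divided by the two standard deviations.
cov_YX = E.T @ Sigma
corr_direct = cov_YX / np.sqrt(np.outer(lam, sigma_kk))

print("total variance of X:", total_var_X)
print("total variance of Y:", total_var_Y)
print("corr(Y_i, X_k), rows i, columns k:")
print(np.round(corr_formula, 3))
```

The ratio `lam / lam.sum()` then gives the proportion of the total variance attributable to each principal component.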

We then turn to the situation where we want to summarize the variation in n observations of p variables in a few linear combinations. As one illustration we will look at the measurements of length, width and height of the carapace (shell) of painted turtles.

We consider the observations $\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_n$ as a random sample from a population with mean vector $\boldsymbol{\mu}$, covariance matrix $\boldsymbol{\Sigma}$, and correlation matrix $\boldsymbol{\rho}$. We may then estimate the population quantities by the corresponding sample quantities $\bar{\mathbf{x}}$, $\mathbf{S}$, and $\mathbf{R}$.

The linear combinations

$$\mathbf{a}_1'\mathbf{x}_j = a_{11}x_{j1} + a_{12}x_{j2} + \cdots + a_{1p}x_{jp}, \qquad j = 1, 2, \ldots, n$$

have sample mean $\mathbf{a}_1'\bar{\mathbf{x}}$ and sample variance $\mathbf{a}_1'\mathbf{S}\mathbf{a}_1$ (cf. section 3.6). Also the pairs $(\mathbf{a}_1'\mathbf{x}_j, \mathbf{a}_2'\mathbf{x}_j)$ for two linear combinations have sample covariance $\mathbf{a}_1'\mathbf{S}\mathbf{a}_2$.
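The sample identities for linear combinations can be illustrated as follows. This is a minimal sketch, assuming NumPy is available. The data matrix `X` is synthetic (randomly generated, not the turtle measurements), and the coefficient vectors `a1` and `a2` are arbitrary choices made for this example. The sample covariance matrix is computed with divisor n - 1; the identities hold equally with divisor n as long as the same divisor is used throughout.

```python
import numpy as np

# Synthetic data for illustration only (not the turtle data from the text).
rng = np.random.default_rng(0)
n, p = 50, 3
X = rng.normal(size=(n, p))  # row j is the observation x_j'

xbar = X.mean(axis=0)            # sample mean vector
S = np.cov(X, rowvar=False)      # sample covariance matrix, divisor n - 1

# Arbitrary coefficient vectors (hypothetical, chosen for the example).
a1 = np.array([1.0, 0.0, -1.0])
a2 = np.array([0.5, 0.5, 0.0])

# Values of the two linear combinations for each observation.
z1 = X @ a1
z2 = X @ a2

# Sample mean, variance and covariance computed directly from z1 and z2.
mean_z1 = z1.mean()
var_z1 = z1.var(ddof=1)
cov_z1_z2 = np.cov(z1, z2)[0, 1]

# The same quantities from xbar and S.
print(mean_z1, a1 @ xbar)
print(var_z1, a1 @ S @ a1)
print(cov_z1_z2, a1 @ S @ a2)
```

Each printed pair should agree up to floating-point rounding.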