Multivariate Data Multivariate Normal Distribution Multivariate Classification Multivariate Regression

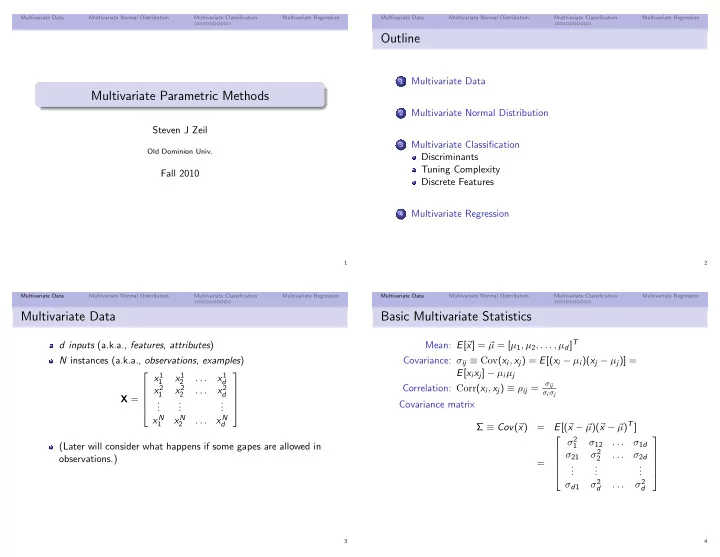

Multivariate Parametric Methods

Steven J Zeil

Old Dominion Univ.

Fall 2010


Outline

1. Multivariate Data
2. Multivariate Normal Distribution
3. Multivariate Classification
   Discriminants
   Tuning Complexity
   Discrete Features
4. Multivariate Regression


Multivariate Data

d inputs (a.k.a., features, attributes)
N instances (a.k.a., observations, examples)

$$X = \begin{bmatrix} x^1_1 & x^1_2 & \cdots & x^1_d \\ x^2_1 & x^2_2 & \cdots & x^2_d \\ \vdots & \vdots & \ddots & \vdots \\ x^N_1 & x^N_2 & \cdots & x^N_d \end{bmatrix}$$

(Later we will consider what happens if some gaps are allowed in observations.)
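As a minimal sketch with a made-up 4×3 data set, the data matrix can be stored in Python as a list of N rows, one per instance x^t, each holding d feature values. The helper names `shape`, `feature`, and `instance` are hypothetical, and indices are 0-based in code where the slides count from 1.

```python
# Hypothetical toy data: N = 4 instances (rows), d = 3 features (columns).
# Row t holds instance x^t = [x^t_1, ..., x^t_d].
X = [
    [5.1, 3.5, 1.4],
    [4.9, 3.0, 1.4],
    [6.2, 2.9, 4.3],
    [5.9, 3.0, 5.1],
]

def shape(X):
    """Return (N, d) for a data matrix stored as a list of equal-length rows."""
    N = len(X)
    d = len(X[0]) if N else 0
    if any(len(row) != d for row in X):
        raise ValueError("every instance must have the same number of features")
    return N, d

def feature(X, j):
    """Return column j (0-based): the values of feature x_j across all N instances."""
    return [row[j] for row in X]

def instance(X, t):
    """Return row t (0-based): the d-dimensional observation x^t."""
    return list(X[t])

N, d = shape(X)
print(N, d)            # 4 3
print(feature(X, 1))   # [3.5, 3.0, 2.9, 3.0]
```

A row-per-instance layout keeps each observation together; reading one feature means walking down a column, as `feature` does.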


Basic Multivariate Statistics

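Mean: $E[\mathbf{x}] = \boldsymbol{\mu} = [\mu_1, \mu_2, \ldots, \mu_d]^T$

Covariance: $\sigma_{ij} \equiv \mathrm{Cov}(x_i, x_j) = E[(x_i - \mu_i)(x_j - \mu_j)] = E[x_i x_j] - \mu_i \mu_j$

Correlation: $\mathrm{Corr}(x_i, x_j) \equiv \rho_{ij} = \dfrac{\sigma_{ij}}{\sigma_i \sigma_j}$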
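The definitions above are expectations over the distribution of x. A minimal sketch, assuming each expectation is replaced by an average over the N rows of a data matrix (the divide-by-N plug-in estimate; an unbiased covariance estimate would divide by N - 1 instead), shows that the two forms of σ_ij agree and how ρ_ij follows from them. The function names and toy data are hypothetical.

```python
import math

def mean_vector(X):
    """Plug-in estimate of mu = E[x]: the average of each feature column."""
    N, d = len(X), len(X[0])
    return [sum(row[j] for row in X) / N for j in range(d)]

def cov_centered(X, i, j):
    """sigma_ij as the average of (x_i - mu_i)(x_j - mu_j) over all instances."""
    mu = mean_vector(X)
    return sum((row[i] - mu[i]) * (row[j] - mu[j]) for row in X) / len(X)

def cov_raw_moment(X, i, j):
    """sigma_ij as E[x_i x_j] - mu_i mu_j; equals cov_centered up to rounding."""
    mu = mean_vector(X)
    e_xixj = sum(row[i] * row[j] for row in X) / len(X)
    return e_xixj - mu[i] * mu[j]

def corr(X, i, j):
    """rho_ij = sigma_ij / (sigma_i sigma_j), using sigma_i^2 = sigma_ii."""
    return cov_centered(X, i, j) / math.sqrt(cov_centered(X, i, i) * cov_centered(X, j, j))

X = [[1.0, 2.0], [2.0, 4.0], [3.0, 7.0]]
print(cov_centered(X, 0, 1), cov_raw_moment(X, 0, 1))  # both about 1.6667 (= 5/3)
print(corr(X, 0, 1))                                   # about 0.993: the features rise together
```

The centered form is usually preferred numerically: $E[x_i x_j] - \mu_i \mu_j$ subtracts two nearly equal numbers when the means are large relative to the spread, which loses precision.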

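Covariance matrix:

$$\Sigma \equiv \mathrm{Cov}(\mathbf{x}) = E[(\mathbf{x} - \boldsymbol{\mu})(\mathbf{x} - \boldsymbol{\mu})^T] = \begin{bmatrix} \sigma_1^2 & \sigma_{12} & \cdots & \sigma_{1d} \\ \sigma_{21} & \sigma_2^2 & \cdots & \sigma_{2d} \\ \vdots & \vdots & \ddots & \vdots \\ \sigma_{d1} & \sigma_{d2} & \cdots & \sigma_d^2 \end{bmatrix}$$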
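Assuming again the divide-by-N plug-in estimate, Σ can be estimated by averaging the outer products $(\mathbf{x}^t - \boldsymbol{\mu})(\mathbf{x}^t - \boldsymbol{\mu})^T$ over all instances. The sketch below (hypothetical name `covariance_matrix`, pure Python so it runs without NumPy) makes the structure of Σ visible: the diagonal holds the variances $\sigma_i^2$, and the matrix is symmetric because $\sigma_{ij} = \sigma_{ji}$.

```python
def covariance_matrix(X):
    """Plug-in estimate of Sigma = E[(x - mu)(x - mu)^T] for a list of N rows of d features."""
    N, d = len(X), len(X[0])
    mu = [sum(row[j] for row in X) / N for j in range(d)]   # sample mean vector
    S = [[0.0] * d for _ in range(d)]
    for row in X:
        c = [row[j] - mu[j] for j in range(d)]              # centered instance x^t - mu
        for i in range(d):
            for j in range(d):
                S[i][j] += c[i] * c[j]                      # add outer product (x^t - mu)(x^t - mu)^T
    return [[S[i][j] / N for j in range(d)] for i in range(d)]  # average over the N instances

X = [[1.0, 2.0], [2.0, 4.0], [3.0, 7.0]]
for r in covariance_matrix(X):
    print([round(v, 4) for v in r])
# [0.6667, 1.6667]
# [1.6667, 4.2222]
```

With NumPy, `np.cov(X, rowvar=False, bias=True)` should produce the same matrix: `rowvar=False` treats rows as observations, and `bias=True` divides by N rather than N - 1.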
