1

CS240 Computer Organization

Department of Computer Science Wellesley College

Exploiting memory hierarchy

Cache basics

Cache basics 22-2

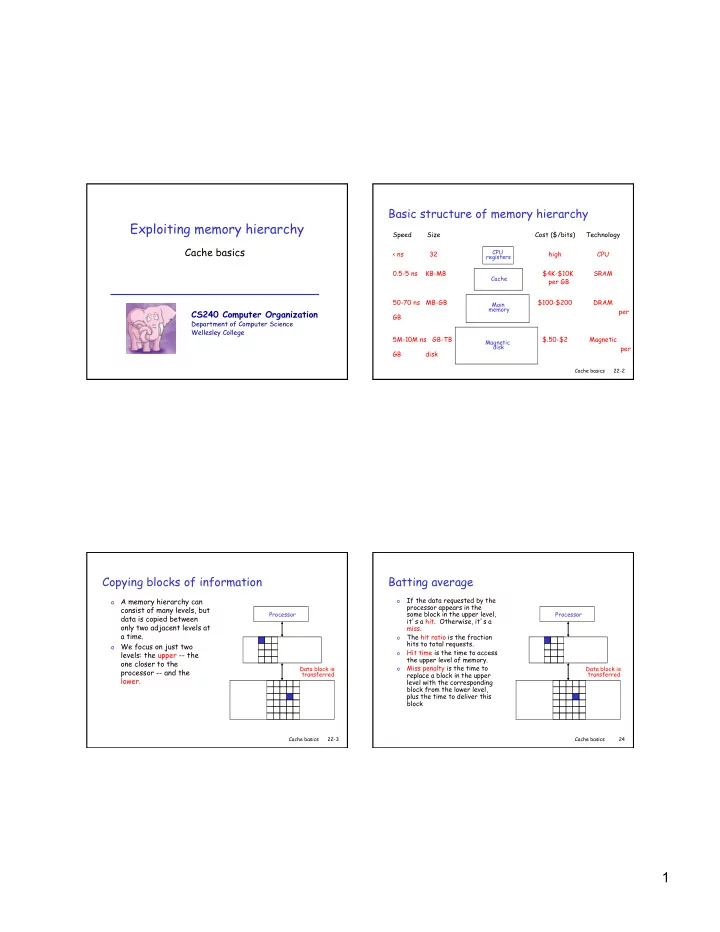

< ns 32 high CPU

Basic structure of memory hierarchy

CPU registers Cache Main memory Magnetic disk

Speed Size Cost ($/bits) Technology 0.5-5 ns KB-MB $4K-$10K SRAM per GB 50-70 ns MB-GB $100-$200 DRAM per GB 5M-10M ns GB-TB $.50-$2 Magnetic per GB disk

Cache basics 22-3

Copying blocks of information

- A memory hierarchy can

consist of many levels, but data is copied between

- nly two adjacent levels at

a time.

- We focus on just two

levels: the upper -- the

- ne closer to the

processor -- and the lower.

Processor Data block is transferred

Cache basics 24

Batting average

- If the data requested by the

processor appears in the some block in the upper level, it’s a hit. Otherwise, it’s a miss.

- The hit ratio is the fraction

hits to total requests.

- Hit time is the time to access

the upper level of memory.

- Miss penalty is the time to

replace a block in the upper level with the corresponding block from the lower level, plus the time to deliver this block

Processor Data block is transferred