Introduction to Artificial Intelligence

V22.0472-001 Fall 2009 Lecture 4: Constraint Satisfaction Problems

Rob Fergus – Dept of Computer Science, Courant Institute, NYU

Many slides from Dan Klein, Stuart Russell or Andrew Moore

Announcements

- Please ask for help on assignment

Today

- Search Conclusion

- Constraint Satisfaction Problems

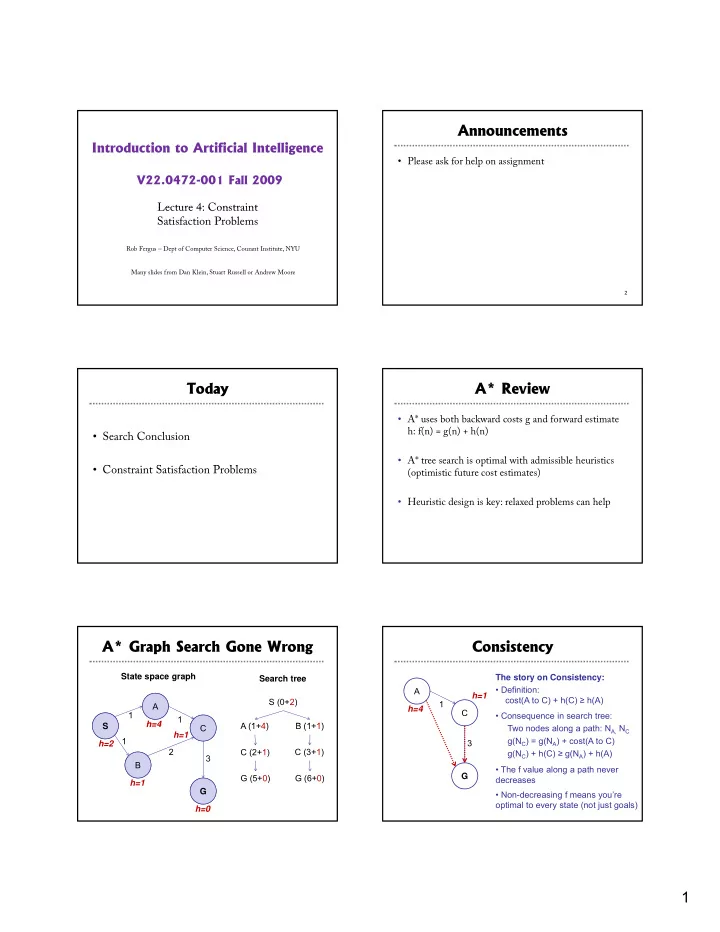

A* Review

- A* uses both backward costs g and forward estimates h: f(n) = g(n) + h(n)

- A* tree search is optimal with admissible heuristics (optimistic future cost estimates)

- Heuristic design is key: relaxed problems can help
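The review points above can be illustrated with a minimal sketch of A* tree search, in which the fringe is ordered by f(n) = g(n) + h(n). The function name `astar_tree_search`, the toy graph `TOY_EDGES`, and the heuristic table `TOY_H` are hypothetical and are not taken from the slides; the heuristic values were chosen to be admissible for this toy graph.

```python
import heapq
from itertools import count

def astar_tree_search(start, goal, edges, h):
    """A* tree search: always expand the fringe node with the lowest f = g + h.

    edges maps a state to a list of (next_state, step_cost) pairs.
    h maps a state to its heuristic estimate of remaining cost.
    Returns (path, cost) for the first goal node popped, or None.
    """
    tie = count()  # tie-breaker so the heap never has to compare paths
    fringe = [(h[start], next(tie), 0, [start])]
    while fringe:
        _, _, g, path = heapq.heappop(fringe)
        state = path[-1]
        if state == goal:
            return path, g
        for nxt, step in edges[state]:
            g2 = g + step  # backward cost of the child
            heapq.heappush(fringe, (g2 + h[nxt], next(tie), g2, path + [nxt]))
    return None

# Hypothetical toy problem (not from the slides).
TOY_EDGES = {
    "S": [("A", 1), ("B", 4)],
    "A": [("B", 2), ("G", 6)],
    "B": [("G", 1)],
    "G": [],
}
TOY_H = {"S": 3, "A": 3, "B": 1, "G": 0}  # never overestimates the true cost

result = astar_tree_search("S", "G", TOY_EDGES, TOY_H)
print(result)
```

Because this is tree search, the same state may be expanded more than once; the sketch only terminates on its own here because the toy graph has no cycles.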

A* Graph Search Gone Wrong

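[Figure: state space graph and search tree. Graph edges with costs: S→A (1), S→B (1), A→C (1), B→C (2), C→G (3). Heuristic values: h(S)=2, h(A)=4, h(B)=1, h(C)=1, h(G)=0. Search tree nodes, labeled (g+h): S (0+2); A (1+4), B (1+1); below A: C (2+1), G (5+0); below B: C (3+1), G (6+0).]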
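The failure in this example can be reproduced with a short sketch. The edge costs and heuristic values below are an assumption, read off the figure's labels (for example, C appears in the search tree with g=2 below A and g=3 below B). The function `astar` and its `graph_search` flag are hypothetical names; with the flag set, a state is closed the first time it is expanded and never expanded again.

```python
import heapq
from itertools import count

# Assumed reconstruction of the slide's example graph and heuristic.
EDGES = {
    "S": [("A", 1), ("B", 1)],
    "A": [("C", 1)],
    "B": [("C", 2)],
    "C": [("G", 3)],
    "G": [],
}
H = {"S": 2, "A": 4, "B": 1, "C": 1, "G": 0}

def astar(start, goal, edges, h, graph_search):
    """A* ordered by f = g + h; optionally skip states already expanded."""
    tie = count()  # tie-breaker so the heap never compares paths
    fringe = [(h[start], next(tie), 0, [start])]
    closed = set()
    while fringe:
        _, _, g, path = heapq.heappop(fringe)
        state = path[-1]
        if state == goal:
            return path, g
        if graph_search:
            if state in closed:
                continue  # already expanded via some earlier path
            closed.add(state)
        for nxt, step in edges[state]:
            heapq.heappush(
                fringe, (g + step + h[nxt], next(tie), g + step, path + [nxt])
            )
    return None

tree_result = astar("S", "G", EDGES, H, graph_search=False)
graph_result = astar("S", "G", EDGES, H, graph_search=True)
print(tree_result)   # (['S', 'A', 'C', 'G'], 5)
print(graph_result)  # (['S', 'B', 'C', 'G'], 6)
```

Under these assumed values the heuristic is admissible, yet graph search closes C via B (g=3) before the cheaper route through A (g=2) is discovered, so it returns cost 6 instead of the optimal 5.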

Consistency

[Figure: arc from A (h=4) to C (h=1) with cost 1]

The story on consistency:

- Definition: cost(A to C) + h(C) ≥ h(A)

- Consequence in search tree: for two nodes along a path, N_A and N_C:
  - g(N_C) = g(N_A) + cost(A to C)
  - g(N_C) + h(C) ≥ g(N_A) + h(A)

- The f value along a path never decreases

- Non-decreasing f means you're optimal to every state (not just goals)
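The definition can be checked mechanically, arc by arc. The sketch below is illustrative only: `inconsistent_edges` is a hypothetical helper, and the graph and heuristic values are an assumed reading of the earlier example figure (A with h=4, C with h=1, arc cost 1).

```python
def inconsistent_edges(edges, h):
    """Return every arc (a, c, cost) that violates cost(a to c) + h(c) >= h(a)."""
    return [
        (a, c, cost)
        for a, successors in edges.items()
        for c, cost in successors
        if cost + h[c] < h[a]
    ]

# Assumed values from the example figure.
EDGES = {
    "S": [("A", 1), ("B", 1)],
    "A": [("C", 1)],
    "B": [("C", 2)],
    "C": [("G", 3)],
    "G": [],
}
H = {"S": 2, "A": 4, "B": 1, "C": 1, "G": 0}

violations = inconsistent_edges(EDGES, H)
print(violations)  # [('A', 'C', 1)] because 1 + h(C) = 2 < h(A) = 4

# Lowering h(A) to 2 removes the violation: 1 + 1 >= 2.
H_FIXED = dict(H, A=2)
print(inconsistent_edges(EDGES, H_FIXED))  # []
```

With the violation on the arc from A to C, f drops from 5 at A to 3 at C along the path, which is exactly the decrease that consistency rules out.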