
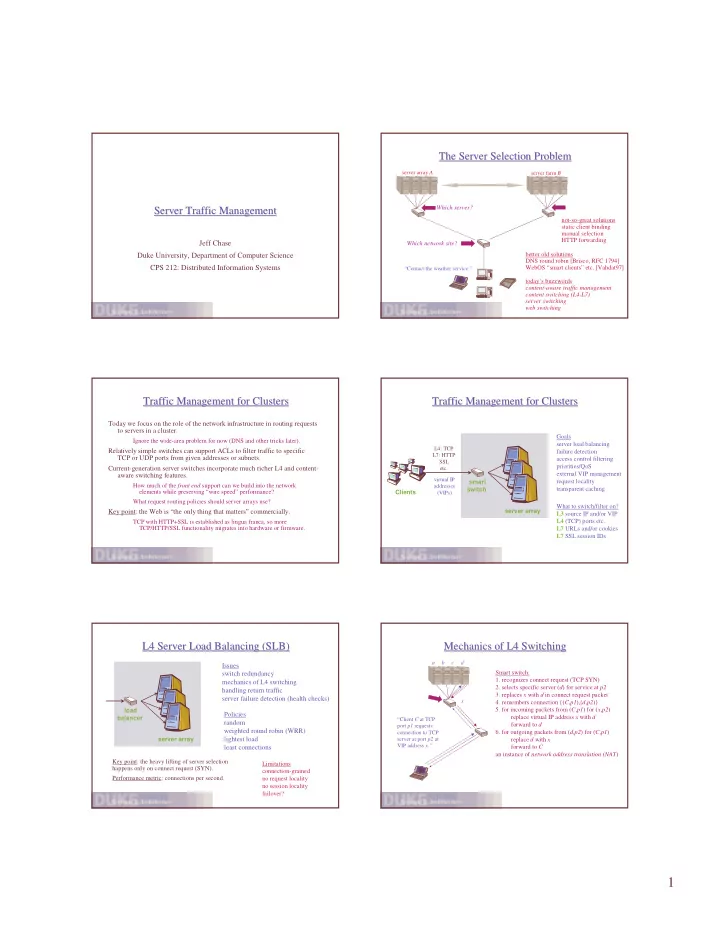

Server Traffic Management

Jeff Chase, Duke University, Department of Computer Science

CPS 212: Distributed Information Systems

The Server Selection Problem

Which network site? Which server?

[Figure: a client request (“Contact the weather service.”) that could be served by server array A or server farm B.]

Better solutions:
- old solutions: DNS round robin [Brisco, RFC 1794], WebOS “smart clients”, etc. [Vahdat97]
- today’s buzzwords: content-aware traffic management, content switching (L4-L7), server switching, web switching

Not-so-great solutions:
- static client binding
- manual selection
- HTTP forwarding
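DNS round robin can be sketched as a name server that returns the same set of addresses for every query but rotates their order, so clients that use the first address spread across the servers. This is a minimal sketch under stated assumptions: the `RoundRobinResolver` class and the 192.0.2.x addresses are hypothetical, and it ignores DNS caching, which in practice hands one ordering to many clients and makes the spread coarser than this loop suggests.

```python
from collections import deque


class RoundRobinResolver:
    """Hypothetical round-robin name server for a single service name."""

    def __init__(self, addresses):
        self._addrs = deque(addresses)

    def resolve(self, name):
        # The name is ignored here: one service name maps to one address pool.
        answer = list(self._addrs)
        # Rotate left so the next query starts with the following server.
        self._addrs.rotate(-1)
        return answer


resolver = RoundRobinResolver(["192.0.2.10", "192.0.2.11", "192.0.2.12"])
for _ in range(4):
    print(resolver.resolve("www.example.com")[0])
# 192.0.2.10, 192.0.2.11, 192.0.2.12, 192.0.2.10
```

Every answer contains all servers; only the order changes, so a client that tries the next address when the first fails still has somewhere to go.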

Traffic Management for Clusters

Today we focus on the role of the network infrastructure in routing requests to servers in a cluster.

Ignore the wide-area problem for now (DNS and other tricks later).

Relatively simple switches can support ACLs to filter traffic to specific TCP or UDP ports from given addresses or subnets. Current-generation server switches incorporate much richer L4 and content-aware switching features.
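The kind of ACL a relatively simple switch applies can be sketched as first-match rule evaluation over source subnet, protocol, and destination port. The rule format, the implicit default deny, and the example policy are assumptions for illustration, not any particular switch's configuration language.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class AclRule:
    action: str                      # "permit" or "deny"
    src_net: ipaddress.IPv4Network   # source subnet the rule applies to
    proto: str                       # "tcp" or "udp"
    dst_port: int                    # destination port the rule applies to


def check(rules, src_ip, proto, dst_port, default="deny"):
    """Return the action of the first matching rule, else the default."""
    addr = ipaddress.ip_address(src_ip)
    for rule in rules:
        if addr in rule.src_net and proto == rule.proto and dst_port == rule.dst_port:
            return rule.action
    return default


# Hypothetical policy: only 10.1.0.0/16 may reach TCP port 22;
# any source may reach TCP port 80.
rules = [
    AclRule("permit", ipaddress.ip_network("10.1.0.0/16"), "tcp", 22),
    AclRule("permit", ipaddress.ip_network("0.0.0.0/0"), "tcp", 80),
]

print(check(rules, "10.1.2.3", "tcp", 22))     # permit
print(check(rules, "203.0.113.5", "tcp", 22))  # deny
print(check(rules, "203.0.113.5", "tcp", 80))  # permit
```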

How much of the front end support can we build into the network elements while preserving “wire speed” performance? What request routing policies should server arrays use?

Key point: the Web is “the only thing that matters” commercially.

TCP with HTTP+SSL is established as the lingua franca, so more and more TCP/HTTP/SSL functionality is migrating into hardware or firmware.

Traffic Management for Clusters

[Figure: clients reach a server array through a smart switch that exposes virtual IP addresses (VIPs) and operates at L4 (TCP) and L7 (HTTP, SSL, etc.).]

Goals:
- server load balancing
- failure detection
- access control filtering
- priorities/QoS
- external VIP management
- request locality
- transparent caching

What to switch/filter on?
- L3: source IP and/or VIP
- L4: TCP ports, etc.
- L7: URLs and/or cookies
- L7: SSL session IDs
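A content-aware switch can combine these fields when choosing where to send a request. The sketch below is hypothetical: the pool names, the `server` cookie, and the precedence order (cookie, then URL prefix, then TCP port) are assumptions chosen to show the idea. The L7 fields only become available after the switch has accepted the TCP connection and read the start of the HTTP request, which is part of why L7 switching costs more than L4 switching.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    src_ip: str                  # L3 source address
    vip: str                     # L3 virtual IP the client addressed
    dst_port: int                # L4 destination port
    url: str = ""                # L7: visible only after reading the request
    cookies: dict = field(default_factory=dict)


# Hypothetical routing tables; not taken from any real product.
URL_RULES = [("/images/", "static-pool"), ("/cgi-bin/", "dynamic-pool")]
PORT_RULES = {80: "web-pool", 443: "ssl-pool"}


def choose_pool(req):
    """Pick a destination, preferring L7 fields and falling back to L4."""
    # L7 cookie: pin the client to the server it used before (session locality).
    if "server" in req.cookies:
        return "server:" + req.cookies["server"]
    # L7 URL: route by path prefix (request locality).
    for prefix, pool in URL_RULES:
        if req.url.startswith(prefix):
            return pool
    # L4: route by the destination TCP port on the VIP.
    return PORT_RULES.get(req.dst_port, "default-pool")


print(choose_pool(Request("198.51.100.7", "192.0.2.1", 80, "/images/logo.gif")))  # static-pool
print(choose_pool(Request("198.51.100.7", "192.0.2.1", 80, "/index.html")))       # web-pool
```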

L4 Server Load Balancing (SLB)

[Figure: a load balancer in front of a server array.]

Issues:
- switch redundancy
- mechanics of L4 switching
- handling return traffic
- server failure detection (health checks)
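Server failure detection with health checks can be sketched as periodic probes plus a failure counter per server. This is a minimal sketch, assuming a server leaves the rotation after a threshold of consecutive failed probes and returns after one successful probe; the `HealthChecker` class, the threshold of 3, and the TCP-connect probe `tcp_probe` are illustrative choices, not a specific product's behavior.

```python
import socket


def tcp_probe(server, port=80, timeout=1.0):
    """Hypothetical probe: True if a TCP connection to (server, port) succeeds."""
    try:
        with socket.create_connection((server, port), timeout=timeout):
            return True
    except OSError:
        return False


class HealthChecker:
    """Take a server out of rotation after `fail_threshold` consecutive
    failed probes; restore it on the next successful probe."""

    def __init__(self, servers, probe=tcp_probe, fail_threshold=3):
        self.probe = probe                        # callable(server) -> bool
        self.fail_threshold = fail_threshold
        self.failures = {s: 0 for s in servers}   # consecutive failures

    def run_once(self):
        for server in self.failures:
            if self.probe(server):
                self.failures[server] = 0
            else:
                self.failures[server] += 1

    def healthy(self):
        return [s for s, n in self.failures.items() if n < self.fail_threshold]


# Simulated probe instead of real network I/O: server "b" is down.
down = {"b"}
checker = HealthChecker(["a", "b", "c"], probe=lambda s: s not in down)
for _ in range(3):
    checker.run_once()
print(checker.healthy())  # ['a', 'c']
```

Requiring several consecutive failures keeps a single lost probe from pulling a working server out of the pool, at the cost of slower detection.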

Key point: the heavy lifting of server selection happens only on the connect request (SYN). Performance metric: connections per second.

Policies:
- random
- weighted round robin (WRR)
- lightest load
- least connections
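The selection policies can be sketched as small functions over the server list. This is a hedged illustration: `WeightedRoundRobin` is the naive variant that cycles through a schedule in which each server appears `weight` times, and lightest load would use the same `min` rule as `least_connections`, applied to a reported load metric instead of open-connection counts. All names here are hypothetical.

```python
import itertools
import random


def random_policy(servers, rng=random):
    """Random: every server equally likely, no state kept."""
    return rng.choice(servers)


class WeightedRoundRobin:
    """Naive WRR: a server with weight w gets w of every sum(weights) picks."""

    def __init__(self, weights):
        schedule = [s for s, w in weights.items() for _ in range(w)]
        self._cycle = itertools.cycle(schedule)

    def pick(self):
        return next(self._cycle)


def least_connections(active):
    """Least connections: fewest open connections wins (ties: first listed)."""
    return min(active, key=active.get)


wrr = WeightedRoundRobin({"a": 3, "b": 1})
print([wrr.pick() for _ in range(8)])
# ['a', 'a', 'a', 'b', 'a', 'a', 'a', 'b']
print(least_connections({"a": 12, "b": 4, "c": 9}))  # b
```

The naive schedule sends bursts of consecutive connections to the heaviest server; smoother interleavings exist, but the long-run proportions are the same.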

Limitations:
- connection-grained
- no request locality
- no session locality
- failover?

Mechanics of L4 Switching

“Client C at TCP port p1 requests connection to TCP server at port p2 at VIP address x.”

[Figure: servers a, b, c, d behind virtual IP address x.]

Smart switch:

- 1. recognizes connect request (TCP SYN)

- 2. selects specific server (d) for service at p2

- 3. replaces x with d in connect request packet

- 4. remembers connection {(C,p1),(d,p2)}

- 5. for incoming packets from (C,p1) for (x,p2): replace virtual IP address x with d and forward to d

- 6. for outgoing packets from (d,p2) for (C,p1): replace d with x and forward to C

This is an instance of network address translation (NAT).
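The six steps can be sketched as a connection table plus two rewrite functions, one per direction. This is a minimal sketch, assuming one VIP, the slide's symbolic addresses (`C`, `x`, `a` through `d`), and a table keyed on the client endpoint and the VIP port; the `L4Switch` and `Packet` names are hypothetical, and the sketch ignores connection teardown (FIN/RST), table timeouts, and the IP/TCP checksum updates a real NAT must perform after rewriting addresses.

```python
import random
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Packet:
    src: tuple          # (address, TCP port)
    dst: tuple          # (address, TCP port)
    syn: bool = False   # True for a connect request


class L4Switch:
    """Hypothetical NAT-style L4 switch for a single VIP."""

    def __init__(self, vip, servers, select=random.choice):
        self.vip = vip
        self.servers = servers
        self.select = select      # server selection policy
        self.conns = {}           # ((C, p1), p2) -> d

    def inbound(self, pkt):
        """Client -> VIP. Return the packet rewritten for a server, or None."""
        vip, p2 = pkt.dst
        if vip != self.vip:
            return None
        key = (pkt.src, p2)
        if pkt.syn and key not in self.conns:
            # Steps 1, 2, 4: recognize the SYN, select a server, remember it.
            self.conns[key] = self.select(self.servers)
        d = self.conns.get(key)
        if d is None:
            return None           # non-SYN packet with no binding: drop
        # Steps 3 and 5: replace virtual IP address x with d.
        return replace(pkt, dst=(d, p2))

    def outbound(self, pkt):
        """Server -> client. Return the packet rewritten to come from the VIP."""
        d, p2 = pkt.src
        if self.conns.get((pkt.dst, p2)) != d:
            return None           # not a connection this switch set up
        # Step 6: replace d with x so the client only ever sees the VIP.
        return replace(pkt, src=(self.vip, p2))


sw = L4Switch("x", ["a", "b", "c", "d"], select=lambda servers: servers[3])
syn = Packet(src=("C", 1234), dst=("x", 80), syn=True)
print(sw.inbound(syn).dst)      # ('d', 80)
reply = Packet(src=("d", 80), dst=("C", 1234))
print(sw.outbound(reply).src)   # ('x', 80)
```

Because step 6 rewrites the server's replies, this form of L4 switching requires return traffic to pass back through the switch, which is why handling return traffic appears among the SLB issues.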
