1

1

1

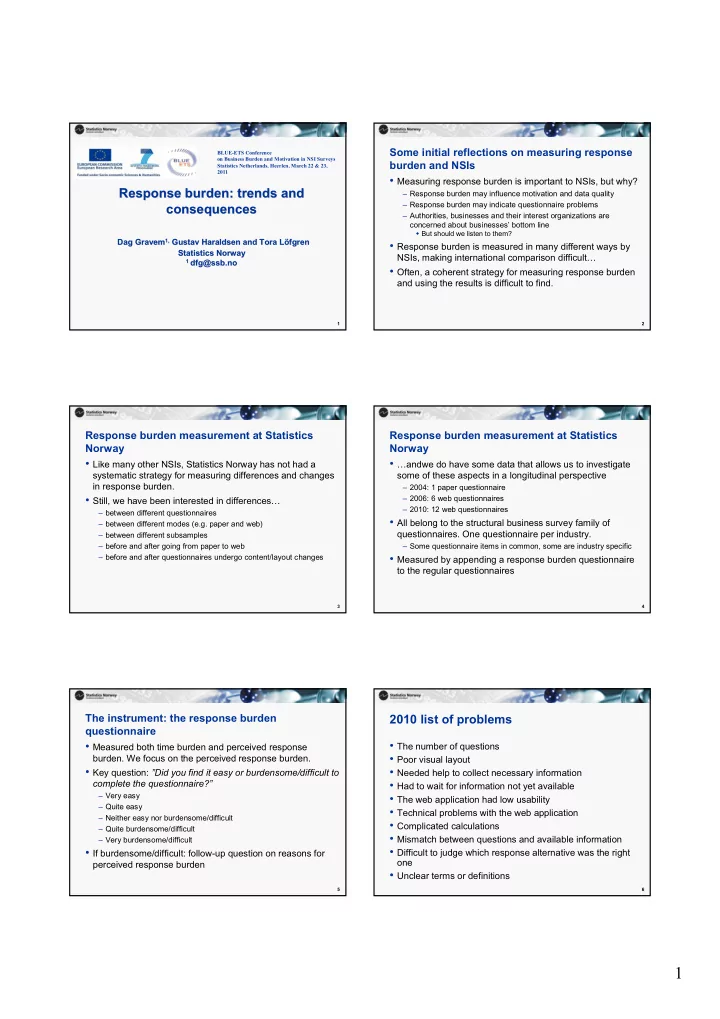

Response burden: trends and consequences

Dag Gravem¹, Gustav Haraldsen and Tora Löfgren
Statistics Norway

¹ dfg@ssb.no

BLUE-ETS Conference on Business Burden and Motivation in NSI Surveys

Statistics Netherlands, Heerlen, March 22 & 23, 2011


Some initial reflections on measuring response burden and NSIs

- Measuring response burden is important to NSIs, but why?

– Response burden may influence motivation and data quality
– Response burden may indicate questionnaire problems
– Authorities, businesses and their interest organizations are concerned about businesses’ bottom line

But should we listen to them?

- Response burden is measured in many different ways by NSIs, making international comparison difficult…

- Often, a coherent strategy for measuring response burden and using the results is difficult to find.


Response burden measurement at Statistics Norway

- Like many other NSIs, Statistics Norway has not had a systematic strategy for measuring differences and changes in response burden.

- Still, we have been interested in differences…

– between different questionnaires
– between different modes (e.g. paper and web)
– between different subsamples
– before and after going from paper to web
– before and after questionnaires undergo content/layout changes


- …and we do have some data that allows us to investigate some of these aspects in a longitudinal perspective

– 2004: 1 paper questionnaire
– 2006: 6 web questionnaires
– 2010: 12 web questionnaires

- All belong to the structural business survey family of questionnaires, with one questionnaire per industry.

– Some questionnaire items in common, some are industry specific

- Measured by appending a response burden questionnaire to the regular questionnaires
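The survey rounds listed above (2004 paper, 2006 and 2010 web) invite a simple comparison of perceived burden across groups. A minimal sketch follows. It assumes a hypothetical coding of the key question as integers 1 to 5 (1 = "Very easy", 5 = "Very burdensome/difficult"). The function name `share_burdensome` and the group labels are illustrative and are not taken from Statistics Norway's own processing.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# ASSUMPTION (hypothetical coding): answers to the key question are stored as
# integers 1-5, from 1 = "Very easy" to 5 = "Very burdensome/difficult".
BURDENSOME_CODES = {4, 5}  # "Quite" or "Very burdensome/difficult"


def share_burdensome(responses: Iterable[Tuple[str, int]]) -> Dict[str, float]:
    """Share of respondents per group who rated the questionnaire quite or
    very burdensome/difficult.

    `responses` holds (group, score) pairs. A group label could be a survey
    round such as "2004 paper", a mode, or a subsample.
    """
    totals: Dict[str, int] = defaultdict(int)
    burdened: Dict[str, int] = defaultdict(int)
    for group, score in responses:
        if score not in range(1, 6):
            raise ValueError(f"score must be between 1 and 5, got {score}")
        totals[group] += 1
        if score in BURDENSOME_CODES:
            burdened[group] += 1
    # Every group in `totals` has at least one response, so there is no division by zero.
    return {group: burdened[group] / totals[group] for group in totals}
```

The sketch reports a share instead of a mean score because the answer scale is ordinal. The 2004, 2006 and 2010 rounds cover 1, 6 and 12 questionnaires respectively, so a fairer comparison might group by questionnaire or industry as well as by year.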


The instrument: the response burden questionnaire

- Measured both time burden and perceived response burden. We focus on the perceived response burden.

- Key question: “Did you find it easy or burdensome/difficult to complete the questionnaire?”

– Very easy
– Quite easy
– Neither easy nor burdensome/difficult
– Quite burdensome/difficult
– Very burdensome/difficult

- If burdensome/difficult: follow-up question on the reasons for the perceived response burden
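The answer scale and the routing to the follow-up question can be expressed as a small data structure. This is a minimal sketch. The numeric codes, the names `PerceivedBurden` and `needs_follow_up`, and the reading of "if burdensome/difficult" as "quite or very burdensome/difficult" are all assumptions, not a description of the actual instrument.

```python
from enum import IntEnum
from typing import Optional


class PerceivedBurden(IntEnum):
    """Answer scale of the key question (ASSUMPTION: hypothetical codes 1-5)."""

    VERY_EASY = 1
    QUITE_EASY = 2
    NEITHER = 3  # "Neither easy nor burdensome/difficult"
    QUITE_BURDENSOME = 4  # "Quite burdensome/difficult"
    VERY_BURDENSOME = 5  # "Very burdensome/difficult"


def needs_follow_up(answer: Optional[PerceivedBurden]) -> bool:
    """Route to the follow-up question on reasons.

    ASSUMPTION: only "quite" or "very burdensome/difficult" answers trigger
    the follow-up. A missing answer (None) does not.
    """
    return answer is not None and answer >= PerceivedBurden.QUITE_BURDENSOME
```

Using an `IntEnum` keeps the ordinal order of the scale, which makes the threshold comparison explicit. If the follow-up were also shown for the middle category, changing the threshold to `NEITHER` would be enough.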


2010 list of problems

- The number of questions

- Poor visual layout

- Needed help to collect necessary information

- Had to wait for information not yet available

- The web application had low usability

- Technical problems with the web application

- Complicated calculations

- Mismatch between questions and available information

- Difficult to judge which response alternative was the right one

- Unclear terms or definitions
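Follow-up answers against a list like the one above are typically summarised by ranking the reasons. A minimal sketch follows. It assumes respondents could tick several reasons, which the list itself does not state. The short labels in `REASONS` and the function name `rank_reasons` are hypothetical abbreviations of the 2010 categories.

```python
from collections import Counter
from typing import Iterable, List, Set, Tuple

# Hypothetical short labels for the 2010 list of problems.
REASONS = (
    "number of questions",
    "poor visual layout",
    "needed help to collect information",
    "information not yet available",
    "low usability of web application",
    "technical problems with web application",
    "complicated calculations",
    "mismatch between questions and information",
    "hard to judge right response alternative",
    "unclear terms or definitions",
)


def rank_reasons(answers: Iterable[Set[str]]) -> List[Tuple[str, float]]:
    """Rank reasons by the share of burdened respondents citing each one.

    Each element of `answers` is the set of reasons one respondent ticked.
    ASSUMPTION: several reasons may be ticked, so shares can sum to more than 1.
    """
    known = set(REASONS)
    counts: Counter = Counter()
    n_respondents = 0
    for ticked in answers:
        unknown = ticked - known
        if unknown:
            raise ValueError(f"unknown reasons: {sorted(unknown)}")
        counts.update(ticked)
        n_respondents += 1
    if n_respondents == 0:
        return []
    # Most frequently cited first; ties broken alphabetically for stable output.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [(reason, count / n_respondents) for reason, count in ranked]
```

The denominator is the number of respondents who answered the follow-up, not the full sample. The shares therefore describe why burdened respondents found the questionnaire difficult, not how common each problem is overall.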