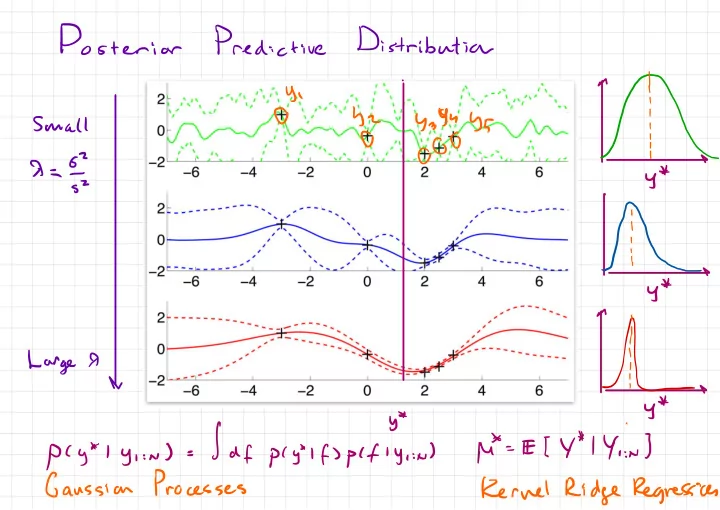

SLIDE 1 Posterior Predictive Distribution

$$
P(y^* \mid y_{1:n}) = \int df \; p(y^* \mid f)\, p(f \mid y_{1:n})
$$
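The integral over $f$ can be approximated by drawing samples of $f$ from the posterior $p(f \mid y_{1:n})$ and averaging $p(y^* \mid f)$ over them. The sketch below is an illustration only. The slide does not specify a model, so it assumes a scalar $f$ with a zero-mean Gaussian prior and Gaussian observation noise, a case where the integral also has a closed form to compare against. The function names, the data values, and the parameters `noise_var` and `prior_var` are all hypothetical.

```python
import math
import random


def normal_pdf(x, mean, var):
    """Density of a normal distribution with the given mean and variance."""
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)


def posterior_params(ys, noise_var, prior_var):
    """Mean and variance of p(f | y_1:n) under the ASSUMED model:
    f ~ N(0, prior_var), y_i | f ~ N(f, noise_var)."""
    n = len(ys)
    post_var = 1.0 / (1.0 / prior_var + n / noise_var)
    post_mean = post_var * sum(ys) / noise_var
    return post_mean, post_var


def predictive_exact(y_star, ys, noise_var=1.0, prior_var=10.0):
    """Closed-form posterior predictive density for the assumed Gaussian model."""
    m, s2 = posterior_params(ys, noise_var, prior_var)
    return normal_pdf(y_star, m, s2 + noise_var)


def predictive_mc(y_star, ys, noise_var=1.0, prior_var=10.0,
                  num_samples=20000, seed=0):
    """Monte Carlo estimate of the integral of p(y*|f) p(f|y_1:n) over f."""
    rng = random.Random(seed)
    m, s2 = posterior_params(ys, noise_var, prior_var)
    total = 0.0
    for _ in range(num_samples):
        f = rng.gauss(m, math.sqrt(s2))           # f ~ p(f | y_1:n)
        total += normal_pdf(y_star, f, noise_var)  # p(y* | f)
    return total / num_samples


# Hypothetical observations y_1:n and a test value y*.
ys = [0.8, 1.3, 0.9, 1.1]
exact = predictive_exact(1.0, ys)
approx = predictive_mc(1.0, ys)
print(f"exact={exact:.4f}  monte_carlo={approx:.4f}")
```

In this assumed model the two numbers should agree closely. The sampling version is the one that carries over to models where the integral has no closed form, provided samples from the posterior are available.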