SLIDE 1

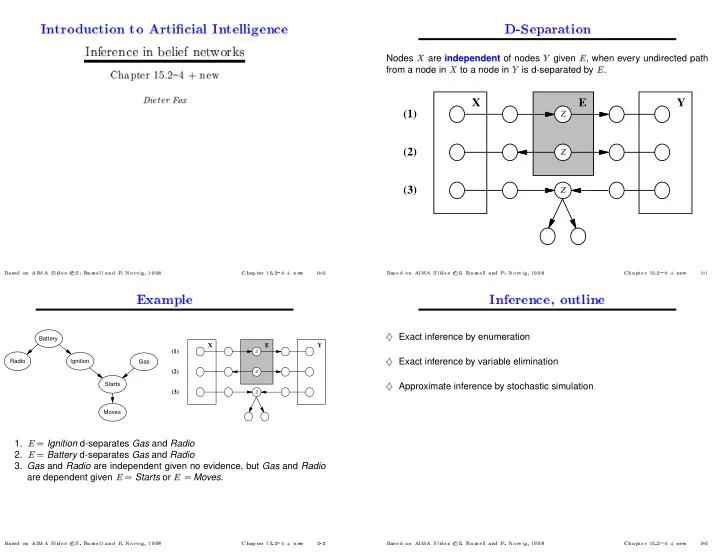

Nodes X are independent of nodes Y given E when every undirected path from a node in X to a node in Y is d-separated by E.

[Figure: three cases, (1), (2) and (3), of a node Z on a path between X and Y, with evidence E.]

[Figure: car network with nodes Battery, Radio, Ignition, Gas, Starts, Moves.]

1. E = Ignition d-separates Gas and Radio.
2. E = Battery d-separates Gas and Radio.
3. Gas and Radio are independent given no evidence, but Gas and Radio are dependent given E = Starts or E = Moves.
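The three claims above can be checked mechanically. The following is a minimal sketch, not the lecture's own code. It assumes the usual textbook edges for the car network (Battery → Radio, Battery → Ignition, Ignition → Starts, Gas → Starts, Starts → Moves), which the slide text does not list, and it assumes the standard blocking rules: a non-collider node Z blocks a path when Z is in E, and a collider Z blocks a path when neither Z nor any of its descendants is in E. All function names are hypothetical.

```python
# ASSUMPTION: edges (parent, child) of the car network; the slide only names the nodes.
EDGES = [
    ("Battery", "Radio"),
    ("Battery", "Ignition"),
    ("Ignition", "Starts"),
    ("Gas", "Starts"),
    ("Starts", "Moves"),
]


def children(node):
    return {c for p, c in EDGES if p == node}


def parents(node):
    return {p for p, c in EDGES if c == node}


def descendants(node):
    """All nodes reachable from `node` by following edges forward."""
    seen, stack = set(), [node]
    while stack:
        for child in children(stack.pop()):
            if child not in seen:
                seen.add(child)
                stack.append(child)
    return seen


def undirected_paths(start, goal, path=()):
    """Yield every simple path from start to goal, ignoring edge direction."""
    path = path + (start,)
    if start == goal:
        yield path
        return
    for nxt in children(start) | parents(start):
        if nxt not in path:
            yield from undirected_paths(nxt, goal, path)


def path_blocked(path, evidence):
    """True if some interior node Z of the path blocks it given the evidence."""
    for prev, z, nxt in zip(path, path[1:], path[2:]):
        is_collider = prev in parents(z) and nxt in parents(z)
        if is_collider:
            # Both arrows point into Z: blocks unless Z or a descendant is observed.
            if z not in evidence and not (descendants(z) & evidence):
                return True
        elif z in evidence:
            # Chain or common cause: blocks when Z itself is observed.
            return True
    return False


def d_separated(x, y, evidence=()):
    """True if every undirected path between x and y is blocked by the evidence."""
    evidence = set(evidence)
    return all(path_blocked(p, evidence) for p in undirected_paths(x, y))


if __name__ == "__main__":
    for e in [{"Ignition"}, {"Battery"}, set(), {"Starts"}, {"Moves"}]:
        print(sorted(e), d_separated("Gas", "Radio", e))
```

Enumerating every simple path is exponential in general; it is used here only because it mirrors the definition directly and the example network is tiny.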

- Exact inference by enumeration
- Exact inference by variable elimination
- Approximate inference by stochastic simulation
