SLIDE 1

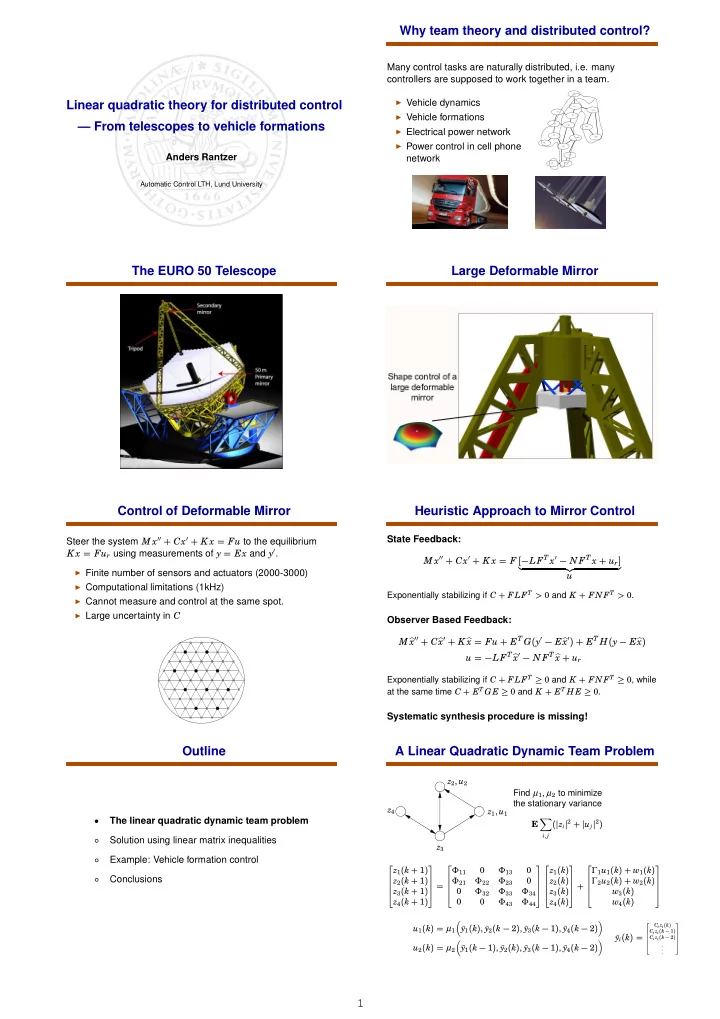

Linear quadratic theory for distributed control: From telescopes to vehicle formations

Anders Rantzer

Automatic Control LTH, Lund University

Why team theory and distributed control?

Many control tasks are naturally distributed, i.e. many controllers are supposed to work together in a team.

◮ Vehicle dynamics
◮ Vehicle formations
◮ Electrical power network
◮ Power control in cell phone network

[Figure: network diagram with nodes G1 to G16]

The EURO 50 Telescope

Large Deformable Mirror

Control of Deformable Mirror

Steer the system $M x'' + C x' + K x = F u$ to the equilibrium $K x = F u_r$ using measurements of $y = E x$ and $y'$.

◮ Finite number of sensors and actuators (2000-3000)
◮ Computational limitations (1 kHz)
◮ Cannot measure and control at the same spot
◮ Large uncertainty in $C$

Heuristic Approach to Mirror Control

State Feedback:

$$M x'' + C x' + K x = F \underbrace{\left[ -L F^T x' - N F^T x + u_r \right]}_{u}$$

Exponentially stabilizing if $C + F L F^T > 0$ and $K + F N F^T > 0$.
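The condition above can be checked numerically on a toy model. The following is a minimal sketch, not the telescope model: the sizes and the matrices `M`, `C`, `K`, `F`, `L`, `N` are hypothetical values chosen for illustration, and it assumes `M` is symmetric positive definite and `C`, `K` are symmetric, which the slide does not state. With $u_r = 0$ the closed loop is $M x'' + (C + F L F^T) x' + (K + F N F^T) x = 0$, so the sketch tests both matrix conditions and then inspects the eigenvalues of the equivalent first-order system.

```python
import numpy as np

# Hypothetical 4-node, 2-actuator example (illustration only).
n = 4
M = np.eye(n)                                            # mass matrix, assumed symmetric positive definite
K = 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)   # tridiagonal stiffness matrix
C = 0.01 * K                                             # light damping
F = np.zeros((n, 2))                                     # actuators at the first and last node
F[0, 0] = 1.0
F[3, 1] = 1.0
L = np.eye(2)                                            # velocity feedback gain
N = np.eye(2)                                            # position feedback gain


def is_positive_definite(A):
    """Return True if the symmetric part of A has only positive eigenvalues."""
    sym = 0.5 * (A + A.T)
    return bool(np.all(np.linalg.eigvalsh(sym) > 0))


# Closed-loop damping and stiffness matrices for u_r = 0.
C_cl = C + F @ L @ F.T
K_cl = K + F @ N @ F.T
conditions_hold = is_positive_definite(C_cl) and is_positive_definite(K_cl)

# First-order form with state (x, x'); stability means all eigenvalues
# have negative real part.
Minv = np.linalg.inv(M)
A_cl = np.block([
    [np.zeros((n, n)), np.eye(n)],
    [-Minv @ K_cl, -Minv @ C_cl],
])
max_real_part = float(np.max(np.linalg.eigvals(A_cl).real))

print("conditions hold:", conditions_hold)
print("largest real part of closed-loop eigenvalues:", max_real_part)
```

The eigenvalue test is only a cross-check on this particular example; the matrix inequalities are the condition stated on the slide.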

Observer Based Feedback:

$$M \hat{x}'' + C \hat{x}' + K \hat{x} = F u + E^T G (y' - E \hat{x}') + E^T H (y - E \hat{x})$$

$$u = -L F^T \hat{x}' - N F^T \hat{x} + u_r$$

Exponentially stabilizing if $C + F L F^T \geq 0$ and $K + F N F^T \geq 0$, while at the same time $C + E^T G E \geq 0$ and $K + E^T H E \geq 0$.
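A short derivation may help explain where the second pair of conditions comes from. It is a sketch under one assumption about notation: the observer state is written $\hat{x}$, and $e = x - \hat{x}$ denotes the estimation error (the symbol $e$ is introduced here and is not on the slide). Subtracting the observer equation from the plant equation and using $y = E x$, $y' = E x'$ gives:

```latex
\begin{align*}
M x'' + C x' + K x &= F u \\
M \hat{x}'' + C \hat{x}' + K \hat{x} &= F u + E^T G E (x' - \hat{x}') + E^T H E (x - \hat{x}) \\
\Longrightarrow \quad
M e'' + (C + E^T G E)\, e' + (K + E^T H E)\, e &= 0
\end{align*}
```

The error dynamics have the same second-order form as the state feedback loop, with $C + E^T G E$ and $K + E^T H E$ in the roles of damping and stiffness.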

Systematic synthesis procedure is missing!

Outline

- The linear quadratic dynamic team problem

○ Solution using linear matrix inequalities
○ Example: Vehicle formation control
○ Conclusions

A Linear Quadratic Dynamic Team Problem

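[Figure: interconnection diagram with nodes $(z_1, u_1)$, $(z_2, u_2)$, $z_3$, $z_4$]

Find $\mu_1, \mu_2$ to minimize the stationary variance

$$\mathbf{E} \sum_{i,j} \left( z_i^2 + u_j^2 \right)$$

with dynamics

$$\begin{bmatrix} z_1(k+1) \\ z_2(k+1) \\ z_3(k+1) \\ z_4(k+1) \end{bmatrix}
=
\begin{bmatrix}
\Phi_{11} & 0 & \Phi_{13} & 0 \\
\Phi_{21} & \Phi_{22} & \Phi_{23} & 0 \\
0 & \Phi_{32} & \Phi_{33} & \Phi_{34} \\
0 & 0 & \Phi_{43} & \Phi_{44}
\end{bmatrix}
\begin{bmatrix} z_1(k) \\ z_2(k) \\ z_3(k) \\ z_4(k) \end{bmatrix}
+
\begin{bmatrix} \Gamma_1 u_1(k) + w_1(k) \\ \Gamma_2 u_2(k) + w_2(k) \\ w_3(k) \\ w_4(k) \end{bmatrix}$$

and control laws

$$u_1(k) = \mu_1\big( \bar{y}_1(k),\ \bar{y}_2(k-2),\ \bar{y}_3(k-1),\ \bar{y}_4(k-2) \big)$$

$$u_2(k) = \mu_2\big( \bar{y}_1(k-1),\ \bar{y}_2(k),\ \bar{y}_3(k-1),\ \bar{y}_4(k-2) \big)$$

where

$$\bar{y}_i(k) = \begin{bmatrix} C_i z_i(k) \\ C_i z_i(k-1) \\ C_i z_i(k-2) \\ \vdots \end{bmatrix}$$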
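One way to read the delays in the arguments of $\mu_1$ and $\mu_2$ is as the number of time steps a state needs to influence another through the nonzero blocks of $\Phi$. The slide does not state this interpretation, so the sketch below is an observation rather than the author's definition. It encodes only the sparsity pattern of $\Phi$ (the helper name `propagation_delays` is hypothetical) and computes shortest path lengths between nodes.

```python
import numpy as np

# Sparsity pattern of Phi: pattern[i, j] is True when the block
# Phi_{i+1, j+1} appears in the dynamics, i.e. z_{j+1}(k) enters z_{i+1}(k+1).
pattern = np.array([
    [1, 0, 1, 0],
    [1, 1, 1, 0],
    [0, 1, 1, 1],
    [0, 0, 1, 1],
], dtype=bool)


def propagation_delays(pattern):
    """Fewest time steps for node j to influence node i (Floyd-Warshall)."""
    n = pattern.shape[0]
    d = np.where(pattern, 1.0, np.inf)   # one step along each nonzero block
    np.fill_diagonal(d, 0.0)             # a node knows its own output at once
    for m in range(n):
        # Allow paths j -> m -> i: d[i, m] + d[m, j], broadcast over all (i, j).
        d = np.minimum(d, d[:, [m]] + d[[m], :])
    return d


delays = propagation_delays(pattern)
print("delays seen by controller 1:", delays[0])
print("delays seen by controller 2:", delays[1])
```

Rows 1 and 2 of `delays` can be compared with the time shifts in the arguments of $\mu_1$ and $\mu_2$.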