Whatʼs the probability that the next candy is lime?

1

CS440/ECE448: Intro AI

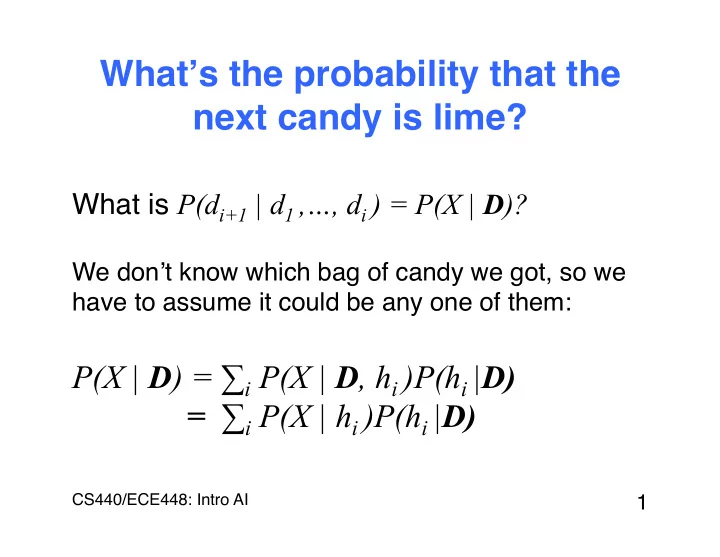

- What is P(di+1 | d1 ,…, di ) = P(X | D)?

What s the probability that the next candy is lime? What is P(d - - PowerPoint PPT Presentation

What s the probability that the next candy is lime? What is P(d i+1 | d 1 ,, d i ) = P(X | D )? We don t know which bag of candy we got, so we have to assume it could be any one of them: P(X | D ) = i P(X | D , h i )P(h i |

1

CS440/ECE448: Intro AI

3

CS440/ECE448: Intro AI

Burglary Earthquake Alarm JohnCalls MaryCalls

B=t ¡ B=f ¡ .001 ¡ 0.999 ¡ E=t ¡ E=f ¡ .002 ¡ 0.998 ¡ B ¡ E ¡ A=t ¡ A=f ¡ t ¡ t ¡ .95 ¡ .05 ¡ t ¡ f ¡ .94 ¡ .06 ¡ f ¡ t ¡ .29 ¡ .71 ¡ f ¡ f ¡ .999 ¡ .001 ¡ A ¡ M=t ¡ M=f ¡ t ¡ .7 ¡ .3 ¡ f ¡ .01 ¡ .99 ¡ A ¡ J=t ¡ J=f ¡ t ¡ .9 ¡ .1 ¡ f ¡ .05 ¡ .95 ¡

What is the probability of a burglary if John and Mary call?

– h1: 100% cherry – h2: 75% cherry + 25% lime – h3: 50% cherry + 50% lime – h4: 25% cherry + 75% lime – h5: 100% lime 6

CS440/ECE448: Intro AI

CS440/ECE448: Intro AI

CS440/ECE448: Intro AI

9

CS440/ECE448: Intro AI

10

CS440/ECE448: Intro AI

11

CS440/ECE448: Intro AI

argmaxh P(h | D) = argmaxh P(D | h)P(h) P(D) = argmaxh P(D | h)P(h)

CS440/ECE448: Intro AI

13

CS440/ECE448: Intro AI

CS440/ECE448: Intro AI

15

CS440/ECE448: Intro AI

0.2 0.4 0.6 0.8 1 2 4 6 8 10 Posterior probability of hypothesis Number of observations in d P(h1 | d) P(h2 | d) P(h3 | d) P(h4 | d) P(h5 | d)

16

CS440/ECE448: Intro AI

0.4 0.5 0.6 0.7 0.8 0.9 1 2 4 6 8 10 Probability that next candy is lime Number of observations in d

CS440/ECE448: Intro AI

18

CS440/ECE448: Intro AI

19

CS440/ECE448: Intro AI

20

CS440/ECE448: Intro AI

cherry θ lime: 1-θ

CS440/ECE448: Intro AI

CS440/ECE448: Intro AI

j=1 N

23

CS440/ECE448: Intro AI

j=1 N

24

CS440/ECE448: Intro AI

25

CS440/ECE448: Intro AI

CS440/ECE448: Intro AI