30/8/18, 10(32 pm Week 06 Lectures Page 1 of 32 file:///Users/jas/srvr/apps/cs9315/18s2/lectures/week06/notes.html

Week 06 Lectures

Recap on Implementing Selection

1/102

Selection = select * from R where C yields a subset of R tuples satisfying condition C a very important (frequent) operation in relational databases Types of selection determined by type of condition

- ne: select * from R where id=k

pmr: select * from R where age=65 rng: select * from R where age≥18 and age≤21 Strategies for implementing selection efficiently arrangement of tuples in file (e.g. sorting, hashing) auxiliary data structures (e.g. indexes, signatures)

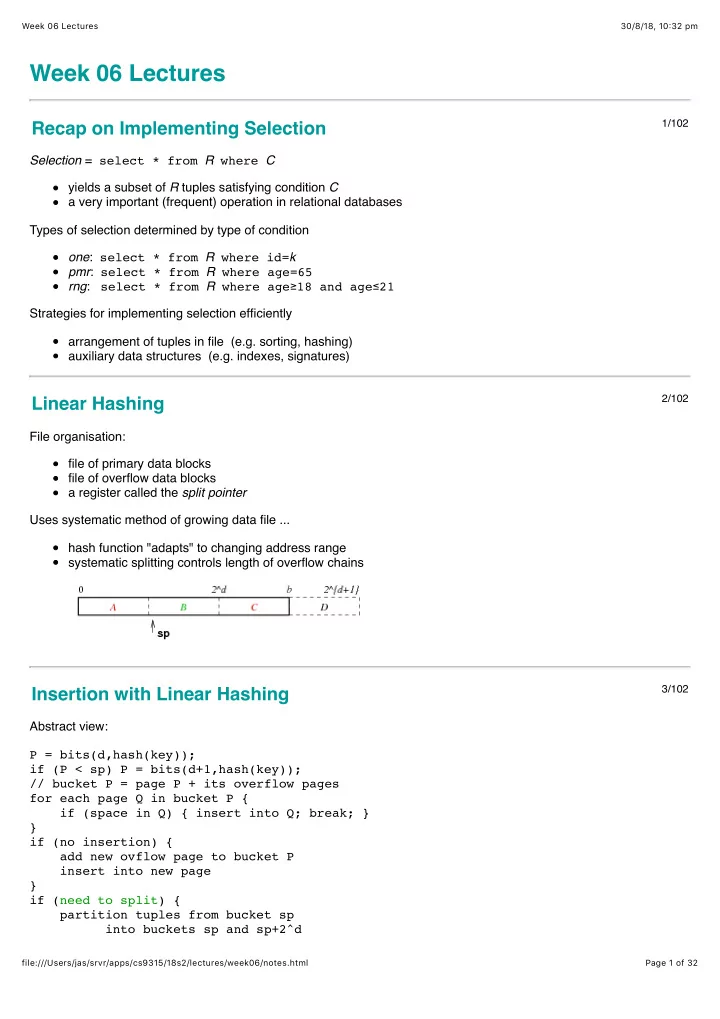

Linear Hashing

2/102

File organisation: file of primary data blocks file of overflow data blocks a register called the split pointer Uses systematic method of growing data file ... hash function "adapts" to changing address range systematic splitting controls length of overflow chains

Insertion with Linear Hashing

3/102