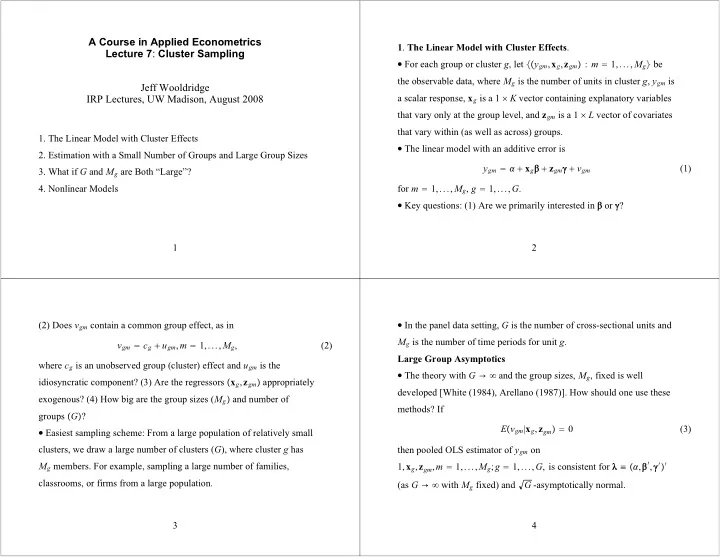

SLIDE 1

A Course in Applied Econometrics
Lecture 7: Cluster Sampling

Jeff Wooldridge
IRP Lectures, UW Madison, August 2008

- 1. The Linear Model with Cluster Effects

- 2. Estimation with a Small Number of Groups and Large Group Sizes

- 3. What if G and Mg are Both “Large”?

- 4. Nonlinear Models


- 1. The Linear Model with Cluster Effects.