Heuristic Optimization

Lecture 8

Algorithm Engineering Group Hasso Plattner Institute, University of Potsdam

9 June 2015


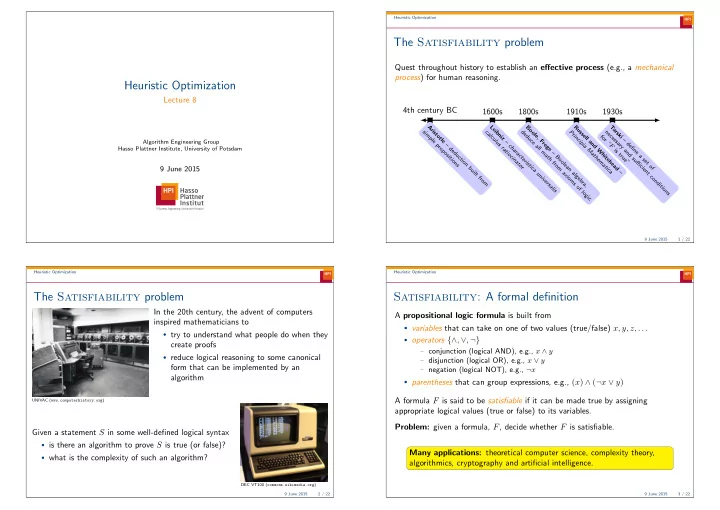

The Satisfiability problem

Quest throughout history to establish an effective process (e.g., a mechanical process) for human reasoning:

- 4th century BC: Aristotle – deduction built from simple propositions
- 1600s: Leibniz – characteristica universalis, calculus ratiocinator
- 1800s: Boole, Frege – Boolean algebra, deduce all math from axioms of logic
- 1910s: Russell and Whitehead – Principia Mathematica
- 1930s: Tarski – define a set of necessary and sufficient conditions for “F is true”


The Satisfiability problem

UNIVAC (www.computerhistory.org)

In the 20th century, the advent of computers inspired mathematicians to

- try to understand what people do when they create proofs
- reduce logical reasoning to some canonical form that can be implemented by an algorithm

Given a statement S in some well-defined logical syntax:

- is there an algorithm to prove S is true (or false)?
- what is the complexity of such an algorithm?

DEC VT100 (commons.wikimedia.org)

Satisfiability: A formal definition

A propositional logic formula is built from

- variables x, y, z, ... that can take on one of two values (true/false)
- operators {∧, ∨, ¬}
  - conjunction (logical AND), e.g., x ∧ y
  - disjunction (logical OR), e.g., x ∨ y
  - negation (logical NOT), e.g., ¬x
- parentheses that can group expressions, e.g., (x) ∧ (¬x ∨ y)
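As an illustration of this grammar, the sketch below encodes formulas as a small Python syntax tree, where nesting plays the role of parentheses, and evaluates a formula under a given truth assignment. The class and function names (`Var`, `Not`, `And`, `Or`, `evaluate`) are hypothetical choices for this example, not notation from the lecture.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Dict, Union


# Hypothetical node types: one per building block of a propositional formula.
@dataclass(frozen=True)
class Var:
    name: str


@dataclass(frozen=True)
class Not:
    arg: Formula


@dataclass(frozen=True)
class And:
    left: Formula
    right: Formula


@dataclass(frozen=True)
class Or:
    left: Formula
    right: Formula


Formula = Union[Var, Not, And, Or]


def evaluate(f: Formula, assignment: Dict[str, bool]) -> bool:
    """Return the truth value of f when each variable takes its value from assignment."""
    if isinstance(f, Var):
        return assignment[f.name]
    if isinstance(f, Not):
        return not evaluate(f.arg, assignment)
    if isinstance(f, And):
        return evaluate(f.left, assignment) and evaluate(f.right, assignment)
    if isinstance(f, Or):
        return evaluate(f.left, assignment) or evaluate(f.right, assignment)
    raise TypeError(f"not a formula: {f!r}")


# The example (x) ∧ (¬x ∨ y): grouping is expressed by nesting the nodes.
x, y = Var("x"), Var("y")
example = And(x, Or(Not(x), y))
print(evaluate(example, {"x": True, "y": True}))   # True
print(evaluate(example, {"x": True, "y": False}))  # False
```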

A formula F is said to be satisfiable if it can be made true by assigning appropriate logical values (true or false) to its variables.

Problem: given a formula F, decide whether F is satisfiable.

Many applications: theoretical computer science, complexity theory, algorithmics, cryptography and artificial intelligence.
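The decision problem can be answered by exhaustive search: try every truth assignment and stop at the first one that makes F true. This is a minimal sketch, assuming F is supplied as a Python predicate over a dictionary of variable values; the helper names `find_satisfying_assignment` and `is_satisfiable` are hypothetical. With n variables there are 2^n assignments to check in the worst case.

```python
from itertools import product
from typing import Callable, Dict, List, Optional

Assignment = Dict[str, bool]


def find_satisfying_assignment(
    variables: List[str], formula: Callable[[Assignment], bool]
) -> Optional[Assignment]:
    """Enumerate all 2**n truth assignments; return the first that satisfies formula, else None."""
    for values in product([False, True], repeat=len(variables)):
        assignment = dict(zip(variables, values))
        if formula(assignment):
            return assignment
    return None


def is_satisfiable(variables: List[str], formula: Callable[[Assignment], bool]) -> bool:
    """Decision version: True iff some assignment makes formula true."""
    return find_satisfying_assignment(variables, formula) is not None


# (x) ∧ (¬x ∨ y) is satisfiable, e.g., with x = y = true.
f1 = lambda a: a["x"] and (not a["x"] or a["y"])
# x ∧ ¬x is unsatisfiable: no assignment makes it true.
f2 = lambda a: a["x"] and not a["x"]

print(find_satisfying_assignment(["x", "y"], f1))  # {'x': True, 'y': True}
print(is_satisfiable(["x"], f2))                   # False
```

Because the number of assignments doubles with every added variable, this exhaustive approach quickly becomes impractical as formulas grow.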
