SLIDE 1

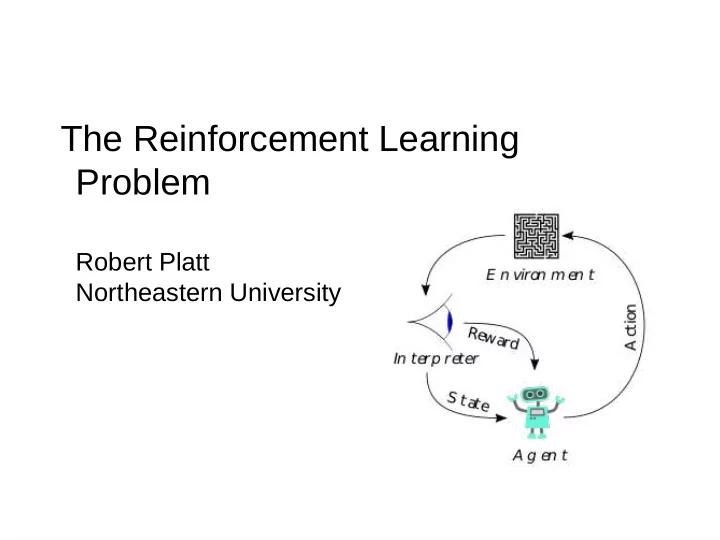

The Reinforcement Learning Problem

Robert Platt Northeastern University

SLIDE 2

[Diagram: Agent and World in a loop, with arrows labeled Action, Observation, and Reward]

On a single time step, agent does the following:

1. observe some information
2. select an action to execute
3. take note of any reward

Goal of agent: select actions that maximize sum of expected future rewards.
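The three steps above can be sketched as a loop. This is only an illustrative sketch: `CoinWorld`, `run_episode`, and the policy function are hypothetical names that do not come from the slides, and the toy world (reward when the action matches the observed coin) is an assumption chosen to keep the example small.

```python
import random


class CoinWorld:
    """Hypothetical toy world: reward 1 when the action matches the visible coin."""

    def __init__(self, seed=0):
        self.rng = random.Random(seed)
        self.coin = self.rng.randint(0, 1)

    def observe(self):
        # The agent gets to see the current coin face (0 or 1).
        return self.coin

    def step(self, action):
        # Reward depends on the action; then the world moves on to a new coin.
        reward = 1.0 if action == self.coin else 0.0
        self.coin = self.rng.randint(0, 1)
        return reward


def run_episode(world, policy, num_steps):
    """Run the observe / act / reward loop and return the sum of rewards."""
    total_reward = 0.0
    for _ in range(num_steps):
        observation = world.observe()   # 1. observe some information
        action = policy(observation)    # 2. select an action to execute
        reward = world.step(action)     # 3. take note of any reward
        total_reward += reward
    return total_reward
```

For instance, `run_episode(CoinWorld(), lambda obs: obs, 10)` uses a policy that copies the observation, so it collects a reward on every step. A policy that ignores the observation would typically collect less.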


SLIDE 3

Example: rat in a maze

Agent World

- Action: move left/right/up/down
- Observation: position in maze
- Reward: +1 if the rat gets the cheese
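One way to picture this example in code is a small grid world. The sketch below is hypothetical: the 3x3 open grid without walls, the cheese location, and the names `MazeWorld` and `MOVES` are assumptions for illustration, not details given on the slide.

```python
# Hypothetical mapping from action names to (row, column) offsets.
MOVES = {"left": (0, -1), "right": (0, 1), "up": (-1, 0), "down": (1, 0)}


class MazeWorld:
    """Assumed 3x3 open grid; the rat starts at (0, 0), the cheese sits at (2, 2)."""

    def __init__(self, size=3, cheese=(2, 2)):
        self.size = size
        self.cheese = cheese
        self.position = (0, 0)

    def observe(self):
        # Observation: the rat's position in the maze.
        return self.position

    def step(self, action):
        # Action: move one cell, staying inside the grid boundaries.
        d_row, d_col = MOVES[action]
        row = min(max(self.position[0] + d_row, 0), self.size - 1)
        col = min(max(self.position[1] + d_col, 0), self.size - 1)
        self.position = (row, col)
        # Reward: +1 if the rat is now on the cheese, otherwise 0.
        return 1.0 if self.position == self.cheese else 0.0
```

Stepping `right, right, down, down` from the start reaches the cheese on the fourth move, which is the only step that returns a reward of 1.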

SLIDE 4

Example: robot makes coffee

Agent World

- Action: move robot joints
- Observation: camera image
- Reward: +1 if coffee is in the cup

SLIDE 5

Example: agent plays pong

Agent World

- Action: joystick command
- Observation: screen pixels
- Reward: game score

SLIDE 6

Reinforcement Learning

[Diagram: Agent and World in a loop, with arrows labeled Action, Observation, and Reward]

Goal of agent: select actions that maximize sum of expected future rewards.

- agent computes a rule for selecting actions to execute

SLIDE 7

Reinforcement Learning

Agent World

Goal of agent: select actions that maximize sum of expected future rewards.

- agent computes a rule for selecting actions to execute

Pong example:

- Action: joystick command
- Observation: screen pixels
- Reward: game score

SLIDE 8

Model-Free Reinforcement Learning

Agent World

Agent learns a strategy for selecting actions based on experience:

- no prior model of system dynamics, i.e. no prior knowledge of how the world responds to actions
- no prior model of reward, i.e. no prior knowledge of what actions lead to reward

Pong example:

- Action: joystick command
- Observation: screen pixels
- Reward: game score
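As one concrete illustration of learning from experience alone, the sketch below uses tabular Q-learning, a standard model-free method that the slides do not name. Everything here is an assumption for illustration: the five-state chain world, the function names, and the parameter values. The learner only calls `chain_step` to collect samples and never inspects how it works.

```python
import random

N_STATES = 5          # hypothetical chain: states 0..4, reward on reaching state 4
LEFT, RIGHT = 0, 1


def chain_step(state, action):
    """World dynamics and reward; the learner only sees the returned samples."""
    next_state = max(state - 1, 0) if action == LEFT else state + 1
    done = next_state == N_STATES - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def q_learning(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Estimate action values from sampled transitions only."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy action choice; ties are broken at random.
            explore = rng.random() < epsilon
            if explore or q[state][LEFT] == q[state][RIGHT]:
                action = rng.choice([LEFT, RIGHT])
            else:
                action = LEFT if q[state][LEFT] > q[state][RIGHT] else RIGHT
            next_state, reward, done = chain_step(state, action)
            # Move the estimate toward the observed reward plus discounted value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


def greedy_policy(q):
    """Rule for selecting actions: pick the higher-valued action in each state."""
    return [RIGHT if q[s][RIGHT] > q[s][LEFT] else LEFT for s in range(N_STATES - 1)]
```

After training, `greedy_policy(q_learning())` selects `RIGHT` in every non-terminal state, which is the direction of the reward in this assumed world.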

SLIDE 9

Distinction Relative to Planning

Agent World

Agent learns a strategy for selecting actions based on a prior model:

- agent is given a model of system dynamics in advance
- agent is “told” which states/actions are rewarding or not

Pong example:

- Action: joystick command
- Observation: screen pixels
- Reward: game score
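For contrast, here is a minimal planning sketch in which the agent is handed the model up front. It uses value iteration, a standard planning method that the slides do not name, on an assumed five-state chain; `model`, `value_iteration`, and `plan` are hypothetical names. No interaction with the world is needed, because the planner can query `model` for any state and action.

```python
N_STATES = 5          # hypothetical chain: states 0..4, state 4 is terminal
LEFT, RIGHT = 0, 1
GAMMA = 0.9           # assumed discount factor


def model(state, action):
    """Known model given to the planner in advance: returns (next_state, reward)."""
    next_state = max(state - 1, 0) if action == LEFT else state + 1
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward


def backup(values, state, action):
    """One-step lookahead through the model."""
    next_state, reward = model(state, action)
    return reward + GAMMA * values[next_state]


def value_iteration(sweeps=50):
    """Compute state values by repeatedly backing up through the model."""
    values = [0.0] * N_STATES          # the terminal state keeps value 0
    for _ in range(sweeps):
        for state in range(N_STATES - 1):
            values[state] = max(backup(values, state, a) for a in (LEFT, RIGHT))
    return values


def plan(values):
    """Choose, in each non-terminal state, the action with the best lookahead."""
    return [
        RIGHT if backup(values, s, RIGHT) > backup(values, s, LEFT) else LEFT
        for s in range(N_STATES - 1)
    ]
```

Calling `plan(value_iteration())` yields `RIGHT` in every non-terminal state of this assumed chain, computed entirely from the model rather than from experience.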

SLIDE 10

RL vs Planning

When to use planning:

- when the system is easily modeled
- when the system is deterministic

When to use RL:

- when the system is hard to model
- when the system is stochastic

SLIDE 11

RL vs Planning

When to use planning:

- when the system is easily modeled
- when the system is deterministic

When to use RL:

- when the system is hard to model
- when the system is stochastic

Ultimately, RL and planning are closely related...