www.nr.no

The MOVIS project

Performance Monitoring System for Video Streaming Networks

Wolfgang Leister

April 2006

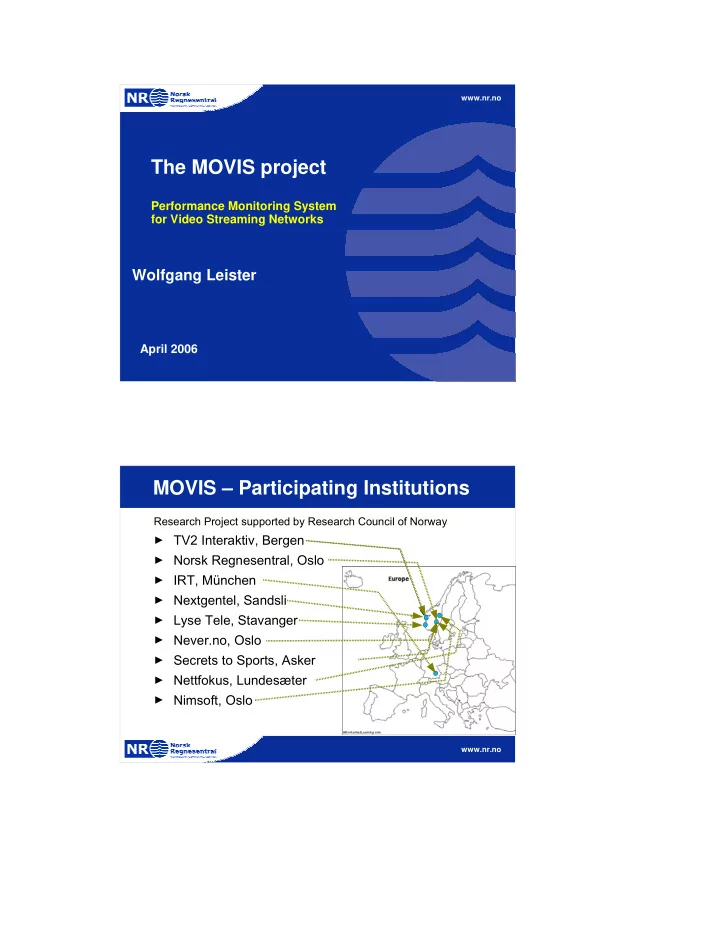

MOVIS Participating Institutions

Research project supported by the Research Council of Norway

TV2 Interaktiv, Bergen

Figure: video source and distribution. Labels: Video Source (Camera, Camera, AV Matrix), Encoders, Databases, Video File Server, Live Distrib. Server, Internet, Default Server, Provider 1, Provider 2, Provider 3, Provider ..., ISP Net.

Figure: content provider and Internet path. Labels: Content Provider (Streaming Server, Default Server, File Server), Internet, Router / Switch (three shown), DSLAM.

Figure: viewing characteristics. Labels: Router, Access point, WLAN, Home Network, One PC, Digital TV.

Figure: test methods. Each panel shows the same chain: Original Content, Encoder, Streaming Server, Transmission Network, Decoder, Processed Content.

Picture Comparison test method: Measurement System, Obj. Quality Rating.

Feature Extraction test method: Feature Extraction (on two signals), Feature Comparison, Impairment Par.

Single Ended test method: Monitoring System + Model, Impairment Par.

Figure: measurement setup. Labels: Master, Slave (three shown), Firewall (two shown), Internet, enterprise networks, www.knowyoursla.com.

Figure: MOVIS model. Chain: Original Content, Encoded Content, Cont. Prov. Network, ISP Network, User Network, User Equipment, End User. Components: Encoder, Streaming server, Network.

Inputs on the MOVIS Ancillary Channel:

Codec, parameter settings, encoder type, ...

Streaming Server type, parameter settings, ...

Networking parameters, topology, type, ...

Type, parameters ...

Viewing conditions (not part of MOVIS)

MOVIS-factor: M = MO + MA(...)

Advantage factor

Figure: subjective test layout, three panels. Each panel shows a Reference (ref) and four stimuli A, B, C and D; the position of the Hidden Reference among the stimuli differs from panel to panel.

Example: Algo. 1: WM9, CIF, 168 kbps

Figure: Windows Media 9, CIF format, all sequences. Mean Score (axis ticks 20 to 100; categories Bad, Poor, Fair, Good, Excellent) versus Total bit rate (kbps; axis ticks 400, 800, 1200). Series: Global, Skiing, Rugby, Rainman, Barcelona, each shown as Mean + CI.
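
The plot reports a mean score with a confidence interval (CI) for each sequence. As a minimal sketch of how such values could be derived from individual viewer ratings, the following assumes a normal approximation and a 95% level; the slides state neither, and the function name and the ratings are hypothetical.

```python
import math
import statistics


def mean_with_ci(scores, z=1.96):
    """Return (mean, half_width) of a normal-approximation confidence interval.

    z=1.96 gives roughly 95% coverage. This level is an assumption;
    the slides do not say which confidence level was used.
    """
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two scores")
    mean = statistics.fmean(scores)
    stdev = statistics.stdev(scores)  # sample standard deviation (n - 1)
    half_width = z * stdev / math.sqrt(n)
    return mean, half_width


# Hypothetical ratings from five viewers for one sequence at one bit rate.
ratings = [60, 70, 80, 70, 70]
mean, half = mean_with_ci(ratings)
print(f"mean = {mean:.1f}, CI = [{mean - half:.1f}, {mean + half:.1f}]")
```

With few viewers per condition, a Student t quantile in place of the fixed `z` would give a wider and more cautious interval.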