The Future of the In-Car Experience Abdelrahman Mahmoud Product - PowerPoint PPT Presentation

The Future of the In-Car Experience
Abdelrahman Mahmoud, Product Manager
Ashutosh Sanan, Computer Vision Scientist
@affectiva

Affectiva Emotion AI
Emotion recognition from face and voice powers several industries: Social Robots, Mood Tracking, Interviewing, Drug Efficacy, Content Management (video / audio), Customer Analytics, Academic Research, Banking, Telehealth, Focus Groups, Connected devices / IoT, Health & Wellness, Surveillance, Education, Market Research, Social Robotics, Recruiting, MOOCs, Legal, Mental health, Security, Telemedicine, Healthcare, Web Conferencing, Real time student feedback, Automotive, Live streaming, Video & Photo organization, Retail, Fraud Detection, Virtual Assistants, Gaming, Online education.
In market products since 2011
• 1/3 of Fortune Global 100, 1400 brands
• OEMs and Tier I suppliers
Recognized Market / AI Leader
• Spun out of MIT Media Lab
• Selected for Startup Autobahn and Partnership on AI
Built using real-world data
• 6.5M face videos from 87 countries
• 42,000 miles of driving quarterly

Affectiva Automotive AI
Driver Safety
• The Problem: Transitions in control in semi-autonomous vehicles (e.g. the L3 handoff problem). Current solutions based on steering wheel sensors are irrelevant in autonomous driving.
• Affectiva Solution: Next generation AI based system to monitor and manage driver capability for safe engagement.
Occupant Experience
• The Problem: Differentiated and monetizable in-cab experience (e.g. the L4 luxury car challenge).
• Affectiva Solution: First in-market solution for understanding occupant state and mood to enhance overall in-cab experience.

People Analytics
People Analytics: context-aware, with Emotion AI as the foundational technology.
Emotion AI
• Inputs: Facial expressions, Tone of voice, Body posture
• States: Anger, Enjoyment, Surprise, Attention, Distraction, Excitement, Drowsiness, Stress, Intoxication, Discomfort, Cognitive Load, Displeasure
In-Cab Context
• Occupant relationships
• Infotainment content
• Inanimate objects
• Cabin environment
External Context
• Weather
• Traffic
• Signs
• Pedestrians
Personal Context
• Identity
• Likes/dislikes & preferences
• Occupant state history
• Calendar
Safety
• Next generation driver monitoring
• Smart handoff
• Proactive intervention
Personalization
• Individually customized baseline
• Adaptive environment
• Personalization across vehicles
Monetization
• Differentiation among brands
• Premium content delivery
• Purchase recommendations

Affectiva approach to addressing Emotion AI complexities
• Data: Our robust and scalable data strategy enables us to acquire large and diverse data sets and to annotate these data using manual and automated approaches.
• Algorithms: Using a variety of deep learning, computer vision and speech processing approaches, we have developed algorithms to model complex and nuanced emotion and cognitive states.
• Infrastructure: Deep learning infrastructure allows for rapid experimentation and tuning of models as well as large scale data processing and model evaluation.
• Team: Our team of researchers and technologists has deep expertise in machine learning, deep learning, data science, data annotation, computer vision and speech processing.

World’s largest emotion data repository
87 countries, 6.5M faces analyzed, 3.8B facial frames
Includes people emoting on device, and while driving
Top Countries for Emotion Data (map labels, in the order they appear)
• United Kingdom 265K
• China 562K
• Japan, Germany: 61K, 148K
• USA 1,166K
• Vietnam, Mexico: 148K, 150K
• Philippines, India, Brazil: 159K, 1,363K, 194K
• Thailand 184K
• Indonesia 325K

Data Strategy
To develop a deep understanding of the state of occupants in a car, one needs large amounts of data. With this data we can develop algorithms that can sense emotions and gather people analytics in real world conditions.
Foundational proprietary data will drive value to accelerate data partner ecosystem.
Auto Data Corpus
• Spontaneous occupant data: Using Affectiva Driver Kits and Affectiva Moving Labs to collect naturalistic driver and occupant data to develop metrics that are robust to real-world conditions.
• Data partnerships: Acquire 3rd party natural in-cab data through academic and commercial partners (MIT AVT, fleet operators, ride-share companies).
• Simulated data: Collect challenging data in safe lab simulation environment to augment the spontaneous driver dataset and bootstrap algorithms (e.g. drowsiness, intoxication); multi-spectral & transfer learning.
Affectiva Confidential

Algorithms

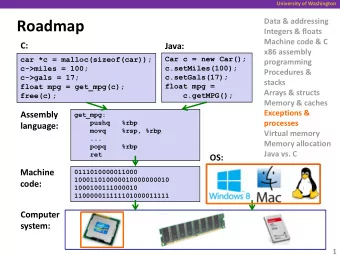

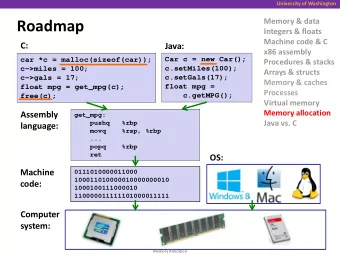

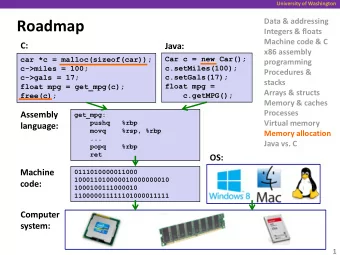

Deep learning advancements driving the automotive roadmap
The current SDK consists of deep learning networks that perform:
• Face detection (RPN + bounding boxes): given an image, detect faces
• Landmark localization (Regression + confidence): given an image + bounding box, detect and track landmarks
• Facial analysis (Multi-task CNN/RNN): detect facial expression/emotion/attributes per face
[Diagram: image → Face detection (Shared Conv., Region Proposal Network, Classification) → bounding boxes → Landmark localization (Shared Conv., Landmark estimate, Confidence, Landmark refinement) → face image → Facial analysis (Shared Conv., Temporal; outputs: Expressions, Attributes, Emotions)]

Task: Facial Action/Emotion Recognition
• Given a face, classify the corresponding visual expression/emotion occurrence.
• Many Expressions: Facial muscles generate hundreds of facial expressions/emotions.
• Multi-Attribute Classification
• Fast enough to run on mobile/embedded devices.
[Example images: Joy, Yawn, Eye Brow Raise]

Is a single image always enough?
[Animation credit: Giphy]

Information in Time
[Chart: Intensity of Expression vs. Time]
• Emotional state is a continuously evolving process over time.
• Adding temporal information makes it easier to detect highly subtle changes in facial state.
How to utilize temporal information
• Use post-processing over the static classifier output, using previous predictions and images.
• Use recurrent architectures.
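The post-processing option can be illustrated with a minimal sketch. The slide does not say which post-processing is used, so the exponential moving average below, the function name `smooth_scores`, the parameter `alpha`, and the example scores are all assumptions for illustration only.

```python
def smooth_scores(scores, alpha=0.5):
    """Exponentially smooth per-frame scores from a static classifier.

    alpha close to 1 trusts the current frame; alpha close to 0 trusts
    the history of previous predictions. (Hypothetical helper.)
    """
    smoothed = []
    prev = None
    for s in scores:
        # The first frame has no history, so it passes through unchanged.
        prev = s if prev is None else alpha * s + (1 - alpha) * prev
        smoothed.append(prev)
    return smoothed


# Made-up per-frame yawn scores with a one-frame dropout at index 2.
frame_scores = [0.1, 0.9, 0.1, 0.8, 0.9, 0.85]
print(smooth_scores(frame_scores, alpha=0.5))
```

Smoothing of this kind only damps frame-to-frame jitter in the output; unlike a recurrent architecture, it cannot learn temporal structure from the images themselves.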

Spatio-Temporal Action Recognition
[Diagram: Yawn recognition using CNN + LSTM. Temporal sequence of frames → CNN per frame (spatial feature extraction) → LSTM (learning temporal structure) → frame-level classification, with example outputs 0, 0, 0.5, 0.8]
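The data flow of a CNN + LSTM model can be sketched in plain Python. This is a toy under stated assumptions, not the SDK's network: `frame_features` is a hypothetical stand-in for the CNN, the LSTM has a single scalar hidden unit, and all weights are made-up, untrained values.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def frame_features(frame):
    """Hypothetical stand-in for the per-frame CNN: two crude features."""
    return [sum(frame) / len(frame), max(frame) - min(frame)]


def lstm_step(x, h, c, w):
    """One standard LSTM step with a scalar hidden state h and cell state c."""
    def gate(name, squash):
        wx, wh, b = w[name]
        return squash(sum(wi * xi for wi, xi in zip(wx, x)) + wh * h + b)

    i = gate("input", sigmoid)         # how much new information to write
    f = gate("forget", sigmoid)        # how much old cell state to keep
    o = gate("output", sigmoid)        # how much cell state to expose
    g = gate("candidate", math.tanh)   # proposed new cell content
    c = f * c + i * g
    h = o * math.tanh(c)
    return h, c


def classify_sequence(frames, w, w_out=1.0, b_out=0.0):
    """Return one score per frame, each conditioned on the frames before it."""
    h, c = 0.0, 0.0
    scores = []
    for frame in frames:
        h, c = lstm_step(frame_features(frame), h, c, w)
        scores.append(sigmoid(w_out * h + b_out))
    return scores


# Made-up weights: (input weights, recurrent weight, bias) per gate.
weights = {name: ([0.5, 1.0], 0.3, 0.0)
           for name in ("input", "forget", "output", "candidate")}
# Made-up "frames": short lists of pixel intensities.
frames = [[0.1, 0.1, 0.2], [0.1, 0.5, 0.9], [0.0, 0.8, 1.0]]
print(classify_sequence(frames, weights))
```

The point of the sketch is the structure: spatial features are computed independently per frame, while the recurrent state carries information forward so that each frame-level score depends on the sequence so far.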

Training Challenges & Inferences

Data challenges
Missing Frames in Sequence: during training, RNNs expect a continuous temporal sequence.
Missing facial frames
• Bad lighting
• Face out of view
• Face not visible
Missing human annotations
• Facial frames not labeled by humans
Possible Solutions
• Use shorter, fixed-length continuous sequences with no missing data
• Copy the last state of the sequence (repeat the last tracked frame)
• Mask the missing frames
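The last two options can be contrasted with a small sketch. The slides do not describe how masking is implemented, so this assumes one common interpretation: missing frames are marked with `None`, and masked time steps are excluded from the training loss. All function names and values are hypothetical.

```python
def copy_last_state(frames):
    """Fill missing frames (None) by repeating the last tracked frame.

    Frames missing before the first tracked frame stay None, since
    there is nothing to copy yet.
    """
    filled, last = [], None
    for frame in frames:
        if frame is not None:
            last = frame
        filled.append(last)
    return filled


def build_mask(frames):
    """1 where a frame is present, 0 where it is missing."""
    return [0 if frame is None else 1 for frame in frames]


def masked_mean_loss(per_frame_losses, mask):
    """Average the loss over present frames only; masked steps contribute nothing."""
    kept = [loss for loss, m in zip(per_frame_losses, mask) if m]
    return sum(kept) / len(kept) if kept else 0.0


# Made-up sequence: two frames lost (e.g. face out of view).
sequence = ["f0", None, None, "f3"]
print(copy_last_state(sequence))  # ['f0', 'f0', 'f0', 'f3']
print(build_mask(sequence))       # [1, 0, 0, 1]
print(masked_mean_loss([1.0, 9.0, 9.0, 3.0], build_mask(sequence)))  # 2.0
```

Copying presents the model with repeated frames as if they were real observations, whereas masking tells it explicitly which steps carry no information.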

Masking vs Copying last state
Results indicate that masking works better than copying the last state.
[Chart: ROC-AUC and Val Acc for "Using last state" vs. "Masking"]

How to train a Spatio-Temporal model?
Two approaches to train our model:
• Train both convolutional and recurrent filters jointly.
• Transfer learning using previously learned convolutional filters.
[Diagram: Input A → Expressions; Input B → Frozen Feature Extractors (transfer) → Yawn]
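The second approach, training on top of frozen feature extractors, can be sketched as follows. This is a toy, not the authors' training setup: `frozen_features` is a hypothetical stand-in for pretrained convolutional filters, the trainable part is a simple logistic head instead of a recurrent network, and the data is made up.

```python
import math


def frozen_features(x):
    """Stand-in for previously learned conv filters; never updated in training."""
    return [x, x * x]


def predict(head, x):
    """Score an input using the frozen features and a trainable logistic head."""
    w, b = head
    z = sum(wi * fi for wi, fi in zip(w, frozen_features(x))) + b
    return 1.0 / (1.0 + math.exp(-z))


def train_head(data, epochs=200, lr=0.5):
    """Train only the head with SGD on log loss; the extractor stays fixed."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in data:
            err = predict((w, b), x) - y  # gradient of log loss w.r.t. the logit
            feats = frozen_features(x)
            w = [wi - lr * err * fi for wi, fi in zip(w, feats)]
            b -= lr * err
    return w, b


# Made-up data: label 1 when |x| is large, 0 when x is near zero.
data = [(-1.0, 1), (-0.9, 1), (0.0, 0), (0.1, 0), (0.9, 1), (1.0, 1)]
head = train_head(data)
print(predict(head, 1.0), predict(head, 0.0))
```

Because gradients are only applied to the head, the extractor can be shared unchanged across several tasks, which is the reuse the diagram hints at.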

Transfer learning for runtime performance
Usage of transfer learning to help with the runtime performance:
• Increased runtime performance to run on mobile.
• Minimal benefit by tuning filters from scratch.
• Large real-world dataset for pretrained filters.
[Diagram: Intelligent Filter Reuse. Shared Conv. feeding Temporal, Expressions, Attributes and Emotions outputs]
[Chart: ROC-AUC and Val Acc for Fixed Weights vs. Fully Trainable]

Does temporal info always help?
[Chart: Yawn ROC-AUC Performance (Temporal vs Static)]
[Chart: Outer Brow Raiser-AU02 ROC-AUC Performance (Temporal vs Static)]
[Chart: Smile ROC-AUC Performance (Temporal vs Static)]
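For readers unfamiliar with the metric in these charts, ROC-AUC is the probability that a randomly chosen positive example is scored above a randomly chosen negative one. The sketch below computes it by direct pair counting; the labels and scores are made-up illustration values, not results from the talk.

```python
def roc_auc(labels, scores):
    """ROC-AUC by pair counting: fraction of (positive, negative) pairs
    ranked correctly, with ties counted as half."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical scores from two models on the same four labeled frames.
labels = [0, 0, 1, 1]
static_scores = [0.1, 0.4, 0.35, 0.8]
temporal_scores = [0.1, 0.3, 0.35, 0.8]
print(roc_auc(labels, static_scores))    # 0.75
print(roc_auc(labels, temporal_scores))  # 1.0
```

Comparing a static and a temporal model per expression in this way shows whether the added temporal complexity pays off for that particular metric.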

Models in Action

Key Takeaways
• Not all metrics benefit from adding complex temporal information.
• Using all the data (complete & partial sequences) definitely helps the model.
• Masking works better with partial sequences than copying last frames.
• Intelligent filter reuse makes it possible to deploy these models on mobile with real-time performance.
