SLIDE 1

Synthesizing 3D Shapes via Modeling Multi-View Depth Maps and - - PowerPoint PPT Presentation

Synthesizing 3D Shapes via Modeling Multi-View Depth Maps and - - PowerPoint PPT Presentation

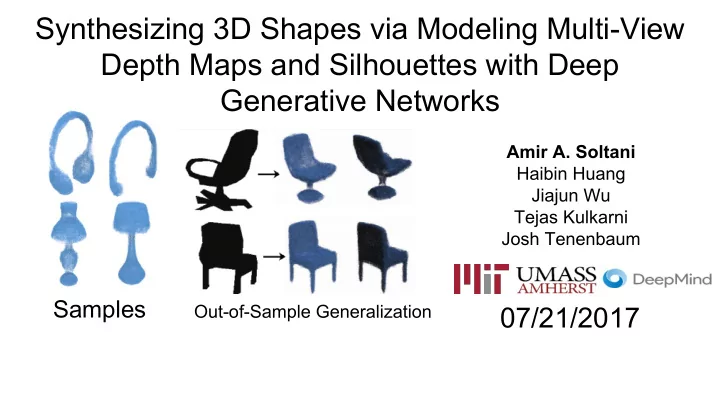

Synthesizing 3D Shapes via Modeling Multi-View Depth Maps and Silhouettes with Deep Generative Networks Amir A. Soltani Haibin Huang Jiajun Wu Tejas Kulkarni Josh Tenenbaum Samples Out-of-Sample Generalization 07/21/2017 Motivation -

SLIDE 2

SLIDE 3

Motivation - Robotics

SLIDE 4

Motivation

- Computer Vision cannot simply rely on 2D data to solve 3D problems

- We need to have good 3D representations to solve inverse problems

- A generative model for 3D is a good starting point (A lot more needed though)

- Good progress has been made in the past 2 or 3 years

- Still, the choice of 3D representation is being debated

- Each representation has advantages and disadvantages

- So far there is not a good agreement on which representation to use

SLIDE 5

Choice of Representation

Multi-view Template-based Point clouds Meshes Voxels

SLIDE 6

3D Representation - Voxels

- Computational complexity is very high (O3) if used naively

- Cannot Model High-Res Shapes

- Details can easily get lost

- Highly sparse at higher resolutions

- Cannot model regular structures easily

SLIDE 7

3D Representation - Voxels

- Directly predicting high-res voxel-based outputs is very hard

- Highest so far is 64 x 64 x 64

- One model per object category

Wu et al, NIPS 2016

SLIDE 8

3D Representation - Point Clouds

- Not a lot of work done using point clouds so far

- Things start to get mathematically-involved from here

- The choice of loss function, non-differentiability issues etc

- Not obvious how many points to have

Image courtesy: Hao Su

- Details Will Be Missing

SLIDE 9

3D Representation - Point Clouds

Su et al, CVPR 2017

SLIDE 10

3D Representation - Meshes

- Cannot directly apply out-of-the-box models on

- Mathematically Involved

- Can be seen as a graph as well

Image courtesy: Hao Su

- Need to Construct Special Kind of Kernels for CNNs

SLIDE 11

3D Representation - Template-Based (CAD)

Image courtesy: Haibin Huang

- Again, Not Able to Easily Apply Out-of-Box Models on

- Data Is Very Hard to Obtain

- Offers Compositionality Intrinsically and Explicitly

- Hard to Model Shapes Never Seen Before

- Might be a Good Option for Learning Functionalities

SLIDE 12

3D Representation - Multi-View

- Multi-view representation is very lightweight

- Can easily apply out-of-the-box CNN models on

- Offers Flexibility (Depth Maps) and Eases the Computation Significantly

- Although 2D, Still Explicitly Models 3D Shapes

- Allows Generating Hi-Res, Detailed, Novel Objects

- Without the machinery required for new voxel-based models

- Not Mathematically Involved

- More Intuitive

SLIDE 13

Motivations

- Synthesize/Generate Hi-Res, Detailed and Novel Shapes

- Use Some Sort of a Representation Whose Data is Easily Obtainable

- No Doubt that it is Very Easy to Obtain 2D images or RGBD or just D

- Have Out-of-Sample Generalizability

- A Step Forward Towards Obtaining 3D Concepts Efficiently to Solve Inverse Vision

Problems

- Model 3D via 2D (inspired by biological vision)

- Share the Same Representations For All Categories

SLIDE 14

Pipeline - Data Set

- Used ShapeNet Core

- Contains Aligned, Normalized Shapes

- ~37k for train, ~3k for test

- Normalized and Aligned

- Render 20 views of depth maps

- Camera Positions Fixed

SLIDE 15

Pipeline - Architectures

- Train 3 Different VAE Models

- AllVPNet: Train with All 20 Views

- DropoutNet: Train with 2-5 Randomly Chosen Views

- SingleVPNet: Train with 1 Randomly Chosen View

- Z Layer Has 100 Nodes for Unconditional and 40 for Conditional

- L1 Loss Function is Used During Training

SLIDE 16

Pipeline - Architectures

L1 L1

SLIDE 17

Pipeline - 3D Reconstruction

- Deterministic Function is Used to Generate the Final 3D Point Cloud

- Number of Points is Between ~30k to ~400k depending on Shape Complexity

- Not fixed!

SLIDE 18

Results - Sampling

Random Sampling

SLIDE 19

Results - Sampling

Random Samples’ Nearest Neighbors

Training set Reconstruction Random Sample

SLIDE 20

Results - Sampling

Random Samples

SLIDE 21

Results - Sampling

More Random Samples

SLIDE 22

Results - Sampling

More Random Samples

SLIDE 23

Results Samples

Conditional Sampling

SLIDE 24

Results - Sampling

Conditional Sampling

SLIDE 25

Results - Sampling

Conditional Samples

SLIDE 26

Results - Conditional Sampling

Nearest Neighbors

Training set Reconstruction

- Cond. Sample

Training set Reconstruction

- Cond. Sample

SLIDE 27

Results - Conditional Sampling

Nearest Neighbors

Training set Reconstruction

- Cond. Sample

SLIDE 28

Results - Reconstruction

SLIDE 29

Results - Classification

Classification, Reconstruction Error

SLIDE 30

Results - Reconstruction

Out-of-Sample Generalization

- Put Silhouettes/Depth Maps into 224 x 224 canvases

- Camera Pose Not Fixed

- Different Size and Orientation

- NYUD and Silhouettes from the Internet

- Images Scaled to Fit

- The Rest of The Results Are All Obtained Through SingleVPNet Model

SLIDE 31

Results - Reconstruction

Out-of-Sample Generalization (NYUD)

SLIDE 32

Results - Reconstruction

Out-of-Sample Generalization (Uncond. SinlgeVPNet - NYUD Silhouettes)

SLIDE 33

Results - Reconstruction

Out-of-Sample Generalization (Uncond. SinlgeVPNet - NYUD Silhouettes)

SLIDE 34

Results - Reconstruction

Out-of-Sample Generalization (Silhouettes From Web)

SLIDE 35

Results - Representation Analysis

Consistent Representation

- Naturally Would Like to Get The Same Shape Across All Views

- Intuitively-Thinking, Uncertainty is Actually Part of Consistency

- Obtaining Good Priors Is Important!

SLIDE 36

Results - Analysis

Consistent Representation

SLIDE 37

Results - Analysis

Consistent Representation

SLIDE 38

Results - Analysis

Priors Matter!

SLIDE 39

Results - Analysis

What 3D shape is this?

SLIDE 40

Results - Analysis

SLIDE 41

Results - Analysis

- Model’s Prediction: “airplane”

- Obtaining good, inductive biases is hard but helps a lot!

- Behaves like a hierarchical prior

- Quite meaningful and intuitive

SLIDE 42

Results - Analysis

Implicitly Learning About Parts

SLIDE 43

Results - Analysis

Implicitly Learning About Parts

SLIDE 44

Concolusion

- We showed an effective paradigm for learning 3D shapes using multiview representation

- Samples obtained look realistic, novel and detailed

- Out-of-sample generalization is attainable via good generative models + meaningful priors

- Hierarchical priors can effectively induce enough bias to generate meaningful results

- Parts can be learned implicitly. Hard to explicitly learn parts for real-word tasks

- Strong inductive biases helps get meaningful 3D shapes on highly occluded inputs

SLIDE 45

Future Directions and Challenges

- Current data sets are not sufficient to learn about 3D vision

- 3D shapes are the end product of an underlying process: physics

- Current data-driven approaches do get us to where we want to be

- 3D shapes are composed of things like material, mass, etc

- To meaningfully interact with 3D shapes we need to do more!

- Learning fast, and accurate physics simulators might be a good starting point

SLIDE 46

Future Directions and Challenges

Thank you!

SLIDE 47

Results - Conditional Sampling

Conditional Samples

SLIDE 48

Results - Classification, Recon. Err.

- The Goal Is Not to do Classification or Recon. But to Have Hierarchical Priors

- Strong Regularization

SLIDE 49

Results - IoU

IoU numbers for ShapeNet Core

SLIDE 50

Results - Conditional Sampling

More Conditional Samples

SLIDE 51

Results Conditional Sampling

More Conditional Samples

SLIDE 52

Results - Analysis

What about this?

SLIDE 53

Results - Analysis

SLIDE 54

Results - Analysis

SLIDE 55