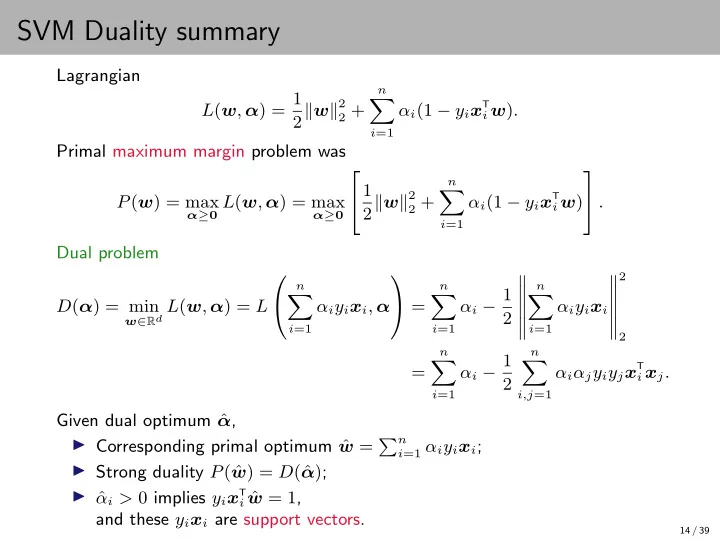

SVM Duality summary

Lagrangian:

$$L(w, \alpha) = \frac{1}{2}\|w\|_2^2 + \sum_{i=1}^{n} \alpha_i \left(1 - y_i x_i^{\mathsf T} w\right).$$

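Primal maximum margin problem was

$$P(w) = \max_{\alpha \ge 0} L(w, \alpha) = \max_{\alpha \ge 0} \left[ \frac{1}{2}\|w\|_2^2 + \sum_{i=1}^{n} \alpha_i \left(1 - y_i x_i^{\mathsf T} w\right) \right].$$

Dual problem:

$$
\begin{aligned}
D(\alpha) = \min_{w \in \mathbb{R}^d} L(w, \alpha)
&= L\left(\sum_{i=1}^{n} \alpha_i y_i x_i,\ \alpha\right) \\
&= \sum_{i=1}^{n} \alpha_i - \frac{1}{2} \left\| \sum_{i=1}^{n} \alpha_i y_i x_i \right\|_2^2 \\
&= \sum_{i=1}^{n} \alpha_i - \frac{1}{2} \sum_{i,j=1}^{n} \alpha_i \alpha_j y_i y_j x_i^{\mathsf T} x_j.
\end{aligned}
$$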
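The closed form of the dual can be checked numerically. The sketch below is illustrative only: the random data and the helper names `lagrangian` and `dual_objective` are assumptions, not part of the slides. It evaluates the Lagrangian at $w = \sum_i \alpha_i y_i x_i$, compares it with the Gram-matrix expression for $D(\alpha)$, and confirms that perturbing $w$ only increases $L(w, \alpha)$.

```python
import numpy as np

def lagrangian(w, alpha, X, y):
    # L(w, alpha) = 0.5 * ||w||^2 + sum_i alpha_i * (1 - y_i * x_i^T w)
    margins = y * (X @ w)
    return 0.5 * w @ w + alpha @ (1.0 - margins)

def dual_objective(alpha, X, y):
    # D(alpha) = sum_i alpha_i - 0.5 * sum_{i,j} alpha_i alpha_j y_i y_j x_i^T x_j
    Z = y[:, None] * X          # row i is y_i * x_i
    G = Z @ Z.T                 # G[i, j] = y_i y_j x_i^T x_j
    return alpha.sum() - 0.5 * alpha @ G @ alpha

# Hypothetical random data, used only to exercise the formulas.
rng = np.random.default_rng(0)
n, d = 6, 3
X = rng.normal(size=(n, d))
y = rng.choice([-1.0, 1.0], size=n)
alpha = rng.uniform(0.0, 1.0, size=n)

# Setting the gradient of L in w to zero gives w = sum_i alpha_i y_i x_i.
w_star = (alpha * y) @ X

closed_form = dual_objective(alpha, X, y)
at_minimizer = lagrangian(w_star, alpha, X, y)

# L is a strongly convex quadratic in w, so any perturbation increases it.
perturbed = [
    lagrangian(w_star + 0.1 * rng.normal(size=d), alpha, X, y)
    for _ in range(5)
]

print(np.isclose(closed_form, at_minimizer))
print(all(p > at_minimizer for p in perturbed))
```

Both checks should print `True` for any $\alpha$, since the minimization over $w$ is unconstrained and the identity does not depend on $\alpha \ge 0$.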

Given dual optimum $\hat{\alpha}$:

- Corresponding primal optimum $\hat{w} = \sum_{i=1}^{n} \hat{\alpha}_i y_i x_i$;
- Strong duality: $P(\hat{w}) = D(\hat{\alpha})$;
- $\hat{\alpha}_i > 0$ implies $y_i x_i^{\mathsf T} \hat{w} = 1$, and these $y_i x_i$ are support vectors.
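These three properties can be observed on a small example. The sketch below is not the method used in the lecture: it assumes projected gradient ascent as the dual solver, and the three-point dataset and the helper `solve_dual` are hypothetical, chosen so that the optimum can be worked out by hand as $\hat{w} = (1, 1)$ and $\hat{\alpha} = (1, 1, 0)$.

```python
import numpy as np

def solve_dual(X, y, iters=2000):
    # Maximize D(alpha) = sum(alpha) - 0.5 * alpha^T G alpha subject to alpha >= 0
    # by projected gradient ascent (an assumed solver, chosen for brevity).
    Z = y[:, None] * X                         # row i is y_i * x_i
    G = Z @ Z.T
    step = 1.0 / np.linalg.eigvalsh(G).max()   # 1 / (largest eigenvalue of G)
    alpha = np.zeros(len(y))
    for _ in range(iters):
        grad = 1.0 - G @ alpha                 # gradient of D at alpha
        alpha = np.maximum(0.0, alpha + step * grad)  # project onto alpha >= 0
    return alpha

# Hypothetical toy data: y_i * x_i equals (1, 0), (0, 1), (2, 2).
X = np.array([[1.0, 0.0], [0.0, -1.0], [2.0, 2.0]])
y = np.array([1.0, -1.0, 1.0])

alpha_hat = solve_dual(X, y)
w_hat = (alpha_hat * y) @ X                    # primal optimum from the dual optimum
margins = y * (X @ w_hat)                      # y_i * x_i^T w_hat

dual_value = alpha_hat.sum() - 0.5 * w_hat @ w_hat
# For a feasible w (all margins >= 1), P(w) reduces to 0.5 * ||w||^2.
primal_value = 0.5 * w_hat @ w_hat

support = np.flatnonzero(alpha_hat > 1e-8)     # indices with alpha_i > 0

print("alpha_hat:", np.round(alpha_hat, 6))
print("w_hat:", np.round(w_hat, 6))
print("margins:", np.round(margins, 6))
print("primal - dual:", abs(primal_value - dual_value))
print("support indices:", support.tolist())
```

On this data the first two points sit exactly on the margin and carry positive $\hat{\alpha}_i$, while the third has margin 4 and $\hat{\alpha}_3 = 0$. For larger problems a dedicated QP solver would be a more robust choice than this fixed-step iteration.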
