Statistics and the Scientific Study of Language

What do they have to do with each other? Mark Johnson

Brown UniversityESSLLI 2005

Outline

Why Statistics? Learning probabilistic context-free grammars Factoring learning into simpler components The Janus-faced nature of computational linguistics Conclusion

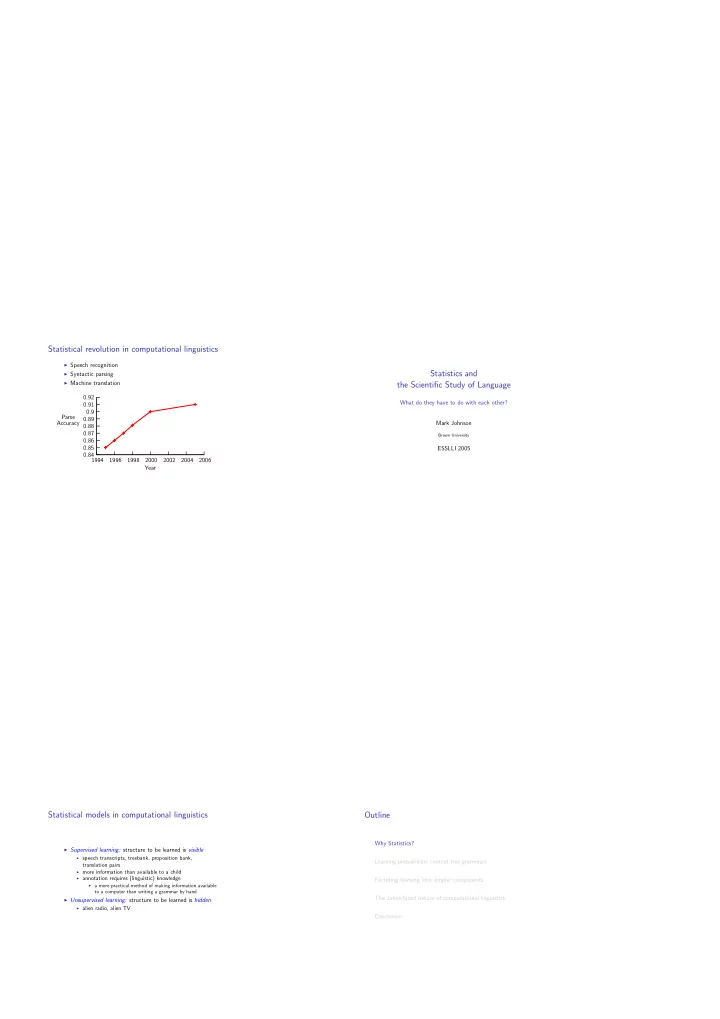

Statistical revolution in computational linguistics

◮ Speech recognition ◮ Syntactic parsing ◮ Machine translationYear Parse Accuracy 2006 2004 2002 2000 1998 1996 1994 0.92 0.91 0.9 0.89 0.88 0.87 0.86 0.85 0.84

Statistical models in computational linguistics

◮ Supervised learning: structure to be learned is visible ◮ speech transcripts, treebank, proposition bank,translation pairs

◮ more information than available to a child ◮ annotation requires (linguistic) knowledge ◮ a more practical method of making information availableto a computer than writing a grammar by hand

◮ Unsupervised learning: structure to be learned is hidden ◮ alien radio, alien TV