Statistical Natural Language Processing

Artificial neural networks & deep learning Çağrı Çöltekin

University of Tübingen Seminar für Sprachwissenschaft

Summer Semester 2018

Preliminaries ANNs Deep ANNs CNNs RNNs Autoencoders

Artificial neural networks

- Artificial neural networks (ANNs) are machine learning models inspired by biological neural networks

- ANNs are powerful non-linear models

- Power comes with a price: there are no guarantees of finding a global minimum of the error function

- ANNs have been used in ML, AI, and cognitive science since the 1950s – with some ups and downs

- Currently they are the driving force behind the popular ‘deep learning’ methods

Ç. Çöltekin, SfS / University of Tübingen Summer Semester 2018 1 / 72 Preliminaries ANNs Deep ANNs CNNs RNNs Autoencoders

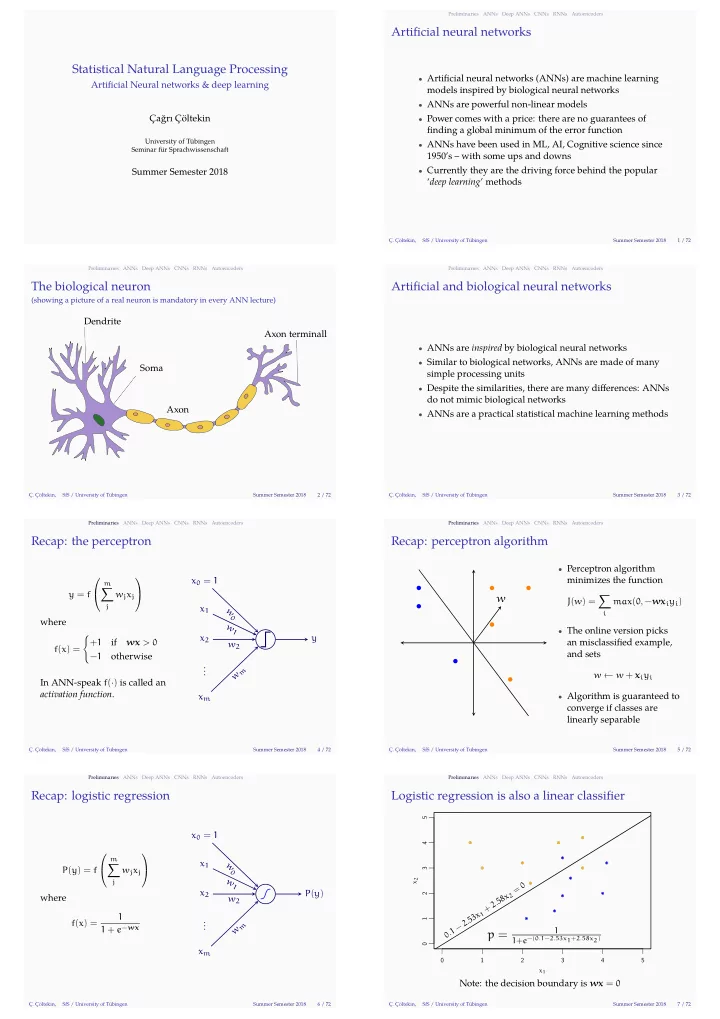

The biological neuron

(showing a picture of a real neuron is mandatory in every ANN lecture)

(Figure labels: dendrite, soma, axon, axon terminal)


Artificial and biological neural networks

- ANNs are inspired by biological neural networks

- Similar to biological networks, ANNs are made of many simple processing units

- Despite the similarities, there are many differences: ANNs do not mimic biological networks

- ANNs are a practical statistical machine learning method


Recap: the perceptron

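$$y = f\left(\sum_{j}^{m} w_j x_j\right) \quad \text{where} \quad f(x) = \begin{cases} +1 & \text{if } wx > 0 \\ -1 & \text{otherwise} \end{cases}$$

In ANN-speak f(·) is called an activation function.

(Figure: a single unit with inputs x_1, x_2, ..., x_m and bias input x_0 = 1, weights w_0, w_1, w_2, ..., w_m, and output y)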
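The perceptron's forward computation can be sketched in a few lines of NumPy. This is a minimal illustration, not code from the lecture: the function names `sign_activation` and `perceptron_predict` and the example weights and input are hypothetical, and the input vector is assumed to already contain the bias input x_0 = 1.

```python
import numpy as np

def sign_activation(z):
    """Activation f: +1 where z > 0, -1 otherwise."""
    return np.where(z > 0, 1, -1)

def perceptron_predict(w, x):
    """Compute y = f(sum_j w_j x_j).

    Assumes x already includes the bias input x_0 = 1,
    so w[0] plays the role of w_0.
    """
    return int(sign_activation(np.dot(w, x)))

# Hypothetical weights (w_0, w_1, w_2) and input (x_0 = 1, x_1, x_2)
w = np.array([-1.0, 2.0, 0.5])
x = np.array([1.0, 1.0, -1.0])

# Weighted sum is -1 + 2 - 0.5 = 0.5, which is positive
y = perceptron_predict(w, x)
print(y)
```

Swapping `sign_activation` for another function is all it takes to change the unit's activation, which is why the activation function is treated as a separate component.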


Recap: perceptron algorithm

- The perceptron algorithm minimizes the function $J(w) = \sum_i \max(0, -w x_i y_i)$

- The online version picks a misclassified example, and sets $w \leftarrow w + x_i y_i$

- The algorithm is guaranteed to converge if the classes are linearly separable
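The online update rule above can be sketched as follows. This is an illustrative sketch under stated assumptions: the function names, the toy data, the zero initialization, and the `max_epochs` cap are all hypothetical, and an example lying exactly on the boundary (w·x = 0) is treated as misclassified so that training can start from w = 0.

```python
import numpy as np

def perceptron_loss(w, X, y):
    """J(w) = sum_i max(0, -w.x_i y_i)."""
    return float(np.sum(np.maximum(0.0, -(X @ w) * y)))

def train_perceptron(X, y, max_epochs=100):
    """Online perceptron: sweep over the data, updating on each mistake.

    X has one example per row, with a leading bias column of ones.
    y holds labels in {-1, +1}.
    """
    w = np.zeros(X.shape[1])
    for _ in range(max_epochs):
        updated = False
        for x_i, y_i in zip(X, y):
            # Assumption: w.x_i y_i <= 0 counts as a mistake
            if np.dot(w, x_i) * y_i <= 0:
                w = w + x_i * y_i  # w <- w + x_i y_i
                updated = True
        if not updated:  # a full pass without mistakes: converged
            break
    return w

# Hypothetical, linearly separable toy data (first column is x_0 = 1)
X = np.array([[1.0, 2.0, 3.0],
              [1.0, 3.0, 3.5],
              [1.0, -1.0, -2.0],
              [1.0, -2.0, -1.0]])
y = np.array([1, 1, -1, -1])

w = train_perceptron(X, y)
print(w, perceptron_loss(w, X, y))
```

The `max_epochs` cap matters only when the classes are not linearly separable, in which case the loop would otherwise never stop.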


Recap: logistic regression

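$$P(y) = f\left(\sum_{j}^{m} w_j x_j\right) \quad \text{where} \quad f(x) = \frac{1}{1 + e^{-wx}}$$

(Figure: a single unit with inputs x_1, x_2, ..., x_m and bias input x_0 = 1, weights w_0, w_1, w_2, ..., w_m, and output P(y))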
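Compared with the perceptron, only the activation function changes: the weighted sum is passed through the logistic function instead of a threshold. A minimal sketch, assuming hypothetical function names, weights, and input, with the bias input x_0 = 1 included in the input vector:

```python
import numpy as np

def logistic(z):
    """Logistic (sigmoid) function: 1 / (1 + e^{-z})."""
    return 1.0 / (1.0 + np.exp(-z))

def predict_proba(w, x):
    """P(y) = f(sum_j w_j x_j), with x including the bias input x_0 = 1."""
    return float(logistic(np.dot(w, x)))

# Hypothetical weights (w_0, w_1, w_2) and input (x_0 = 1, x_1, x_2)
w = np.array([-1.0, 2.0, 0.5])
x = np.array([1.0, 1.0, -1.0])

# Weighted sum is 0.5, so the probability is a little above one half
p = predict_proba(w, x)
print(p)
```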


Logistic regression is also a linear classifier

(Figure: plot over x_1 and x_2, each axis ticked from 1 to 5, with the line $0.1 - 2.53x_1 + 2.58x_2 = 0$)

$$p = \frac{1}{1 + e^{-(0.1 - 2.53x_1 + 2.58x_2)}}$$

Note: the decision boundary is wx = 0
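A quick numerical check makes the note concrete: on the line where the weighted sum is zero the model outputs p = 0.5, and the prediction flips from one side to the other. The sketch below uses the weights from the slide (0.1, -2.53, 2.58); the function names and the chosen value x_1 = 3 are hypothetical.

```python
import numpy as np

# Weights from the slide: w_0 = 0.1, w_1 = -2.53, w_2 = 2.58
w = np.array([0.1, -2.53, 2.58])

def prob(x1, x2):
    """p = 1 / (1 + e^{-(w_0 + w_1 x_1 + w_2 x_2)})."""
    z = w[0] + w[1] * x1 + w[2] * x2
    return 1.0 / (1.0 + np.exp(-z))

def boundary_x2(x1):
    """Solve w_0 + w_1 x_1 + w_2 x_2 = 0 for x_2."""
    return -(w[0] + w[1] * x1) / w[2]

x1 = 3.0                          # arbitrary point on the x_1 axis
x2_on = boundary_x2(x1)           # x_2 value that lies on the boundary
p_on = prob(x1, x2_on)            # approximately 0.5
p_above = prob(x1, x2_on + 1.0)   # above the line: p > 0.5 because w_2 > 0
p_below = prob(x1, x2_on - 1.0)   # below the line: p < 0.5
print(p_on, p_above, p_below)
```

Because the boundary is a straight line in the input space, the classifier is linear even though the probability itself is a non-linear function of the inputs.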
