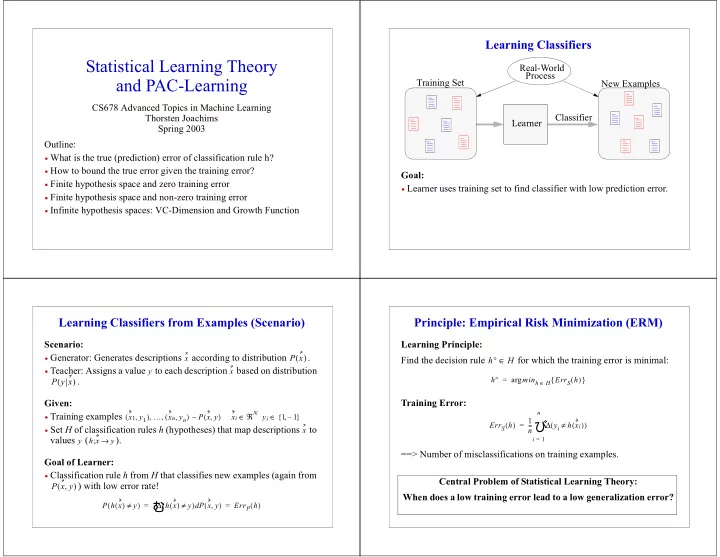

StatisticalLearningTheory andPAC-Learning

CS678AdvancedTopicsinMachineLearning ThorstenJoachims Spring2003 Outline:

- Whatisthetrue(prediction)errorofclassificationruleh?

- Howtoboundthetrueerrorgiventhetrainingerror?

- Finitehypothesisspaceandzerotrainingerror

- Finitehypothesisspaceandnon-zerotrainingerror

- Infinitehypothesisspaces:VC-DimensionandGrowthFunction

LearningClassifiers

Goal:

- Learnerusestrainingsettofindclassifierwithlowpredictionerror.

TrainingSet NewExamples Learner Classifier Real-World Process

LearningClassifiersfromExamples(Scenario)

Scenario:

- Generator:Generatesdescriptions accordingtodistribution

.

- Teacher:Assignsavalue toeachdescription basedondistribution

. Given:

- Trainingexamples

- SetHofclassificationrulesh(hypotheses)thatmapdescriptions to

values ( ). GoalofLearner:

- ClassificationrulehfromHthatclassifiesnewexamples(againfrom

)withlowerrorrate!

x

P x ( )

y x

P y x ( )

x1 y1 , ( ) … xn yn , ( ) , , P x y , ( ) xi ℜN y ∈

i

∼ 1 1 – { , } ∈ x y h x y → ;

P x y , ( )

P h x ( ) y ≠ ( ) ∆ h x ( ) y ≠ ( ) P x y , ( ) d

- ErrP h

( ) = =

Principle:EmpiricalRiskMinimization(ERM)

LearningPrinciple: Findthedecisionrule forwhichthetrainingerrorisminimal: TrainingError: ==>Numberofmisclassificationsontrainingexamples.

h° H ∈

h° minh

H ∈

ErrS h ( ) { } arg = ErrS h ( ) 1 n

- yi

h xi ( ) ≠ ( ) ∆

i 1 = n

- =