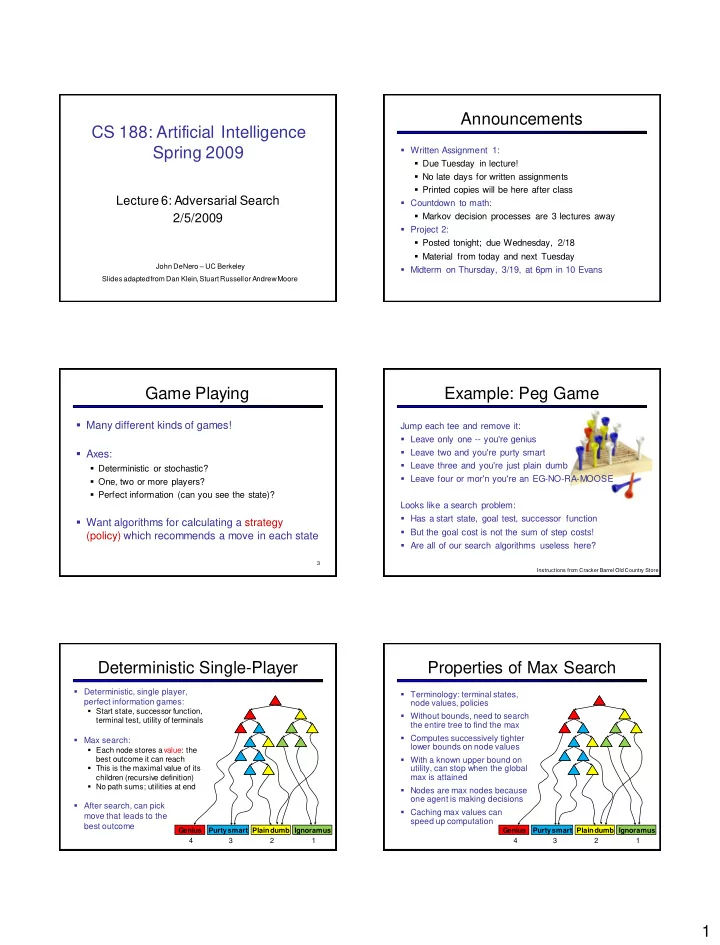

CS 188: Artificial Intelligence

Spring 2009

Lecture 6: Adversarial Search 2/5/2009

John DeNero – UC Berkeley

Slides adapted from Dan Klein, Stuart Russell or Andrew Moore

Announcements

- Written Assignment 1:

  - Due Tuesday in lecture!

  - No late days for written assignments

  - Printed copies will be here after class

- Countdown to math:

  - Markov decision processes are 3 lectures away

- Project 2:

  - Posted tonight; due Wednesday, 2/18

  - Material from today and next Tuesday

- Midterm on Thursday, 3/19, at 6pm in 10 Evans

Game Playing

- Many different kinds of games!

- Axes:

  - Deterministic or stochastic?

  - One, two or more players?

  - Perfect information (can you see the state)?

- Want algorithms for calculating a strategy (policy) which recommends a move in each state

Example: Peg Game

Jump each tee and remove it:

- Leave only one -- you're genius

- Leave two and you're purty smart

- Leave three and you're just plain dumb

- Leave four or mor'n you're an EG-NO-RA-MOOSE

Looks like a search problem:

- Has a start state, goal test, successor function

- But the goal cost is not the sum of step costs!

- Are all of our search algorithms useless here?

Instructions from Cracker Barrel Old Country Store
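The search-problem view of the peg game can be sketched in code. This is a minimal illustration, not the course's implementation: it assumes the common 15-hole triangular board (the slide does not state the board size), and the names `HOLES`, `start_state`, `successors`, `is_terminal`, and `utility` are hypothetical. The mapping from pegs left to a score of 4, 3, 2, or 1 is also an assumption based on the scoring instructions above.

```python
# Holes are (row, col) pairs on a triangular board with 5 rows (assumed size).
HOLES = frozenset((r, c) for r in range(5) for c in range(r + 1))

# The six jump directions on a triangular grid.
DIRECTIONS = [(0, 1), (0, -1), (1, 0), (-1, 0), (1, 1), (-1, -1)]


def start_state(empty=(0, 0)):
    """Every hole holds a peg except one (the top hole by default)."""
    return HOLES - {empty}


def successors(pegs):
    """Return (move, next_state) pairs for every legal jump."""
    result = []
    for (r, c) in sorted(pegs):
        for dr, dc in DIRECTIONS:
            over = (r + dr, c + dc)          # peg being jumped
            dest = (r + 2 * dr, c + 2 * dc)  # landing hole
            if over in pegs and dest in HOLES and dest not in pegs:
                next_state = (pegs - {(r, c), over}) | {dest}
                result.append((((r, c), dest), next_state))
    return result


def is_terminal(pegs):
    """The game ends when no jump is possible."""
    return not successors(pegs)


def utility(pegs):
    """Assumed scoring: 1 peg left -> 4, 2 -> 3, 3 -> 2, 4 or more -> 1."""
    return max(1, 5 - len(pegs))
```

Note that the score depends only on the final state, which is why path-cost search algorithms do not apply directly.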

Deterministic Single-Player

- Deterministic, single player, perfect information games:

  - Start state, successor function, terminal test, utility of terminals

- Max search:

  - Each node stores a value: the best outcome it can reach

  - This is the maximal value of its children (recursive definition)

  - No path sums; utilities at end

- After search, can pick move that leads to the best outcome
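The recursive definition of max search can be sketched as follows. This is a minimal illustration on a made-up toy tree; `TREE`, `UTILITY`, `max_value`, and `best_move` are hypothetical names, and the utilities are invented for the example.

```python
# Toy game tree (assumed for illustration): state -> list of (move, child).
TREE = {
    "root": [("left", "A"), ("right", "B")],
    "A": [("left", "a1"), ("right", "a2")],
    "B": [("left", "b1"), ("right", "b2")],
}

# Utilities exist only at terminal states; there are no step costs.
UTILITY = {"a1": 2, "a2": 3, "b1": 4, "b2": 1}


def successors(state):
    return TREE.get(state, [])


def is_terminal(state):
    return state in UTILITY


def max_value(state):
    """Value of a node: the best terminal utility reachable from it."""
    if is_terminal(state):
        return UTILITY[state]
    return max(max_value(child) for _, child in successors(state))


def best_move(state):
    """Pick the move whose child has the highest value."""
    move, _ = max(successors(state), key=lambda mc: max_value(mc[1]))
    return move


print(max_value("root"))  # 4
print(best_move("root"))  # right
```

Here `best_move` recomputes child values for clarity; a practical version would reuse the values found during the search.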

[Figure: search tree whose terminal outcomes are labeled Genius, Purtysmart, Plain dumb, and Ignoramus, with utilities 4, 3, 2, 1]

Properties of Max Search

- Terminology: terminal states, node values, policies

- Without bounds, need to search the entire tree to find the max

- Computes successively tighter lower bounds on node values

- With a known upper bound on utility, can stop when the global max is attained

- Nodes are max nodes because one agent is making decisions

- Caching max values can speed up computation
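Two of the properties above, stopping early under a known upper bound and caching node values, can be sketched together. This is an illustrative sketch on a made-up graph in which one state (`"shared"`) is reachable along two paths; `GRAPH`, `UTILITY`, and the `evaluated` list that records which terminals were examined are all hypothetical.

```python
# Toy state graph (assumed): "shared" is reachable from both "a" and "b".
GRAPH = {
    "s": ["a", "b"],
    "a": ["t1", "shared"],
    "b": ["shared", "t2"],
    "shared": ["t3", "t4"],
}
UTILITY = {"t1": 1, "t2": 2, "t3": 4, "t4": 3}


def max_value(state, upper_bound=float("inf"), cache=None, evaluated=None):
    """Max search with a value cache and an optional utility upper bound."""
    cache = {} if cache is None else cache
    evaluated = [] if evaluated is None else evaluated
    if state in cache:
        return cache[state]          # reuse a previously computed node value
    if state in UTILITY:
        evaluated.append(state)
        value = UTILITY[state]
    else:
        value = float("-inf")        # running lower bound on this node's value
        for child in GRAPH[state]:
            value = max(value, max_value(child, upper_bound, cache, evaluated))
            if value >= upper_bound:
                break                # nothing can beat the known maximum
    cache[state] = value
    return value


full, bounded = [], []
print(max_value("s", evaluated=full), full)                       # 4 ['t1', 't3', 't4', 't2']
print(max_value("s", upper_bound=4, evaluated=bounded), bounded)  # 4 ['t1', 't3']
```

Without a bound, every terminal is examined once (the cache prevents re-expanding `"shared"`). With the bound set to the best possible utility, the search stops as soon as a terminal with that utility is found.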
