SLIDE 1 1

Sorting integer arrays: security, speed, and verification

2

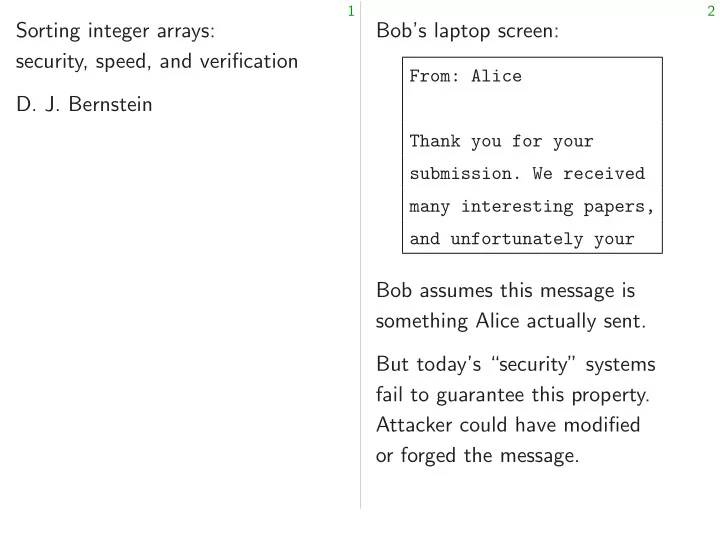

Bob’s laptop screen:

From: Alice Thank you for your

many interesting papers, and unfortunately your

Bob assumes this message is something Alice actually sent. But today’s “security” systems fail to guarantee this property. Attacker could have modified

SLIDE 2 1

rting integer arrays: y, speed, and verification Bernstein

SLIDE 7

Trusted computing base (TCB)

TCB: portion of computer system that is responsible for enforcing the users’ security policy.

Security policy for this talk: If message is displayed on Bob’s screen as “From: Alice” then message is from Alice.

If TCB works correctly, then message is guaranteed to be from Alice, no matter what the rest of the system does.

SLIDE 14

Examples of attack strategies:

1. Attacker uses buffer overflow in a device driver to control Linux kernel on Alice’s laptop.

2. Attacker uses buffer overflow in a web browser to control disk files on Bob’s laptop.

Device driver is in the TCB. Web browser is in the TCB. CPU is in the TCB. Etc.

Massive TCB has many bugs, including many security holes. Any hope of fixing this?

SLIDE 22

Classic security strategy:

Rearchitect computer systems to have a much smaller TCB. Carefully audit the TCB.

e.g. Bob runs many VMs:

- VM A: Alice data
- VM C: Charlie data
- · · ·

TCB stops each VM from touching data in other VMs.

Browser in VM C isn’t in TCB. Can’t touch data in VM A, if TCB works correctly.

Alice also runs many VMs.

SLIDE 26

Cryptography

How does Bob’s laptop know that incoming network data is from Alice’s laptop?

Cryptographic solution: Message-authentication codes.

[Diagram, message delivered intact: Alice’s message, k, authenticated message, untrusted network, authenticated message, k, Alice’s message]

[Diagram, message modified in transit: Alice’s message, k, authenticated message, untrusted network, modified message, k, “Alert: forgery!”]
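The message-authentication flow above can be sketched with Python's standard `hmac` module. This is a minimal illustration, not the construction used in the talk: the choice of HMAC-SHA256, the 32-byte tag appended to the message, and the function names `authenticate` and `verify` are all assumptions made for the example.

```python
import hashlib
import hmac

TAG_LENGTH = 32  # HMAC-SHA256 output size in bytes (assumed MAC for this sketch)


def authenticate(key: bytes, message: bytes) -> bytes:
    """Alice's side: append a tag computed from the shared secret k."""
    tag = hmac.new(key, message, hashlib.sha256).digest()
    return message + tag


def verify(key: bytes, authenticated: bytes) -> bytes:
    """Bob's side: return the message, or raise if the tag does not match."""
    if len(authenticated) < TAG_LENGTH:
        raise ValueError("Alert: forgery!")
    message, tag = authenticated[:-TAG_LENGTH], authenticated[-TAG_LENGTH:]
    expected = hmac.new(key, message, hashlib.sha256).digest()
    # compare_digest avoids an early exit at the first mismatching byte.
    if not hmac.compare_digest(tag, expected):
        raise ValueError("Alert: forgery!")
    return message
```

If the network delivers the authenticated message unchanged, `verify` returns Alice's message. If any byte is modified in transit, `verify` raises `ValueError("Alert: forgery!")`, matching the second diagram.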

SLIDE 37

Important for Alice and Bob to share the same secret k. What if attacker was spying on their communication of k?

Solution 1: Public-key encryption.

[Diagram, labels recovered from the slide: k; private key a; public key aG; network; network; public key aG; k]

Solution 2: Public-key signatures.

[Diagram, labels recovered from the slide: m; signed message; network; network; signed message; m]

No more shared secret k but Alice still has secret a. Cryptography requires TCB to protect secrecy of keys, even if user has no other secrets.
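The slide's notation (private key a, public key aG) is elliptic-curve style. As a toy sketch only, the code below substitutes modular exponentiation, so `pow(G, a, P)` stands in for aG, and derives k Diffie-Hellman style. The modulus `P`, base `G`, and the names `keypair` and `shared_key` are illustrative assumptions, not parameters from the talk, and this is not a vetted scheme.

```python
import hashlib
import secrets

P = 2**255 - 19  # a prime modulus, chosen here only for illustration
G = 2            # hypothetical base; stands in for the point G on the slide


def keypair():
    """Return (private key a, public value playing the role of aG)."""
    a = secrets.randbelow(P - 2) + 1  # 1 <= a <= P - 2
    return a, pow(G, a, P)


def shared_key(own_private: int, other_public: int) -> bytes:
    """Derive k from one side's private key and the other side's public key."""
    shared = pow(other_public, own_private, P)
    return hashlib.sha256(shared.to_bytes(32, "big")).digest()
```

Only the public values cross the network. Each side combines its own private key with the other side's public value and arrives at the same k, so an attacker who only spies on the network never sees k or a directly.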

SLIDE 42

Constant-time software

Large portion of CPU hardware: optimizations depending on addresses of memory locations.

Consider data caching, instruction caching, parallel cache banks, store-to-load forwarding, branch prediction, etc.

Many attacks (e.g. TLBleed from 2018 Gras–Razavi–Bos–Giuffrida) show that this portion of the CPU has trouble keeping secrets.

SLIDE 48

Typical literature on this topic:

Understand this portion of CPU. But details are often proprietary, not exposed to security review.

Try to push attacks further. This becomes very complicated.

Tweak the attacked software to try to stop the known attacks.

For researchers: This is great!

For auditors: This is a nightmare. Many years of security failures. No confidence in future security.

SLIDE 54

The “constant-time” solution: Don’t give any secrets to this portion of the CPU. (1987 Goldreich, 1990 Ostrovsky: Oblivious RAM; 2004 Bernstein: domain-specific for better speed)

TCB analysis: Need this portion of the CPU to be correct, but don’t need it to keep secrets. Makes auditing much easier.

Good match for attitude and experience of CPU designers: e.g., Intel issues errata for correctness bugs, not for information leaks.
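One common reading of “don’t give any secrets to this portion of the CPU” is that memory addresses and branch conditions must not depend on secret data. The sketch below contrasts a table lookup indexed by a secret with a scan that touches every entry. Python is used only for readability and makes no timing guarantees of its own; the function names and the 32-bit value range are assumptions for the example.

```python
def lookup_leaky(table, secret_index):
    # The memory address read here depends on the secret index,
    # so caches and similar hardware get to see the secret.
    return table[secret_index]


def equal_mask(x, y):
    # Returns 0xFFFFFFFF if x == y, else 0, without branching on x or y.
    # Assumes 0 <= x, y < 2**32.
    diff = x ^ y
    is_zero = ((diff - 1) >> 63) & 1  # 1 only when diff == 0
    return -is_zero & 0xFFFFFFFF


def lookup_scan(table, secret_index):
    # Reads every entry in a fixed order; the secret only affects
    # arithmetic on values, never which address is accessed.
    # Assumes table entries are in the range 0 .. 2**32 - 1.
    result = 0
    for i, value in enumerate(table):
        result |= value & equal_mask(i, secret_index)
    return result
```

Both functions return the same value; the scan pays for reading the whole table, which is the kind of cost that domain-specific constant-time designs try to reduce.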

SLIDE 59 11

The “constant-time” solution: Don’t give any secrets to this portion of the CPU. (1987 Goldreich, 1990 Ostrovsky: Oblivious RAM; 2004 Bernstein: domain-specific for better speed) TCB analysis: Need this portion

- f the CPU to be correct, but

don’t need it to keep secrets. Makes auditing much easier. Good match for attitude and experience of CPU designers: e.g., Intel issues errata for correctness bugs, not for information leaks.

12

Case study: Constant-time sorting Serious risk within 10 years: Attacker has quantum computer breaking today’s most popular public-key crypto (RSA and ECC; e.g., finding a given aG). 2017: Hundreds of people submit 69 complete proposals to international competition for post-quantum crypto standards.

SLIDE 60 11

The “constant-time” solution: Don’t give any secrets to this portion of the CPU. (1987 Goldreich, 1990 Ostrovsky: Oblivious RAM; 2004 Bernstein: domain-specific for better speed) TCB analysis: Need this portion

- f the CPU to be correct, but

don’t need it to keep secrets. Makes auditing much easier. Good match for attitude and experience of CPU designers: e.g., Intel issues errata for correctness bugs, not for information leaks.

12

Case study: Constant-time sorting

Serious risk within 10 years: Attacker has quantum computer breaking today’s most popular public-key crypto (RSA and ECC; e.g., finding $a$ given $aG$).

2017: Hundreds of people submit 69 complete proposals to international competition for post-quantum crypto standards.

Subroutine in some submissions: sort array of secret integers. E.g., sort 768 32-bit integers.

13

How to sort secret data without any secret addresses?

Typical sorting algorithms (merge sort, quicksort, etc.) choose load/store addresses based on secret data. Usually also branch based on secret data.

One submission to competition: “Radix sort is used as constant-time sorting algorithm.” Some versions of radix sort avoid secret branches. But data addresses in radix sort still depend on secrets.
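To make the radix-sort leak concrete, here is a minimal sketch of one counting pass of a byte-wise radix sort. It is not taken from any submission; the function name `count_low_bytes` is hypothetical and chosen only for illustration. No branch depends on the secret values, yet the array index does.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: histogram of the low byte of each secret input,
   as in the first pass of a byte-wise radix sort. */
void count_low_bytes(size_t count[256], const int32_t *x, size_t n)
{
  size_t i;
  assert(count != NULL);                    /* pointer is public */
  for (i = 0; i < 256; ++i) count[i] = 0;   /* public loop bounds */
  for (i = 0; i < n; ++i) {                 /* no secret-dependent branch */
    uint32_t digit = (uint32_t)x[i] & 255;  /* secret value */
    ++count[digit];                         /* leak: address depends on secret */
  }
}
```

The loop structure depends only on `n`, but `count[digit]` touches a memory address chosen by the secret, which is exactly the kind of access the constant-time approach rules out.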

14

Foundation of solution: a comparator sorting 2 integers.

[Diagram: comparator with inputs x, y and outputs min{x, y}, max{x, y}.]

Easy constant-time exercise in C. Warning: C standard allows compiler to screw this up. Even easier exercise in asm.
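One way to do the C exercise is sketched below. This is an illustrative branch-free comparator, not the author's code; the name `minmax` matches the later slide, but the body here is an assumption. It relies on the common (implementation-defined before C23) behavior that converting a `uint32_t` back to `int32_t` preserves the bit pattern.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Sketch of a branch-free comparator: afterwards *x <= *y.
   The compiler is still allowed to reintroduce a branch, so the
   generated machine code needs to be inspected. */
void minmax(int32_t *x, int32_t *y)
{
  assert(x != NULL && y != NULL);            /* pointers are public */
  uint32_t xi = (uint32_t)*x;
  uint32_t yi = (uint32_t)*y;
  /* Sign-extend to 64 bits so y - x cannot wrap; top bit set iff y < x. */
  uint64_t d = (uint64_t)(int64_t)*y - (uint64_t)(int64_t)*x;
  uint32_t mask = (uint32_t)(0 - (d >> 63)); /* all ones iff y < x */
  uint32_t c = mask & (xi ^ yi);             /* swap pattern, or zero */
  *x = (int32_t)(xi ^ c);
  *y = (int32_t)(yi ^ c);
}
```

Both outputs are always written, and the only data-dependent quantity is the mask, which is used as an operand rather than as a branch condition or an address.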

15

Combine comparators into a sorting network for more inputs. Example of a sorting network:

SLIDE 85 16

Positions of comparators in a sorting network are independent of the input. Naturally constant-time.

But $(n^2 - n)/2$ comparators produce complaints about performance as $n$ increases.

Speed is a serious issue in the post-quantum competition. “Cost” is evaluation criterion; “we’d like to stress this once again on the forum that we’d really like to see more platform-optimized implementations”; etc.
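As an illustration of fixed comparator positions, the sketch below assumes a bubble-sort-shaped network, which is one layout with exactly $(n^2 - n)/2$ comparators (the slide's own picture may differ). The function name `quadratic_network_sort` and the comparator body are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* Branch-free comparator sketch: afterwards *x <= *y. */
static void minmax(int32_t *x, int32_t *y)
{
  uint32_t xi = (uint32_t)*x, yi = (uint32_t)*y;
  uint64_t d = (uint64_t)(int64_t)*y - (uint64_t)(int64_t)*x;
  uint32_t c = (uint32_t)(0 - (d >> 63)) & (xi ^ yi);
  *x = (int32_t)(xi ^ c);
  *y = (int32_t)(yi ^ c);
}

/* Sorts x[0..n-1] and returns the number of comparators applied.
   The sequence of positions (j, j+1) depends only on the public n. */
long long quadratic_network_sort(int32_t *x, long long n)
{
  long long i, j, comparators = 0;
  assert(n >= 0);                    /* n is public */
  for (i = n - 1; i > 0; --i)
    for (j = 0; j < i; ++j) {
      minmax(x + j, x + j + 1);
      ++comparators;
    }
  return comparators;
}
```

For `n = 5` this applies 4 + 3 + 2 + 1 = 10 comparators, matching $(5^2 - 5)/2$; the quadratic growth is what makes this layout unattractive for larger arrays.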

17

void int32_sort(int32 *x,int64 n)
{ int64 t,p,q,i;
  if (n < 2) return;
  /* t: largest power of 2 that is smaller than n */
  t = 1;
  while (t < n - t) t += t;
  for (p = t;p > 0;p >>= 1) {
    /* compare elements at distance p */
    for (i = 0;i < n - p;++i)
      if (!(i & p))
        minmax(x+i,x+i+p);
    /* merge steps at distances q = t, t/2, ..., 2p */
    for (q = t;q > p;q >>= 1)
      for (i = 0;i < n - q;++i)
        if (!(i & p))
          minmax(x+i+p,x+i+q);
  }
}

18

Previous slide: C translation of 1973 Knuth “merge exchange”, which is a simplified version of 1968 Batcher “odd-even merge” sorting networks.

$\approx n(\log_2 n)^2/4$ comparators. Much faster than bubble sort.

Warning: many other descriptions of Batcher’s sorting networks require $n$ to be a power of 2. Also, Wikipedia says “Sorting networks ... are not capable of handling arbitrarily large inputs.”
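The merge-exchange loop from the previous slide can be tried on lengths that are not powers of 2 with a self-contained sketch like the following. The typedefs (mapping the slide's `int32` and `int64` to `int32_t` and `int64_t`) and the body of `minmax` are assumptions added for illustration; only the loop structure of `int32_sort` comes from the slide.

```c
#include <assert.h>
#include <stdint.h>

typedef int32_t int32;   /* assumption: the slide's int32 */
typedef int64_t int64;   /* assumption: the slide's int64 */

/* Branch-free comparator sketch: afterwards *x <= *y. */
static void minmax(int32 *x, int32 *y)
{
  uint32_t xi = (uint32_t)*x, yi = (uint32_t)*y;
  uint64_t d = (uint64_t)(int64)*y - (uint64_t)(int64)*x;
  uint32_t c = (uint32_t)(0 - (d >> 63)) & (xi ^ yi);
  *x = (int32)(xi ^ c);
  *y = (int32)(yi ^ c);
}

/* Merge exchange: every branch and address depends only on n. */
void int32_sort(int32 *x, int64 n)
{
  int64 t, p, q, i;
  assert(n >= 0);                    /* n is public */
  if (n < 2) return;
  t = 1;
  while (t < n - t) t += t;          /* largest power of 2 below n */
  for (p = t; p > 0; p >>= 1) {
    for (i = 0; i < n - p; ++i)
      if (!(i & p))
        minmax(x + i, x + i + p);
    for (q = t; q > p; q >>= 1)
      for (i = 0; i < n - q; ++i)
        if (!(i & p))
          minmax(x + i + p, x + i + q);
  }
}
```

A call such as `int32_sort(x, 768)` covers the array size mentioned in the case study; 768 is not a power of 2, and no padding is needed.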

19

This constant-time sorting code
  ↓ vectorization (for Haswell)
Constant-time sorting code included in 2017 Bernstein–Chuengsatiansup–Lange–van Vredendaal “NTRU Prime” software release
  ↓ revamped for higher speed
“djbsort” constant-time sorting code

20

The slowdown for constant time

Massive fast-sorting literature.

2015 Gueron–Krasnov: AVX and AVX2 (Haswell) optimization of quicksort. For 32-bit integers: ≈45 cycles/byte for $n \approx 2^{10}$, ≈55 cycles/byte for $n \approx 2^{20}$.

Slower than “the radix sort implemented of IPP, which is the fastest in-memory sort we are aware of”: 32, 40 cycles/byte.

IPP: Intel’s Integrated Performance Primitives library.

21

Constant-time results, again on Haswell CPU core:

2017 BCLvV: 6.5 cycles/byte for $n \approx 2^{10}$, 33 cycles/byte for $n \approx 2^{20}$.

2018 djbsort: 2.5 cycles/byte for $n \approx 2^{10}$, 15.5 cycles/byte for $n \approx 2^{20}$.

No slowdown. New speed records!

Warning: Comparison for $n \approx 2^{20}$ involves microarchitecture details beyond Haswell core. Should measure all code on same CPU.

22

How can an n(log n)2 algorithm beat standard n log n algorithms? Answer: well-known trends in CPU design, reflecting fundamental hardware costs

Every cycle, Haswell core can do 8 “min” ops on 32-bit integers + 8 “max” ops on 32-bit integers.

SLIDE 112 21

How can an n(log n)^2 algorithm beat standard n log n algorithms?
Answer: well-known trends in CPU design, reflecting fundamental hardware costs of various operations.

Every cycle, Haswell core can do 8 “min” ops on 32-bit integers + 8 “max” ops on 32-bit integers.
Loading a 32-bit integer from a random address: much slower.
Conditional branch: much slower.
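To make the min/max point concrete, here is a minimal sketch in portable C of a sorting network built only from compare-exchange steps. It is a plain bitonic network, not djbsort's actual code; the names `minmax` and `bitonic_sort` are hypothetical. It assumes n is a power of 2 and two's-complement `int32_t`. The C standard does not promise that the comparison compiles without a branch, so treat the constant-time property as an assumption to be checked on the generated machine code.

```c
#include <stddef.h>
#include <stdint.h>

/* Compare-exchange without a data-dependent branch in the source:
   afterwards *a holds the minimum and *b the maximum.
   Assumes two's-complement int32_t (unsigned-to-signed conversion wraps). */
static void minmax(int32_t *a, int32_t *b)
{
    uint32_t x = (uint32_t)*a, y = (uint32_t)*b;
    uint32_t mask = 0u - (uint32_t)(*b < *a);  /* all ones iff a swap is needed */
    uint32_t t = (x ^ y) & mask;
    *a = (int32_t)(x ^ t);
    *b = (int32_t)(y ^ t);
}

/* Bitonic sorting network for n a power of 2.
   Which index pairs get compared depends only on n, never on the data,
   so there are no data-dependent loads or branches.
   Returns the number of compare-exchange steps performed. */
static size_t bitonic_sort(int32_t *x, size_t n)
{
    size_t count = 0;
    for (size_t k = 2; k <= n; k <<= 1)          /* size of merged blocks */
        for (size_t j = k >> 1; j > 0; j >>= 1)  /* distance between partners */
            for (size_t i = 0; i < n; i++) {
                size_t l = i ^ j;
                if (l > i) {                     /* index-only test */
                    if ((i & k) == 0) minmax(&x[i], &x[l]);  /* ascending block */
                    else              minmax(&x[l], &x[i]);  /* descending block */
                    count++;
                }
            }
    return count;
}
```

For n = 2^m this network performs (n/2) · m(m+1)/2 compare-exchanges, which is the n(log n)^2 growth in question. Each step maps onto the cheap min and max operations, and on a vector unit many such steps can run per cycle.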

SLIDE 117 22

Verification
Sorting software is in the TCB. Does it work correctly?
Test the sorting software on many random inputs, increasing inputs, decreasing inputs. Seems to work.
But are there occasional inputs where this sorting software fails to sort correctly?
History: Many security problems involve occasional inputs where TCB works incorrectly.
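The testing step described above could look roughly like the following sketch. Everything in it is a stand-in: `sort_under_test` is a simple odd-even transposition network rather than the real sorting code, and `run_tests` is a hypothetical harness that compares its output with the C library's `qsort` on random, increasing, and decreasing inputs.

```c
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

/* Stand-in for the sort being tested: odd-even transposition network. */
static void sort_under_test(int32_t *x, size_t n)
{
    for (size_t round = 0; round < n; round++)
        for (size_t i = round & 1; i + 1 < n; i += 2) {
            int32_t lo = x[i] < x[i + 1] ? x[i] : x[i + 1];
            int32_t hi = x[i] < x[i + 1] ? x[i + 1] : x[i];
            x[i] = lo;
            x[i + 1] = hi;
        }
}

static int cmp_int32(const void *p, const void *q)
{
    int32_t a = *(const int32_t *)p, b = *(const int32_t *)q;
    return (a > b) - (a < b);
}

/* Returns 1 if sort_under_test and qsort agree on x[0..n-1], else 0. */
static int agrees_with_qsort(const int32_t *x, size_t n)
{
    int32_t *a = malloc(n * sizeof *a), *b = malloc(n * sizeof *b);
    if (!a || !b) { free(a); free(b); return 0; }
    memcpy(a, x, n * sizeof *a);
    memcpy(b, x, n * sizeof *b);
    sort_under_test(a, n);
    qsort(b, n, sizeof *b, cmp_int32);
    int ok = memcmp(a, b, n * sizeof *a) == 0;
    free(a);
    free(b);
    return ok;
}

/* Tries `trials` random inputs plus one increasing and one decreasing
   input of length n (n >= 1). Returns the number of failing inputs,
   or -1 if memory could not be allocated. */
static int run_tests(size_t n, int trials)
{
    int failures = 0;
    int32_t *x = malloc(n * sizeof *x);
    if (!x) return -1;
    for (int t = 0; t < trials; t++) {
        for (size_t i = 0; i < n; i++) x[i] = (int32_t)(rand() - RAND_MAX / 2);
        failures += !agrees_with_qsort(x, n);
    }
    for (size_t i = 0; i < n; i++) x[i] = (int32_t)i;        /* increasing */
    failures += !agrees_with_qsort(x, n);
    for (size_t i = 0; i < n; i++) x[i] = (int32_t)(n - i);  /* decreasing */
    failures += !agrees_with_qsort(x, n);
    free(x);
    return failures;
}
```

A result of 0 from `run_tests(768, 1000)` only says that none of the inputs tried failed. It says nothing about the rare inputs that were never tried, which is the gap verification is meant to close.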

SLIDE 127 24

For each used n (e.g., 768):

C code
→ normal compiler → machine code
→ symbolic execution → fully unrolled code
→ new peephole optimizer → unrolled min-max code
→ new sorting verifier → yes, code works

Symbolic execution: use existing “angr” library, with tiny new patches for eliminating byte splitting, adding a few missing vector instructions.
Peephole optimizer: recognize instruction patterns equivalent to min, max.
Sorting verifier: decompose DAG into merging networks. Verify each merging network using generalization of 2007 Even–Levi–Litman, correction of 1990 Chung–Ravikumar.
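To illustrate what "verifying unrolled min-max code" means, here is a small sketch that checks a comparator network by brute force using the classical 0-1 principle: a network of min/max steps sorts all inputs if and only if it sorts every input made of 0s and 1s. This is not the Even–Levi–Litman-style method named above. It is a deliberately simpler technique that needs 2^n trials, so it is only usable for tiny n. The names `struct comparator` and `network_sorts` are hypothetical.

```c
#include <stddef.h>
#include <stdint.h>

/* One min/max step: afterwards wire `lo` carries the minimum
   and wire `hi` the maximum of the two wires. */
struct comparator { unsigned lo, hi; };

/* Exhaustive 0-1 principle check for a network on n wires (1 <= n <= 24).
   Bit i of `state` is the 0/1 value currently on wire i.
   Returns 1 if every 0-1 input leaves the network in ascending order
   (wire 0 smallest), else 0. */
static int network_sorts(const struct comparator *net, size_t len, unsigned n)
{
    if (n == 0 || n > 24) return 0;  /* outside what this sketch handles */
    for (uint32_t input = 0; input < (UINT32_C(1) << n); input++) {
        uint32_t state = input;
        for (size_t k = 0; k < len; k++) {
            uint32_t a = (state >> net[k].lo) & 1, b = (state >> net[k].hi) & 1;
            uint32_t mn = a & b, mx = a | b;  /* min and max of two bits */
            state &= ~((UINT32_C(1) << net[k].lo) | (UINT32_C(1) << net[k].hi));
            state |= (mn << net[k].lo) | (mx << net[k].hi);
        }
        /* Unsorted iff some wire i holds 1 while wire i+1 holds 0. */
        uint32_t descents = state & ~(state >> 1) & ((UINT32_C(1) << (n - 1)) - 1);
        if (descents != 0) return 0;
    }
    return 1;
}
```

For example, the three-step network (0,1), (1,2), (0,1) passes on 3 wires, while dropping the last step makes the check fail. For n = 768 there are 2^768 such inputs, so exhaustive checking is out of reach. That is why the verifier described above works on the structure of the network (merging networks) instead.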

SLIDE 129 24

First djbsort release, verified int32 on A
https://sorting.cr.yp.to
Includes the sorting
automatic build-time
simple benchmarking
verification tools.
Web site shows how to use the verification tools.
Next release planned: verified ARM NEON and verified portable

SLIDE 130 24