Semantic Segmentation Dr. Eyal Gruss Director of AI, Flatspace

Eyal Gruss
• Talpiyot
• PhD Physics
• Machine Learning: Researcher • Consultant • Entrepreneur
• Digital Artist

Flatspace
An AI-powered service that creates a VR model from a simple floorplan.
Bitmap floorplan -> Architectural interpretation (using deep neural networks) -> 3D model (for photorealistic VR experience)
Demo video: http://flatspace.xyz

Image Recognition (ImageNet ILSVRC)
[Chart: top-5 classification error by year; 1.2M train images, 100k test images, 1000 categories]
• 2010: 28.19%
• 2011: 25.77%
• 2012: 16.42% (AlexNet; move to deep neural networks)
• 2013: 11.74%
• 2014: 6.66% (GoogLeNet)
• 2015: 3.57% (Microsoft, Residual Net)
• 2016: 2.99% (Trimps-Soushen, Ministry of Public Security, China)
• 2017: 2.25% (Momenta/Oxford)
• Human level (Karpathy): 5.10%

Object Detection, Localization and Recognition (ImageNet)
googleresearch.blogspot.com/2014/09/building-deeper-understanding-of-images.html (Szegedy et al., GoogLeNet)
[Figure labels: Concurrence, Tracking, Out of context, Counting, Occlusion]
Live: • VGG • YOLO • YOLO v2 • LeCun

Multi Instance Semantic Segmentation Li et al., arxiv.org/abs/1611.07709 Won the COCO 2016 Detection Challenge (for segmentation)

Adversarial Perturbations Against Semantic Segmentation Fischer et al., arxiv.org/abs/1703.01101 Xie et al., arxiv.org/abs/1703.08603 Metzen et al., arxiv.org/abs/1704.05712 Cisse et al., arxiv.org/abs/1707.05373

Other related tasks
• Edge detection
• Semantic edge detection
• Surface normals
• Matting / objectness (foreground/background)
• Saliency / memorability
• Pose estimation
• Depth estimation
• Optical flow interpolation and estimation
• Motion prediction
• E.g. Eigen and Fergus, UberNet, PixelNet combine several of the above

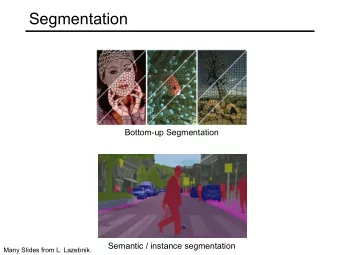

This talk: Semantic Segmentation
aka: scene labeling / scene parsing / dense prediction / dense labeling / pixel-level classification
[Figure panels: (d) input, (e) semantic segmentation, (f) naive instance segmentation, (g) instance segmentation]

Datasets and use cases
• General
  • Pascal VOC 2012
  • MS COCO (evaluation only for instance segmentation)
  • ADE20K / SceneParse150K (all pixels annotated)
  • DAVIS 2017 (video; review)
• Urban (e.g. for autonomous vehicles)
  • Cityscapes (all pixels annotated)
  • CMP Facades (strong priors)
  • KITTI road/lane
  • CamVid (all pixels annotated, video)
• Aerial / Satellite
  • ISPRS Potsdam and Vaihingen
  • DSTL Kaggle (multi-modal)
• Human parsing (LIP, MHP)
• Medical imaging (can be 2.5D/multi-view)
• More: riemenschneider.hayko.at/vision/dataset

Pascal VOC 2012
• Train+Validation: 11,530 images, 6,929 segmentations
• Classes: 20 + background
• github.com/nightrome/really-awesome-semantic-segmentation

Evaluation metrics
• Pixel accuracy (dominated by background class)
• Mean accuracy over classes (individual class recall does not penalize false positives; must include background class)
• Jaccard index = Intersection over Union (IoU) = |GT ∩ Pred| / |GT ∪ Pred| = TP / (TP + FN + FP)
  • IoU <= Recall = TP / |GT| and IoU <= Precision = TP / |Pred|
  • Usually: mean over classes, on the whole dataset
  • Can include or exclude the background class
  • Can be mean over images instead of whole dataset
  • Can be frequency weighted (unbalanced, similar to pixel accuracy)
  • Can be weighted by inverse instance size (Cityscapes, important in traffic use cases)
  • Can be averaged with e.g. pixel accuracy (ADE20K)
• Dice index = F1 score = 2|GT ∩ Pred| / (|GT| + |Pred|) = 2TP / (2TP + FN + FP)
  • = Harmonic mean of Recall and Precision
  • = 2IoU / (1 + IoU), monotonic with IoU
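The per-class IoU and Dice definitions above can be sketched in a few lines. This is a minimal illustration on flat label lists; the function names and the toy labels are assumptions made for the example, not part of the talk.

```python
def confusion_counts(gt, pred, num_classes):
    """Per-class TP, FP, FN from flat lists of integer class labels."""
    tp = [0] * num_classes
    fp = [0] * num_classes
    fn = [0] * num_classes
    for g, p in zip(gt, pred):
        if g == p:
            tp[g] += 1
        else:
            fn[g] += 1  # pixel of class g was missed
            fp[p] += 1  # pixel was wrongly claimed by class p
    return tp, fp, fn


def iou_and_dice(gt, pred, num_classes):
    """Per-class IoU = TP/(TP+FN+FP) and Dice = 2TP/(2TP+FN+FP)."""
    tp, fp, fn = confusion_counts(gt, pred, num_classes)
    ious, dices = [], []
    for c in range(num_classes):
        union = tp[c] + fp[c] + fn[c]
        if union == 0:  # class absent from both GT and prediction: skip it
            continue
        ious.append(tp[c] / union)
        dices.append(2 * tp[c] / (2 * tp[c] + fp[c] + fn[c]))
    return ious, dices


# Toy example: 8 pixels, 2 classes (0 = background).
gt = [0, 0, 0, 0, 1, 1, 1, 1]
pred = [0, 0, 0, 1, 1, 1, 0, 0]
ious, dices = iou_and_dice(gt, pred, num_classes=2)
mean_iou = sum(ious) / len(ious)
```

Here class 0 has TP=3, FP=2, FN=1 (IoU 0.5) and class 1 has TP=2, FP=1, FN=2 (IoU 0.4). Accumulating the counts over the whole dataset before dividing gives the "mean over classes, on the whole dataset" variant.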

Evaluation metrics
• Trimap IoU around boundaries, 4/8 px (Krähenbühl and Koltun, Kohli et al.)
• Boundary F1 (BF): nearest boundary pixel distance (Csurka et al.)
  • For some distance error tolerance, e.g. 0.75% of the image diagonal
  • Can be averaged with IoU (DAVIS)
• Average precision (AP) = area under the precision-recall curve (MS COCO)
  • Here precision and recall are instance-level, given some IoU threshold, e.g. 0.5
  • Can be additionally averaged over different thresholds (e.g. 0.5 - 0.95 in steps of 0.05)
  • Multiple detections (instance fragmentation) are counted as false positives beyond the best
  • Primary metric for instance segmentation (pixel-level metrics can be ambiguous)
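A rough sketch of instance-level AP at a single IoU threshold follows. It assumes the mask IoUs between detections and ground-truth instances are already computed, and it uses the plain (non-interpolated) area under the precision-recall curve; the official MS COCO evaluation interpolates precision and differs in details. The function name and toy numbers are hypothetical.

```python
def average_precision(scores, ious, num_gt, thresh=0.5):
    """scores[i]: confidence of detection i.
    ious[i][j]: mask IoU between detection i and ground-truth instance j."""
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    matched = set()  # ground-truth instances already claimed
    tp_cum = 0
    ap = 0.0
    for rank, i in enumerate(order, start=1):
        # Best still-unmatched ground-truth instance at or above the threshold.
        best_j, best_iou = None, thresh
        for j in range(num_gt):
            if j not in matched and ious[i][j] >= best_iou:
                best_j, best_iou = j, ious[i][j]
        if best_j is None:
            continue  # false positive (includes duplicate detections)
        matched.add(best_j)
        tp_cum += 1
        # Each true positive raises recall by 1/num_gt at the current precision.
        ap += (tp_cum / rank) / num_gt
    return ap


# Toy example: 2 ground-truth instances, 3 detections.
scores = [0.9, 0.8, 0.7]
ious = [[0.8, 0.0],   # matches instance 0
        [0.6, 0.1],   # second hit on instance 0: counted as false positive
        [0.0, 0.7]]   # matches instance 1
ap_50 = average_precision(scores, ious, num_gt=2, thresh=0.5)
```

Averaging the result over thresholds 0.5, 0.55, ..., 0.95 gives the threshold-averaged variant mentioned above.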

Loss
• Cross entropy = $-\sum_{\text{classes}} \sum_{\text{pixels}} p \log q$
  • p = targets; q = output probabilities
  • Can be weighted by inverse class size
  • Can be weighted to emphasize areas around edges (U-Net, Meyer)
• IoU approximated with probabilities = $\sum_{\text{classes}} \frac{\sum_{\text{pixels}} p q}{\sum_{\text{pixels}} (p + q - p q)}$
  • Approximation is needed since IoU is discrete
  • Makes sense since this is our evaluation metric
  • Multiclass formulation is balanced over class sizes
  • Rediscovered in literature from time to time [1-16]
  • Visualead reported mixed results
  • Loss =
    • - IoU [1 2 3 4 5 6]
    • - Dice [7 8 9 10]
    • - Tversky generalization [11]
    • 0.1 * CE + 0.9 * (1 - Dice) [12]
    • CE - log(IoU) [13]
    • Other approximations [14 15 16 (TBD in TF)]
• Total variation smoothing = $\sum_{\text{classes}} \sum_{x,y} \left( |q_{x+1,y} - q_{x,y}| + |q_{x,y+1} - q_{x,y}| \right)$
• Adversarial (later)
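The cross entropy and the probabilistic IoU approximation above can be written out directly. This is a pure-Python sketch for readability (a real training loop would use a tensor library); the function names, the `1 - mean IoU` normalization and the toy values are assumptions for illustration.

```python
import math


def cross_entropy(p, q, eps=1e-12):
    """p, q: one entry per pixel, each a per-class list (targets, probabilities)."""
    return -sum(pc * math.log(qc + eps)
                for pp, qq in zip(p, q) for pc, qc in zip(pp, qq))


def soft_iou(p, q):
    """Sum over classes of (sum_pixels p*q) / (sum_pixels (p + q - p*q))."""
    total = 0.0
    for c in range(len(p[0])):
        inter = sum(pp[c] * qq[c] for pp, qq in zip(p, q))
        union = sum(pp[c] + qq[c] - pp[c] * qq[c] for pp, qq in zip(p, q))
        total += inter / union if union > 0 else 0.0
    return total


def iou_loss(p, q):
    """Assumed normalization: 1 - mean soft IoU, so a perfect prediction gives 0."""
    return 1.0 - soft_iou(p, q) / len(p[0])


# Two pixels, two classes: one-hot targets and softmax-like outputs.
p = [[1, 0], [0, 1]]
q = [[0.8, 0.2], [0.4, 0.6]]
ce = cross_entropy(p, q)
loss = iou_loss(p, q)
```

Unlike the discrete IoU, `soft_iou` varies smoothly with q, which is what makes it usable as a training objective.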

Architectures
1. Patchwise CNN
2. FCN
3. DeepLab
4. DeconvNet
5. U-Net
6. SegNet
7. Dilated Convolutions (Yu and Koltun)
8. 100-Layer Tiramisu (DenseNets)
9. Wide ResNet
10. PSPNet
11. Adversarial
12. PolygonRNN
13. Mask R-CNN
14. Semi-supervised with unsupervised loss

Patchwise CNN
• Ning et al., http://yann.lecun.com/exdb/publis/pdf/ning-05.pdf
• Ciresan et al., people.idsia.ch/%7Ejuergen/nips2012.pdf
• A sliding window CNN classifies each pixel in turn

Fully Convolutional NN
• cs231n.github.io/convolutional-networks/#converting-fc-layers-to-conv-layers
• Start from a CNN classifier
• Convert fully connected to conv (with filter size = input volume, no padding):
  • CNN -> 7*7*512 -> fc(4096) -> 4096 -> fc(1000) -> 1000
  • CNN -> 7*7*512 -> conv(7*7*4096) -> 1*1*4096 -> conv(1*1*1000) -> 1*1*1000
• Can take arbitrarily larger input:
  • 224*224 -> 7*7*512 -> 1*1*1000
  • 384*384 -> 12*12*512 -> 6*6*1000
• Equivalent to sliding a patchwise CNN, but with a single pass that is much more efficient due to convolution sharing
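The size arithmetic and the fc/conv equivalence above can be checked on a toy example. The sketch assumes a backbone with total stride 32 and a 7*7 first fc layer (the VGG-style numbers on the slide); the helper names and the 2*2 toy weights are hypothetical.

```python
def fcn_sizes(input_size, backbone_stride=32, fc_kernel=7):
    """Feature-map size after the backbone, then after the converted first fc layer."""
    feat = input_size // backbone_stride
    return feat, feat - fc_kernel + 1  # unpadded conv shrinks by kernel - 1


def fc(patch, w):
    """One fully connected unit: dot product of a k*k patch with k*k weights."""
    return sum(pv * wv for prow, wrow in zip(patch, w) for pv, wv in zip(prow, wrow))


def conv_valid(img, w):
    """The same weights applied as an unpadded convolution (cross-correlation)."""
    k = len(w)
    n = len(img) - k + 1
    return [[fc([row[x:x + k] for row in img[y:y + k]], w) for x in range(n)]
            for y in range(n)]


sizes_224 = fcn_sizes(224)  # (7, 1)
sizes_384 = fcn_sizes(384)  # (12, 6)

# A 2*2 "fc" unit slid over a 3*3 input gives a 2*2 map of fc outputs.
w = [[1, 2], [3, 4]]
img = [[1, 0, 2], [0, 1, 0], [3, 0, 1]]
out = conv_valid(img, w)  # [[5, 7], [11, 5]]
```

Each entry of `out` equals the fc unit applied to the corresponding crop, which is the sense in which the converted network matches a sliding patchwise classifier while sharing computation.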

Deconvolution/Upconvolution Layers (Resolution Increasing Convolutions)
• Transposed convolution
  • cs231n.stanford.edu/slides/2017/cs231n_2017_lecture11.pdf
• Fractionally strided convolution
  • github.com/vdumoulin/conv_arithmetic
[Figure labels: input, FC, Stride = 1/2, Stride = 2]
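A 1D sketch may help make the "stride = 1/2" picture concrete: in a transposed convolution each input value stamps a scaled copy of the kernel into the output, and the stride sets how far apart the stamps land. The function name and the toy kernel are assumptions for illustration.

```python
def conv_transpose_1d(x, kernel, stride=2):
    """Transposed (fractionally strided) 1D convolution, no padding."""
    out = [0.0] * ((len(x) - 1) * stride + len(kernel))
    for i, v in enumerate(x):
        for k, w in enumerate(kernel):
            out[i * stride + k] += v * w  # overlapping stamps are summed
    return out


# With a [1, 1] kernel and stride 2 this reduces to nearest-neighbour upsampling.
up = conv_transpose_1d([1.0, 2.0, 3.0], [1.0, 1.0], stride=2)
```

The output length is `(len(x) - 1) * stride + len(kernel)`, so three inputs become six outputs here.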

Fully Convolutional Network (FCN; 2014-11)
• Long et al., arxiv.org/abs/1411.4038
• Shelhamer et al., arxiv.org/abs/1605.06211
• Start from a classification CNN pre-trained on ImageNet (AlexNet/VGG-16/GoogLeNet) and convert fully connected to conv (conv7)
• Replace the final layer with 1*1*21 and add bilinear upsampling to get full spatial output (FCN-32s)
• Add x2 deconv (initialized as bilinear) on conv7 and sum with a conv prediction added to pool4
• Add bilinear upsampling to get full spatial output (FCN-16s). Fine-tune from FCN-32s
• Do similarly for the above fuse and pool3 (FCN-8s)
• Pascal VOC 2012 IoU = 62.2%-67.2% (up from 51.6%)
• 100-175 ms (vs. 50 s)
• 134M params
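"Initialized as bilinear" can be illustrated in 1D: a x2 deconv whose kernel is a triangle filter reproduces linear interpolation away from the borders. The kernel formula below follows the commonly used bilinear-filter initialization; the helper names are hypothetical, and a 2D kernel would be the outer product of two such 1D filters.

```python
def bilinear_kernel_1d(size):
    """Triangle filter of the given size, peak at the kernel centre."""
    factor = (size + 1) // 2
    center = factor - 1 if size % 2 == 1 else factor - 0.5
    return [1 - abs(i - center) / factor for i in range(size)]


def conv_transpose_1d(x, kernel, stride):
    """Transposed 1D convolution, no padding (each input stamps the kernel)."""
    out = [0.0] * ((len(x) - 1) * stride + len(kernel))
    for i, v in enumerate(x):
        for k, w in enumerate(kernel):
            out[i * stride + k] += v * w
    return out


kernel = bilinear_kernel_1d(4)  # [0.25, 0.75, 0.75, 0.25] for x2 upsampling
up = conv_transpose_1d([2.0, 4.0], kernel, stride=2)
```

The two interior outputs, 2.5 and 3.5, are the linear interpolants between 2 and 4; the outer values are attenuated by the border, which implementations usually crop away.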

DeepLab (2014-12)
hole = atrous = dilated convolutions: increase field of view without decreasing resolution or adding parameters
• Chen et al. (Google), arxiv.org/abs/1412.7062
• VGG-16 pre-trained on ImageNet -> fully conv
• Cancel last two max-pool
• Change conv after above to x2/x4 dilated convolutions
• Train with x8 subsampled targets (IoU<90.7%). Infer with bilinear upsampling.
• Fully connected CRF (raw image dependent potential) post-processing in inference (+ 3%-5%)
• Add multi-scale layers fine tuned separately (similar to FCN-8s but with concats and convs)
• Increase dilation for first FC layer to x12 (large field of view) + change FC kernel, filters
• 20.5M params
• Pascal VOC 2012 IoU = 71.6%
• V2: arxiv.org/abs/1606.00915 with ResNet-101 + "atrous spatial pyramid pooling"
  • Pascal VOC 2012 IoU = 79.7%, Cityscapes IoU = 70.4%
• V3: arxiv.org/abs/1706.05587
  • Pascal VOC 2012 IoU = 86.9%, Cityscapes IoU = 81.3% (SOTA 2017)
[Figure labels: Before softmax, After softmax]
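The claim that dilation widens the field of view without adding parameters is easy to see in 1D. This is a minimal sketch with a hypothetical helper name; the same three weights are reused while the taps are spread `dilation` samples apart.

```python
def dilated_conv_1d(x, kernel, dilation=1):
    """Unpadded 1D convolution whose taps are `dilation` samples apart."""
    span = (len(kernel) - 1) * dilation + 1  # field of view of one output
    return [sum(w * x[i + k * dilation] for k, w in enumerate(kernel))
            for i in range(len(x) - span + 1)]


x = list(range(10))
kernel = [1, 1, 1]                             # three parameters in both cases
dense = dilated_conv_1d(x, kernel, dilation=1)  # each output sees 3 samples
holes = dilated_conv_1d(x, kernel, dilation=2)  # each output sees a span of 5
```

With dilation 2 each output is `x[i] + x[i+2] + x[i+4]`, so the span grows from 3 to 5 samples while the output is still produced at every input position (stride 1).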

DeconvNet (2015-05)
• Noh et al., arxiv.org/abs/1505.04366
• VGG-16 pre-trained on ImageNet
• Unpooling layers use saved max pooling indices
• Symmetric encoder-decoder: multiple deconvolutions + BatchNorm + ReLU (no dropout)
• Relies on region proposals. Training with two-stage curriculum learning:
  • 1. Instances cropped to GT bounding boxes * 1.2, all non-class pixels labeled as background
  • 2. Object proposals from edge-box * 1.2
• Inference:
  • Top 50 objectness score of 2000 edge-box object proposals, max per pixel/class before softmax
  • Fully connected CRF post-processing (+ ~1%)
• 252M params
• Pascal VOC 2012 IoU = 70.5%
• Ensemble with FCN-8s = 72.5%
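Unpooling with saved max-pooling indices can be sketched in 1D: the encoder records where each maximum came from, and the decoder writes values back to exactly those positions, leaving zeros elsewhere. Function names and the toy input are assumptions for illustration.

```python
def max_pool_with_indices(x, size=2):
    """Non-overlapping max pooling that also returns the argmax positions."""
    pooled, indices = [], []
    for start in range(0, len(x) - size + 1, size):
        window = x[start:start + size]
        k = max(range(size), key=lambda j: window[j])
        pooled.append(window[k])
        indices.append(start + k)  # position in the original signal
    return pooled, indices


def unpool(values, indices, length):
    """Place each value back at its recorded position; everything else is 0."""
    out = [0] * length
    for v, i in zip(values, indices):
        out[i] = v
    return out


x = [1, 3, 2, 0, 5, 4]
pooled, indices = max_pool_with_indices(x)
restored = unpool(pooled, indices, len(x))
```

The sparse `restored` signal is what the subsequent deconvolution layers densify in the decoder.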

U-Net (2015-05)
• Ronneberger et al., arxiv.org/abs/1505.04597
• No VGG! Not pre-trained!
• Skip connections to keep res.!
• Separate deconv to: learned 2x2 upconv + (3x3 regular conv + ReLU) * 2
• Weighting to emphasize areas around morphological edges
• Implementations I've seen use half the filters and padding dropout
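One reason implementations add padding is visible in the size arithmetic. The sketch below assumes the configuration of the original U-Net paper (4 pooling levels, two unpadded 3x3 convs per level, 2x2 pooling and 2x2 upconv), which the slide does not spell out; the function name and the `padded` flag are hypothetical.

```python
def unet_output_size(input_size, depth=4, convs_per_level=2, kernel=3, padded=False):
    """Spatial output size of a U-Net-style encoder-decoder."""
    shrink = 0 if padded else convs_per_level * (kernel - 1)
    size = input_size
    for _ in range(depth):          # encoder: convs, then 2x2 max pool
        size -= shrink
        if size % 2:
            raise ValueError("feature map size must be even before pooling")
        size //= 2
    size -= shrink                  # bottleneck convs
    for _ in range(depth):          # decoder: 2x2 upconv, then convs
        size = size * 2 - shrink
    return size


unpadded = unet_output_size(572)               # 388, as in the paper's figure
same_size = unet_output_size(512, padded=True)  # padding keeps input size
```

Without padding the output is smaller than the input, so the skip-connection feature maps must be cropped before concatenation; with padding the sizes line up directly.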
